Visiting Lecture Course: Modelling time-varying features of speech: tools and methods

- Michele Gubian

Short CV: Michele Gubian

Michele Gubian obtained a Master of Engineering degree from Polytechnic University of Milan (Italy) in 2004 with final thesis in automatic speech recognition carried out at STMicroelectronics labs. After that he pursued a Doctoral degree in Information and Communication Technology at the university of Trento (Italy), with a thesis on statistical methods for smart sensor design.

Since 2008 Michele works in the academic field of phonetics and psycholinguistics, mainly focusing on statistical methodologies and simulation. Between 2008 and 2014 he was with the Centre for Language and Speech Technology at the university of Nijmegen (the Netherlands), working on two projects. The first one within the Marie Curie Research Training Network Sound2Sense allowed him to develop new ideas for the statistical analysis of speech-related dynamic signals. In the second project Michele worked on a software prototype aimed at simulating some cognitive processes involved in first language acquisition. Between 2014 and 2018 Michele joined the School of Experimental Psychology at Bristol University (UK), where he worked on the development of a software platform for the simulation of cognitive processes involved in visual word recognition. Since 2018 Michele joined the Institute of Phonetics and Speech Processing at LMU Munich (Germany), where he currently works on agent-based simulation of sound change.

Abstract

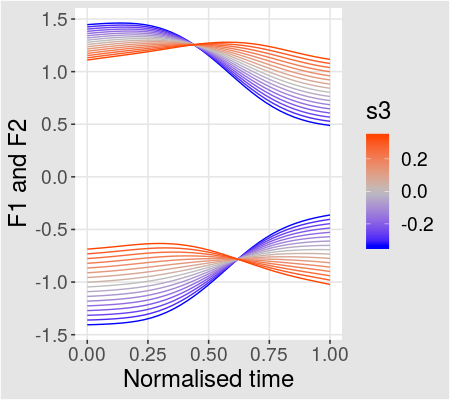

The quantitative analysis of speech production, whether based on acoustic features like formant tracks (e.g. F1 and F2) or on electromagnetic articulography (EMA) or other forms of articulator imaging, involves the exploration, formulation and testing of hypotheses on some dynamic aspects of the involved signals. For example, analysing the degree of diphthongisation of a vowel in different contexts/dialects entails the analysis of (at least) F1 and F2 tracks shapes; similar considerations apply to alignment patterns of pitch accents with respect to syllable boundaries, as well as to the effects of coarticulation on the shape of one or more articulator trajectory, e.g. tongue dorsum reaching the palate to realise a closure.

In this scenario, one needs to tackle a number of technical challenges. First, the analysis has to translate curves or trajectories into quantitaties (vectors) on which statistical modelling can be applied. Such translation should be as data-driven and automated as possible, as opposed to be reliant on manual marking or any other human perception-based intervention. Second, multidimensional signals, like F1 and F2 belonging to the same vowel, need to be treated as a whole whenever possible, as opposed to running independent analyses on each dimension. Third, as signals have different durations and duration is an important aspect of speech production, appropriate methods are required to incorporate duration in the analysis of signal shapes. This is particularly important (and difficult) when signals are long, like in the case of fundamental frequency (f0) contours spanning several syllables, where different syllables can exhibit different duration patterns, while at the same time f0 contours need to be referred to the underlying syllable boundaries as if they were fixed.

The course presents a work flow based on functional principal component analysis (FPCA) that tackles all the above mentioned problems. The advantage of the proposed approach is its simplicity, since FPCA is the only shape-analysis component embedded into an ordinary vector-analysis work flow based on popular tools (e.g. linear mixed-effects models). Like any method, this work flow has also limitations. These will be exposed by comparing it with the currently more popular method based on GAMMs (generalised additive mixed-models), which in turn has other limitations. Possible improvements based on Bayesian approaches will also be sketched.

Learning Goals

Students will learn the theoretical aspects of the methodology mainly by examples, the mathematical aspects will be kept to a minimum (but not zero). Basic calculus helps, but is not mandatory, basic knowledge on linear (mixed-effects) regression is more relevant. The practical aspects will be learned by solving together a number of coding exercises based on R. At the end of the course the students will be able to apply one or more variants of the proposed work flow to their own data, and will be able to choose which approach to use based on research goals and data set characteristics.

Evaluation

Students will be evaluated on an assignemnt, which can be carried out individually or in pairs. All assigments consist of running a complete analysis on a given data set. Variations around this theme are:

- take a published article and reproduce its results (starting from the same data)

- take a published article and run the analysis based on an alternative approach

- take your own data (real or artificial) and run an analysis.

In any case, two elements must be present.

- the complete code that produced the results

- a written explanation about research question, hypotheses, data set, motivation for the approach and an interpretation of results.

While the classes are based on R, students can use other languages (Python, MATLAB, etc.) for the assignment.

Dates

The course takes place in the mornings of 4 consecutive Days in April 2020 (21.4.: 8.30-12.30, 22.4.: 8.00-10:00 & 12.15-15:00, 23.4. 8:00-10.00 & 12.30-14:00, 24.4. 8:30-12.30), the final projects will be presented and discussen on May 7th, 9.00-10.00). ROOM: Seminarraum CGV (ID02104, Inffeldgasse 16c, 8010 Graz).

Registration

Please register via TUGonline. If you are from an Austrian University other than TUGRAZ, please co-register (“MITBELEGEN”) at TUGraz first. This is free of cost, but necessary so you can register and then transfer your credits to your University. TUGonline link: https://online.tugraz.at/tug_online/pl/ui/$ctx/wbLv.wbShowLVDetail?pStpSpNr=233575&pSpracheNr=1