Deep Structured Mixtures of Gaussian Processes

- Published: Sun, Mar 01, 2020

- Tags: rotm

Gaussian Processes (GPs) are powerful non-parametric Bayesian regression models that allow exact posterior inference, but exact inference scales cubically in time and quadratically in memory with the number of training points. To improve the scalability of GPs, approximate posterior inference is frequently employed, and a prominent class of approximation techniques is based on local GP experts. However, the local-expert techniques proposed so far either lack a principled foundation, come with limited approximation guarantees, or lead to intractable models.
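
For reference, the standard exact GP predictive equations make this cost explicit. The notation below is ours, not the paper's: $K$ is the $N \times N$ kernel matrix over the training inputs, $\sigma^2$ the noise variance, $\mathbf{k}_*$ the vector of kernel values between a test input $x_*$ and the training inputs. Factorising $K + \sigma^2 I$ costs $\mathcal{O}(N^3)$ time and $\mathcal{O}(N^2)$ memory; if the data are instead split evenly among $M$ local experts, each expert only factorises an $(N/M) \times (N/M)$ matrix.

```latex
\mu_* = \mathbf{k}_*^\top (K + \sigma^2 I)^{-1} \mathbf{y}, \qquad
\sigma_*^2 = k(x_*, x_*) - \mathbf{k}_*^\top (K + \sigma^2 I)^{-1} \mathbf{k}_*

\text{time: } M \cdot \mathcal{O}\big((N/M)^3\big) = \mathcal{O}\big(N^3 / M^2\big), \qquad
\text{memory: } M \cdot \mathcal{O}\big((N/M)^2\big) = \mathcal{O}\big(N^2 / M\big)
```

For example, with $N = 10{,}000$ points and $M = 100$ experts of 100 points each, the cubic term drops from $10^{12}$ to $100 \cdot 100^3 = 10^{8}$.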

In this paper, we introduce deep structured mixtures of GP experts, a well-principled stochastic process model that (i) allows exact posterior inference, (ii) has attractive computational and memory costs, and (iii) when used as a GP approximation, captures predictive uncertainties consistently better than previous approximations. Furthermore, deep structured mixtures can optionally be fine-tuned locally (regularised using local similarity constraints), which enables the modelling of heteroscedasticity and non-stationarity.
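
To make the idea concrete, here is a deliberately tiny Python sketch in the spirit of the description above; it is not the authors' implementation. One sum node mixes a few alternative ways of splitting a 1-D input domain in two, each split (a product node) places an independent exact GP expert on each side, and the mixture weights are updated exactly by Bayes' rule using each split's marginal likelihood. The squared-exponential kernel, its hyperparameters, the noise variance, the candidate split points and all names (`LeafGP`, `SplitNode`, `MixtureNode`) are assumptions chosen for illustration; a deep model would nest such nodes recursively rather than stopping at one level.

```python
import math

def rbf(a, b, lengthscale=0.5, variance=1.0):
    # Squared-exponential kernel on scalar inputs (assumed kernel and hyperparameters).
    return variance * math.exp(-0.5 * ((a - b) / lengthscale) ** 2)

def cholesky(K):
    # Lower-triangular L with K = L L^T; this is the O(n^3) step for an n-point expert.
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = K[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def tri_solve(L, b):
    # Forward substitution: solve L z = b for lower-triangular L.
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z

class LeafGP:
    """Exact GP expert on the data inside one sub-region (hypothetical name)."""

    def __init__(self, X, y, noise_var=0.01):
        self.X = X
        n = len(X)
        K = [[rbf(X[i], X[j]) + (noise_var if i == j else 0.0) for j in range(n)]
             for i in range(n)]
        self.L = cholesky(K)
        self.z = tri_solve(self.L, y)  # z = L^{-1} y
        # Exact log marginal likelihood log p(y) of this expert's data.
        self.log_ml = (-0.5 * sum(zi * zi for zi in self.z)
                       - sum(math.log(self.L[i][i]) for i in range(n))
                       - 0.5 * n * math.log(2.0 * math.pi))

    def predict(self, x):
        # Posterior mean and variance of the latent function at scalar x.
        v = tri_solve(self.L, [rbf(x, xi) for xi in self.X])
        mean = sum(vi * zi for vi, zi in zip(v, self.z))
        return mean, rbf(x, x) - sum(vi * vi for vi in v)

class SplitNode:
    """Product node: independent experts on x < split and x >= split (hypothetical)."""

    def __init__(self, X, y, split):
        self.split = split
        left = [(a, b) for a, b in zip(X, y) if a < split]
        right = [(a, b) for a, b in zip(X, y) if a >= split]
        self.left = LeafGP([a for a, _ in left], [b for _, b in left])
        self.right = LeafGP([a for a, _ in right], [b for _, b in right])
        # Disjoint regions with independent experts: log-likelihoods add.
        self.log_ml = self.left.log_ml + self.right.log_ml

    def predict(self, x):
        # Route x to the expert whose region contains it.
        return (self.left if x < self.split else self.right).predict(x)

class MixtureNode:
    """Sum node: mixture over alternative splits, uniform prior weights (hypothetical)."""

    def __init__(self, X, y, splits):
        self.children = [SplitNode(X, y, s) for s in splits]
        # Exact posterior weights: prior * marginal likelihood, normalised via log-sum-exp.
        # With a uniform prior, the prior cancels in the normalisation.
        logs = [c.log_ml for c in self.children]
        top = max(logs)
        unnorm = [math.exp(lm - top) for lm in logs]
        total = sum(unnorm)
        self.weights = [u / total for u in unnorm]

    def predict(self, x):
        preds = [c.predict(x) for c in self.children]
        mean = sum(w * mu for w, (mu, _) in zip(self.weights, preds))
        # Law of total variance for a mixture: E[var] + Var[mean].
        second = sum(w * (var + mu * mu) for w, (mu, var) in zip(self.weights, preds))
        return mean, second - mean * mean

X = [i / 10 for i in range(20)]
y = [math.sin(3 * x) for x in X]
model = MixtureNode(X, y, splits=[0.5, 1.0, 1.5])
print([round(w, 3) for w in model.weights])
print(model.predict(0.75))
```

Each expert only factorises the kernel matrix of its own region, and every step, including the mixture weights, is computed in closed form. Routing a test point to the single expert that covers it is also why such models can show small jumps at split boundaries.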

In a variety of experiments, we show that deep structured mixtures have a low approximation error and outperform existing expert-based approaches.

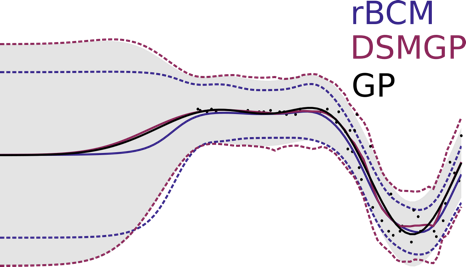

Figure: Qualitative comparison of the state-of-the-art expert-based approach, the robust Bayesian committee machine (rBCM), and our model (DSMGP) against an exact Gaussian process (GP). Although our approach produces slight discontinuities, DSMGPs approximate the exact GP more accurately than rBCMs.

More information can be found in our paper, which will be presented at the International Conference on Artificial Intelligence and Statistics (AISTATS) 2020.
