Result of the Month

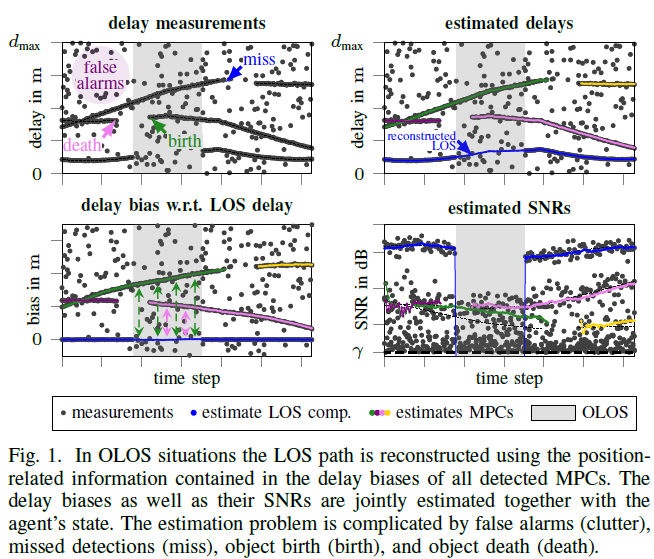

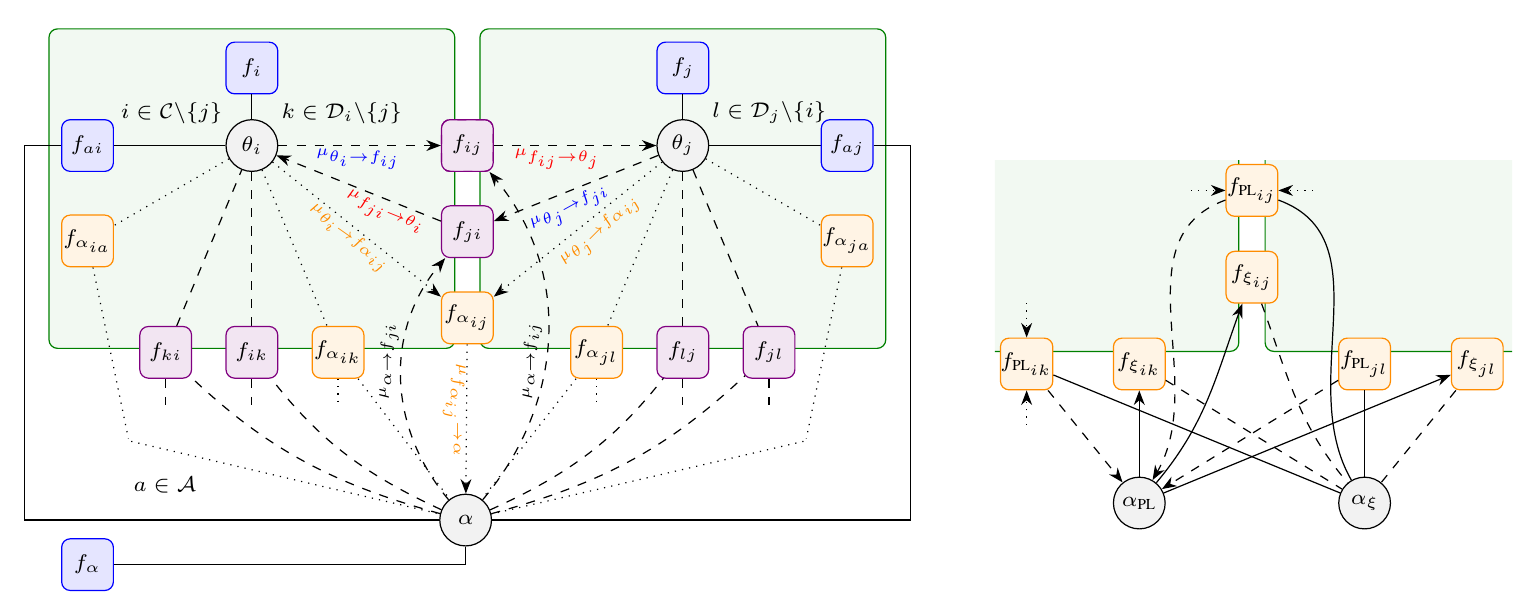

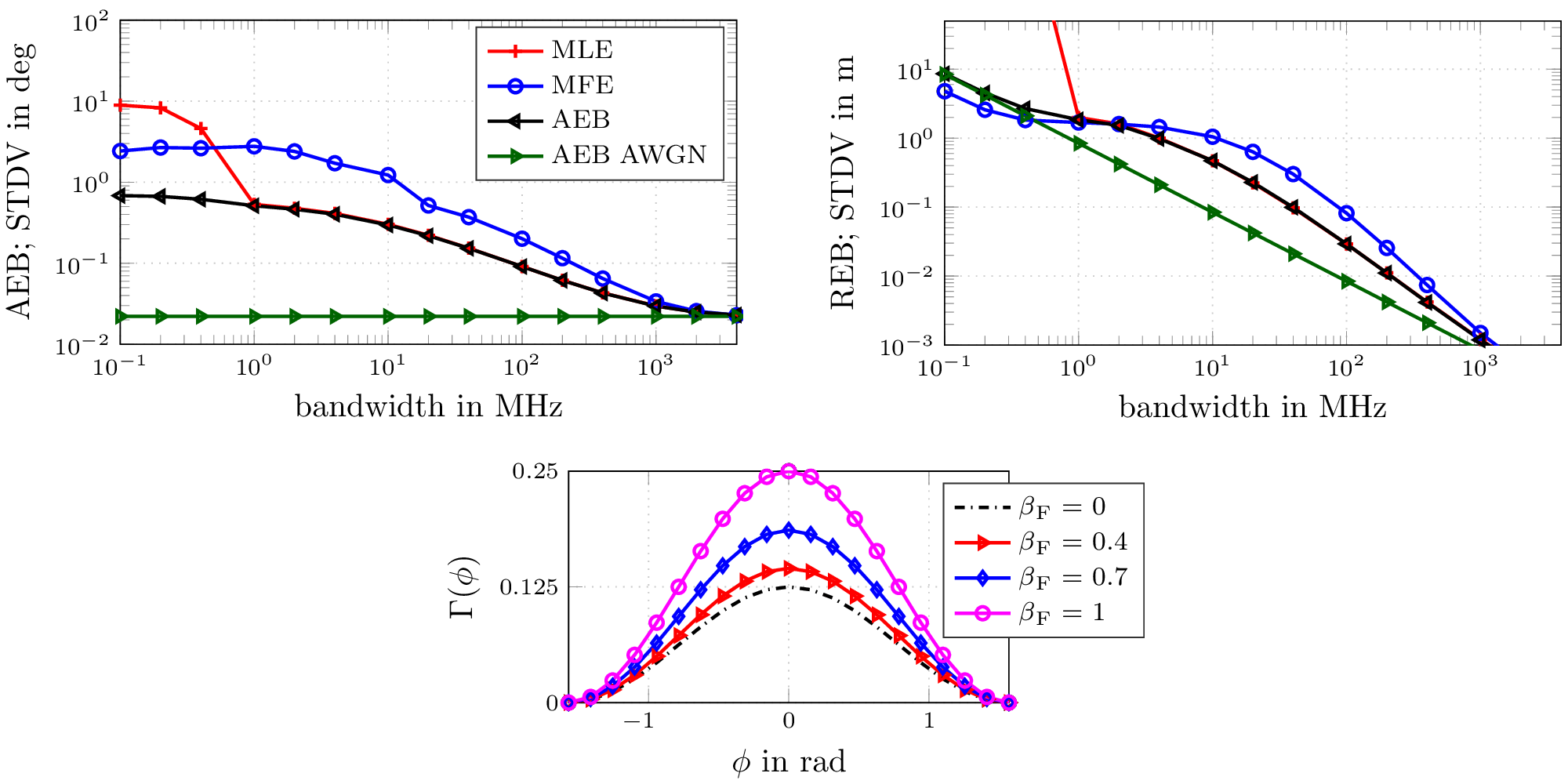

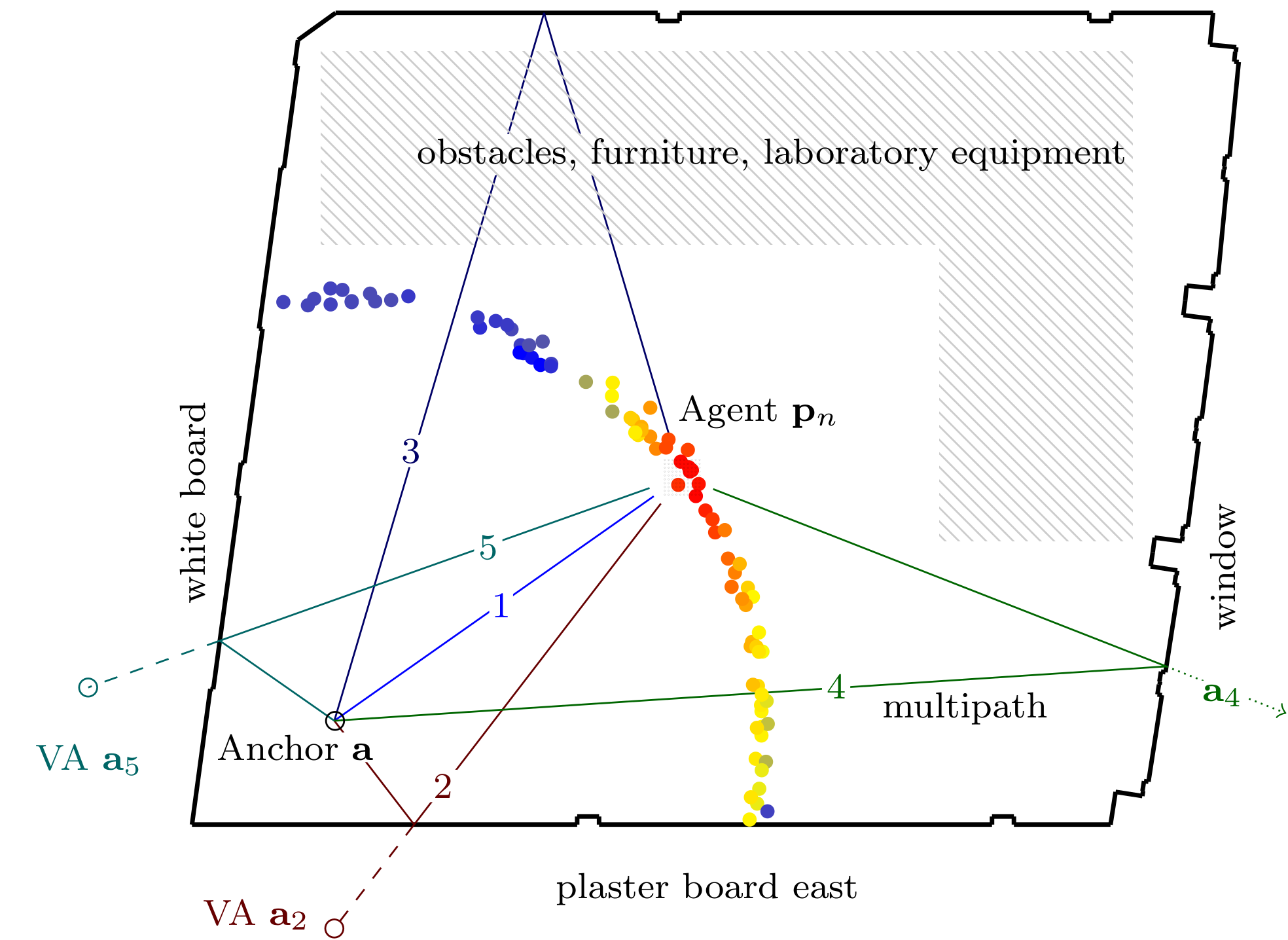

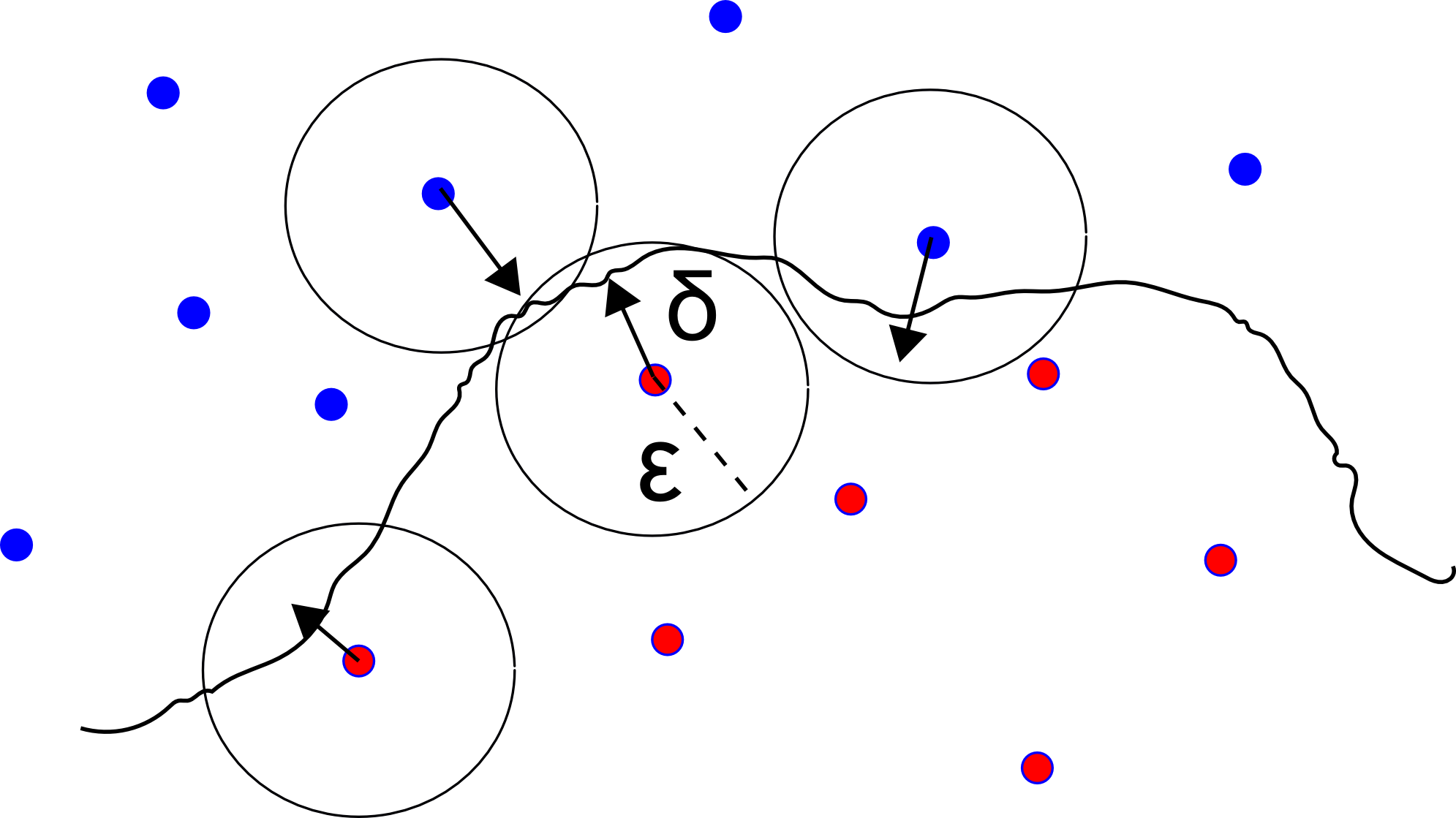

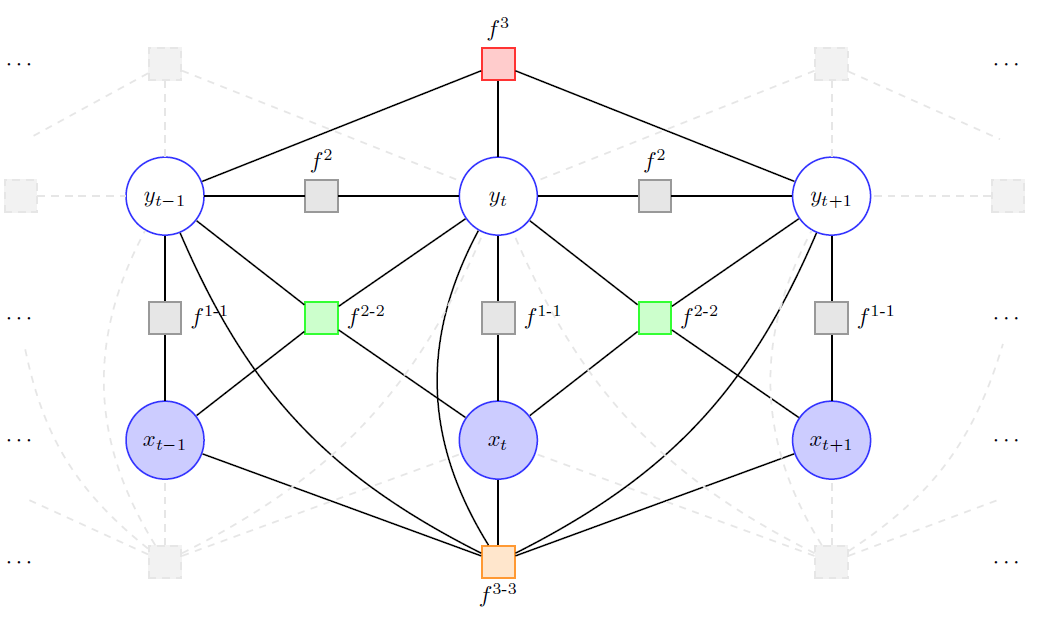

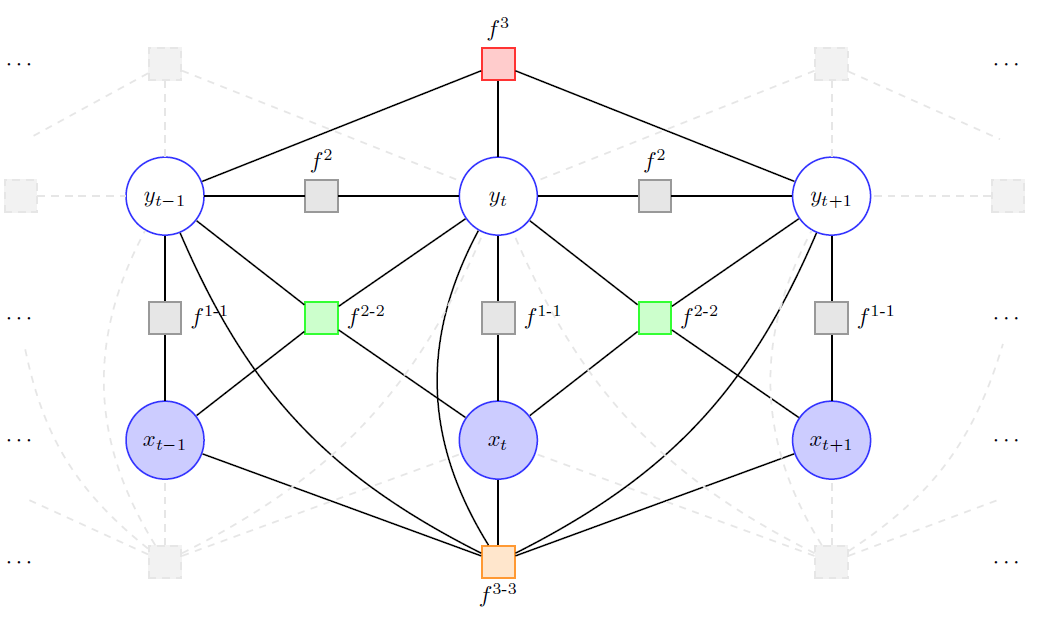

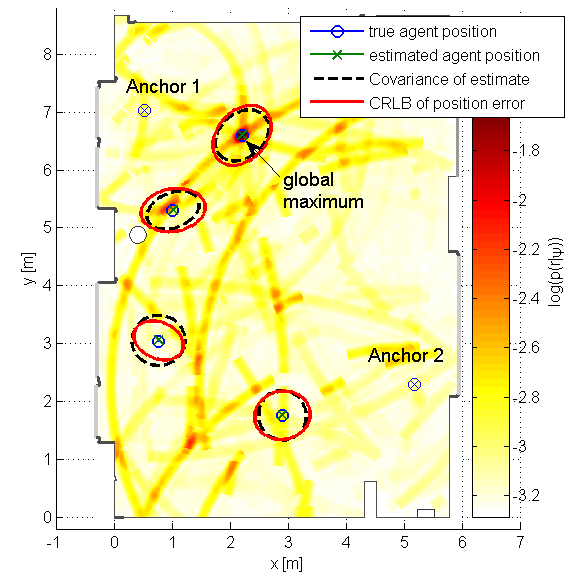

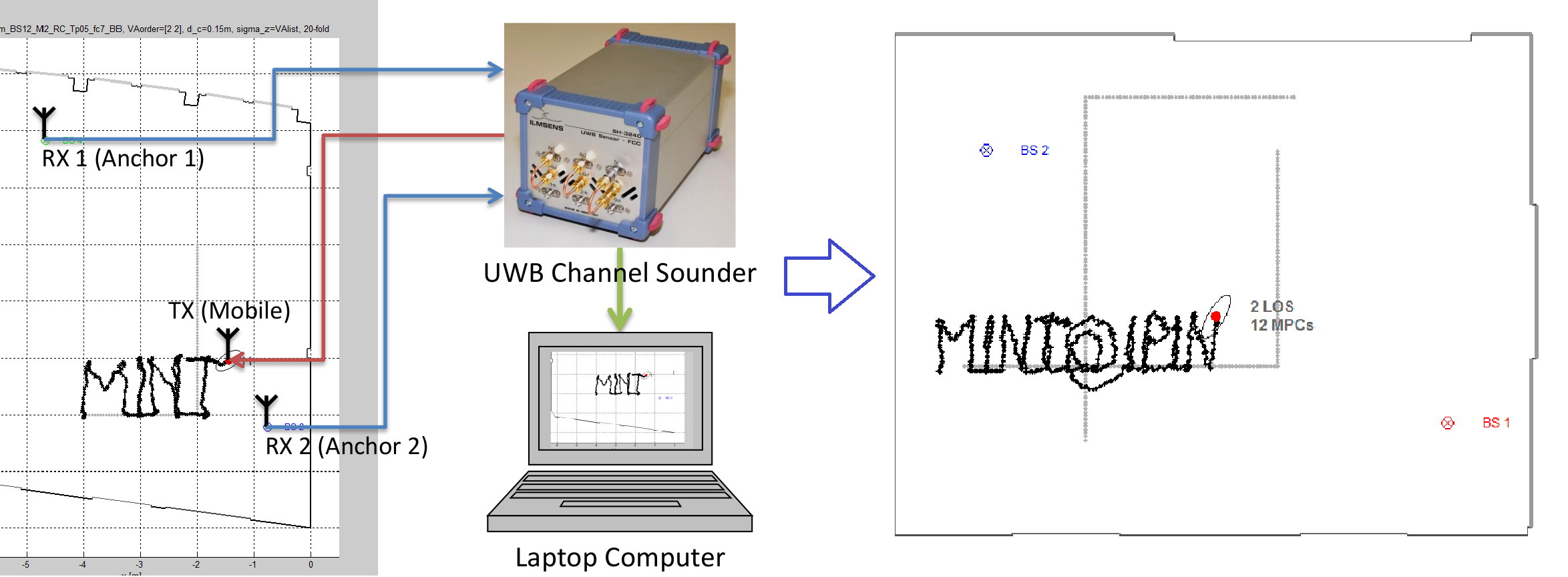

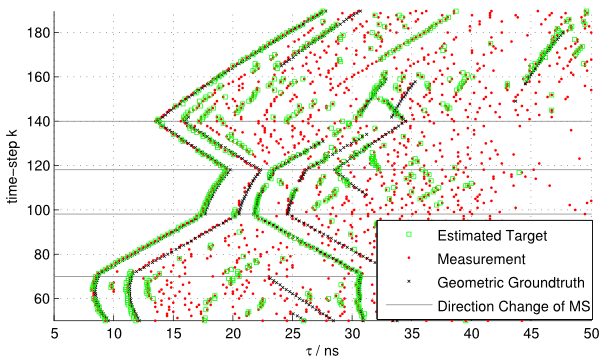

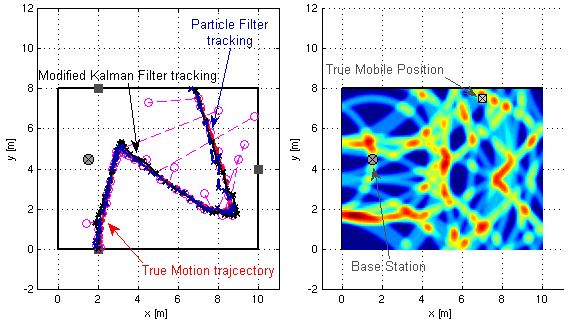

We present a factor graph formulation and particle-based sum-product algorithm for robust localization and tracking in multipath-prone environments. The proposed sequential algorithm jointly estimates the mobile agent’s position together with a time-varying number of multipath components (MPCs). The MPCs are represented by “delay biases” corresponding to the offset between the line-of-sight (LOS) component delay and the respective delays of all detectable MPCs. The delay biases of the MPCs capture the geometric features of the propagation environment with respect to the mobile agent. Therefore, they can provide the position-related information contained in the MPCs without explicitly building a map of the environment. We demonstrate that this position-related information enables the algorithm to provide high-accuracy position estimates even in fully obstructed line-of-sight (OLOS) situations. Using simulated and real measurements in different scenarios, we demonstrate that the proposed algorithm significantly outperforms state-of-the-art multipath-aided tracking algorithms and show that its performance consistently attains the posterior Cramér-Rao lower bound (P-CRLB). Furthermore, we demonstrate the implicit capability of the proposed method to identify unreliable measurements and, thus, to mitigate lost tracks.
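To make the notion of a delay bias concrete, here is a minimal 2-D sketch, assuming a single flat wall and the image (mirror) method for a single-bounce path. The function names and the geometry are illustrative assumptions, not taken from the article; the delay bias of the reflected MPC is simply its delay minus the LOS delay.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def mirror(point, wall_point, wall_normal):
    """Mirror a 2-D point across a line given by a point on it and its normal."""
    nx, ny = wall_normal
    norm = math.hypot(nx, ny)
    nx, ny = nx / norm, ny / norm
    # Signed distance from the wall to the point along the unit normal
    dist = (point[0] - wall_point[0]) * nx + (point[1] - wall_point[1]) * ny
    return (point[0] - 2 * dist * nx, point[1] - 2 * dist * ny)

def delay_bias(agent, anchor, wall_point, wall_normal):
    """Delay of the single-bounce MPC minus the LOS delay, in seconds."""
    los_delay = math.dist(agent, anchor) / C
    # Image method: the reflected path length equals the distance to the virtual anchor
    virtual_anchor = mirror(anchor, wall_point, wall_normal)
    mpc_delay = math.dist(agent, virtual_anchor) / C
    return mpc_delay - los_delay

# Hypothetical setup: agent and anchor 4 m apart, both 1 m above a wall along y = 0
bias = delay_bias((0.0, 1.0), (4.0, 1.0), (0.0, 0.0), (0.0, 1.0))
print(f"{bias * 1e9:.3f} ns")
```

The bias depends only on where the agent is relative to the anchor and the wall, which is why, in this toy picture, it carries position information without the wall ever being mapped explicitly.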

Read the full article.

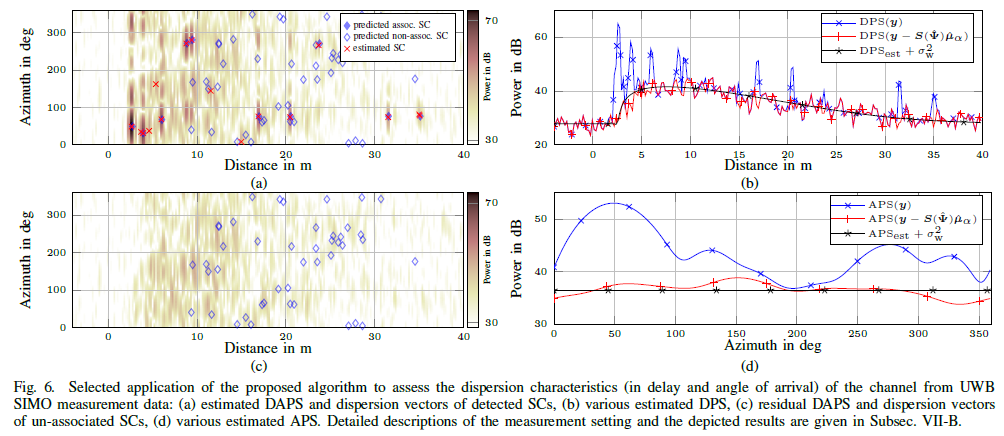

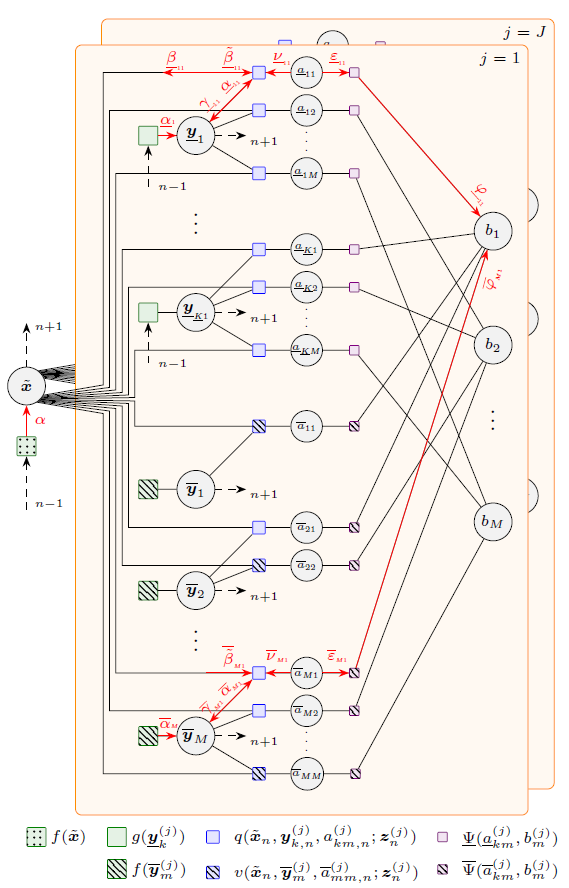

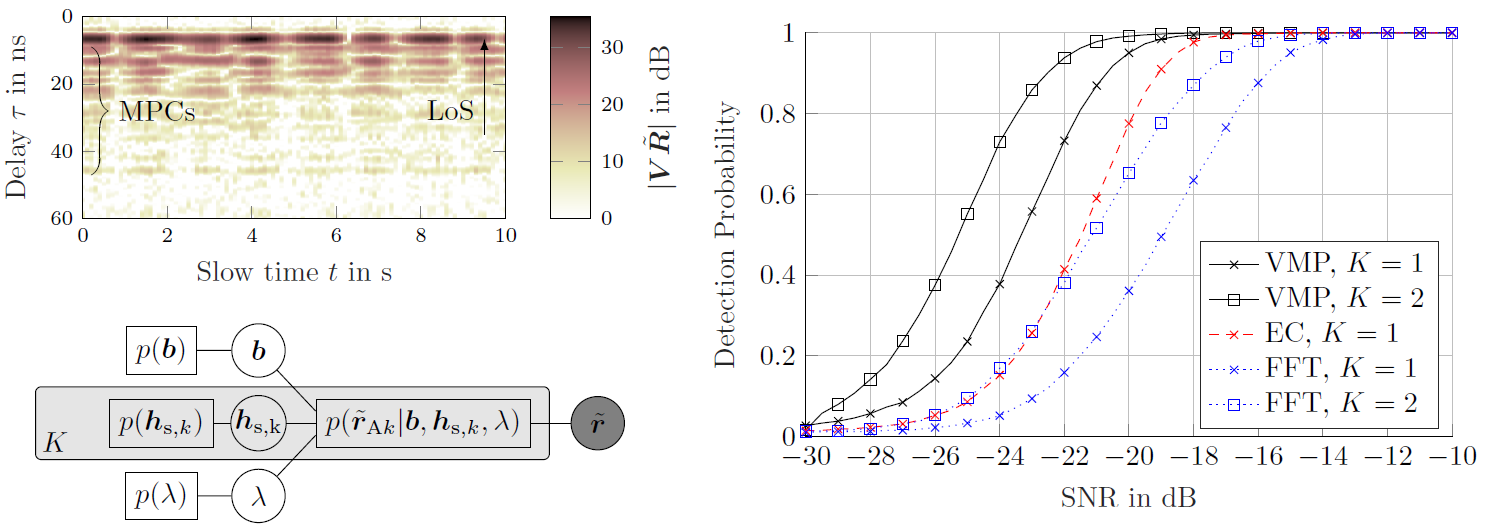

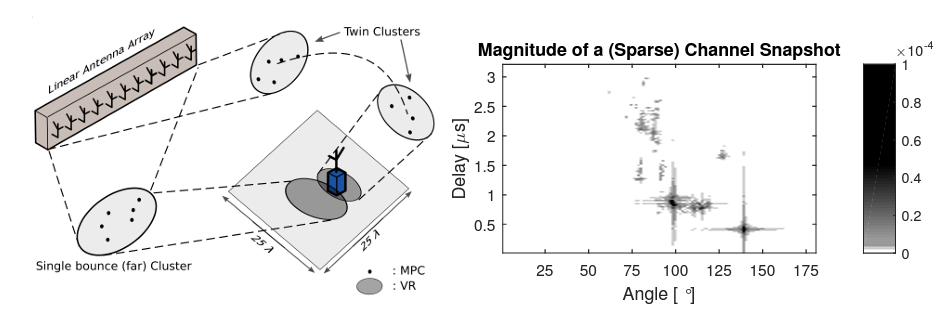

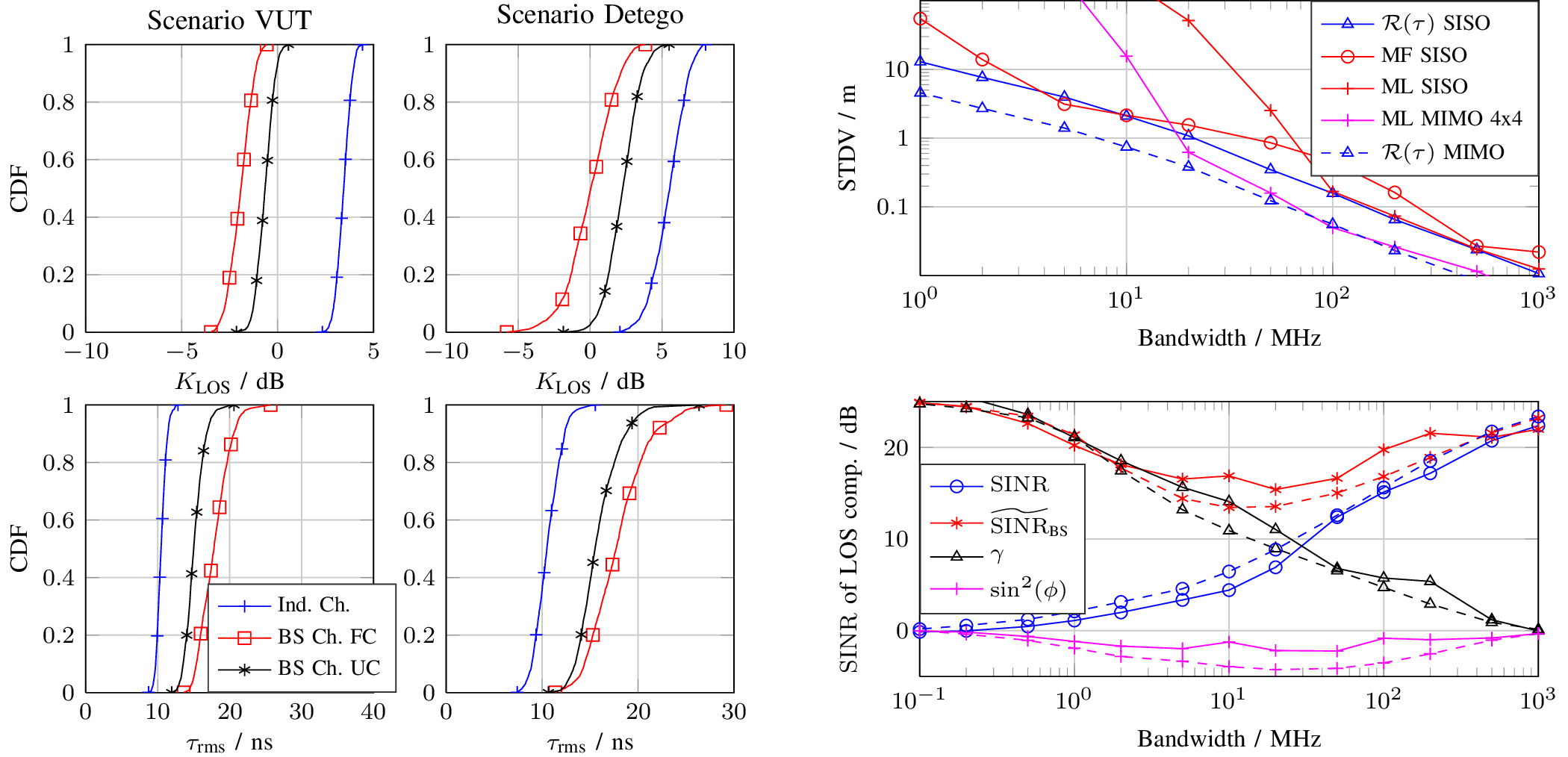

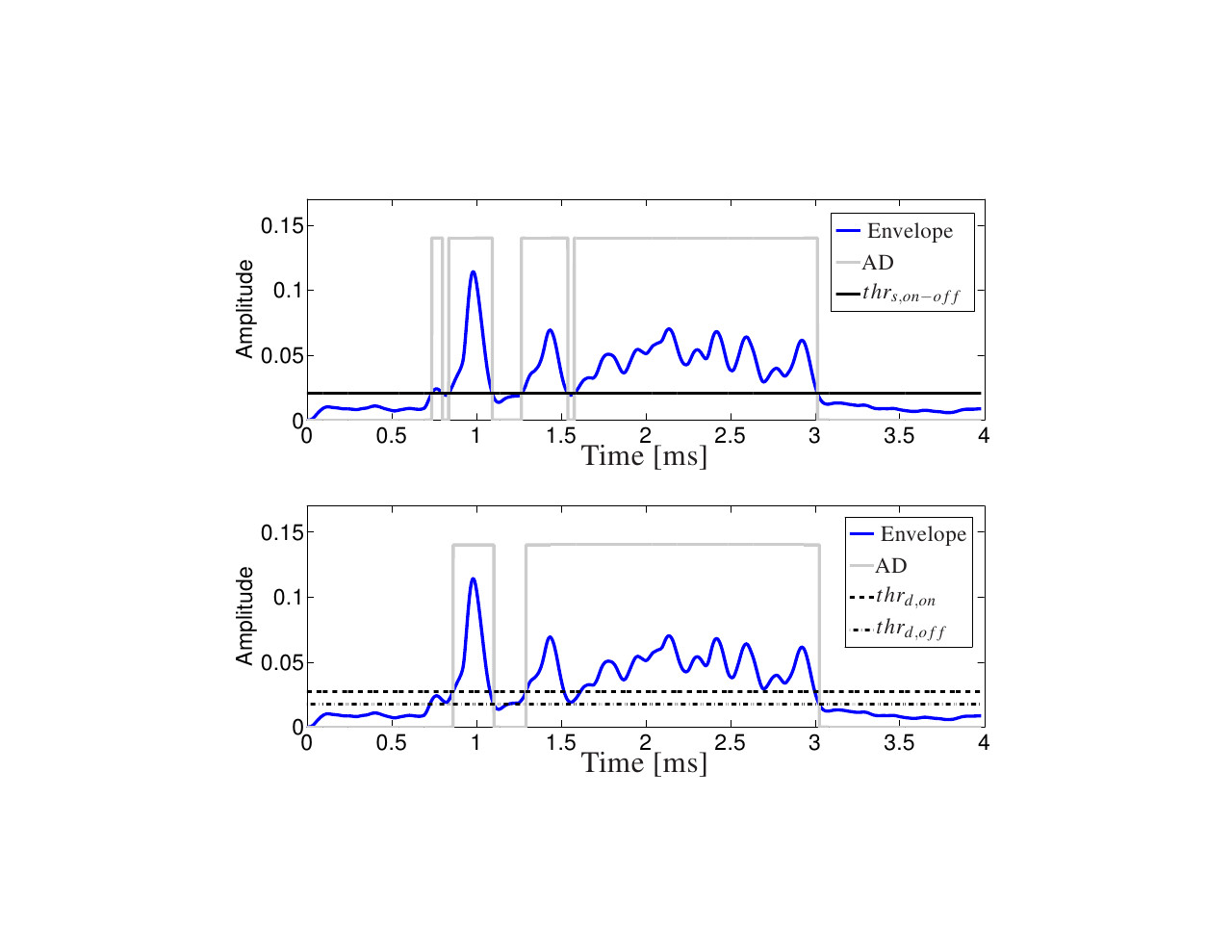

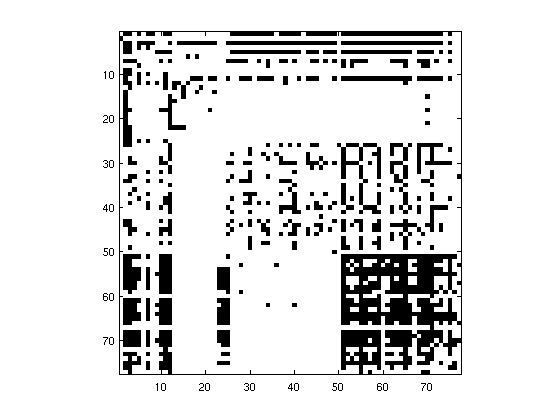

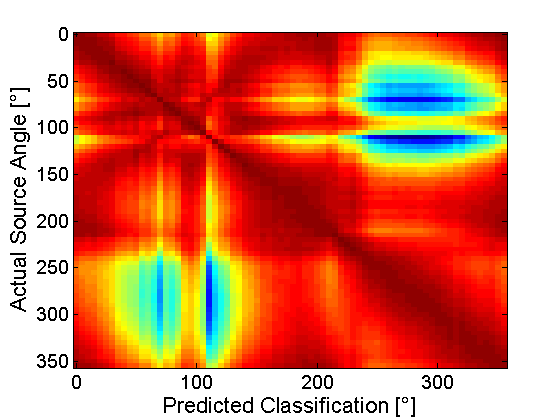

In this paper, we present an iterative algorithm that detects and estimates the specular components (SCs) and estimates the dense component (DC) of single-input multiple-output (SIMO) ultra-wideband (UWB) multipath channels. Specifically, the algorithm super-resolves the SCs in the delay–angle-of-arrival domain and estimates the parameters of a parametric model of the delay–angle power spectrum characterizing the DC. The channel noise is also estimated. In essence, the algorithm solves the problem of estimating spectral lines (the SCs) in colored noise (generated by the DC and the channel noise). Its design is inspired by the sparse Bayesian learning (SBL) framework. As a result, the iteration process contains a threshold condition that determines whether a candidate SC is retained or pruned. Relying on results from extreme-value analysis, the algorithm adapts this threshold to ensure a prescribed probability of detecting spurious SCs. Studies using synthetic and real channel measurement data demonstrate the virtues of the algorithm: it can still detect and accurately estimate SCs even when their separation in delay and angle is as small as half the Rayleigh resolution limit (RRL) of the equipment, and it is robust in the sense that it tends to return no more SCs than are actually present. Finally, the algorithm is shown to outperform a state-of-the-art super-resolution channel estimator in terms of robustness in estimating the amplitudes of specular components that are closely spaced in the dispersion domain.
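The idea of an extreme-value-based pruning threshold can be illustrated with a simplified sketch. It assumes white complex Gaussian noise evaluated on N independent delay–angle cells, which is much simpler than the article's colored-noise setting with a DC. Under that assumption each cell's power is exponential, and the maximum of N cells exceeds t with probability 1 − (1 − e^{−t/σ²})^N; solving for t gives a threshold with a prescribed probability of declaring at least one spurious SC.

```python
import math

def spurious_sc_threshold(noise_power, n_cells, p_false):
    """Power threshold t with P(max of n_cells noise-only powers > t) = p_false.

    Assumes each cell's power |w|^2 is exponential with mean noise_power
    (complex Gaussian noise) and that the cells are independent.
    """
    # Per-cell exceedance probability q solves 1 - (1 - q)^N = p_false
    q = 1.0 - (1.0 - p_false) ** (1.0 / n_cells)
    # P(|w|^2 > t) = exp(-t / noise_power) = q
    return -noise_power * math.log(q)

t = spurious_sc_threshold(noise_power=1.0, n_cells=1000, p_false=0.01)
print(f"threshold = {t:.2f} x noise power")
```

The threshold grows with the number of cells searched, which is the qualitative effect an extreme-value correction accounts for: searching a finer or larger delay–angle grid must be paid for with a higher bar for accepting a candidate.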

Read the full article.

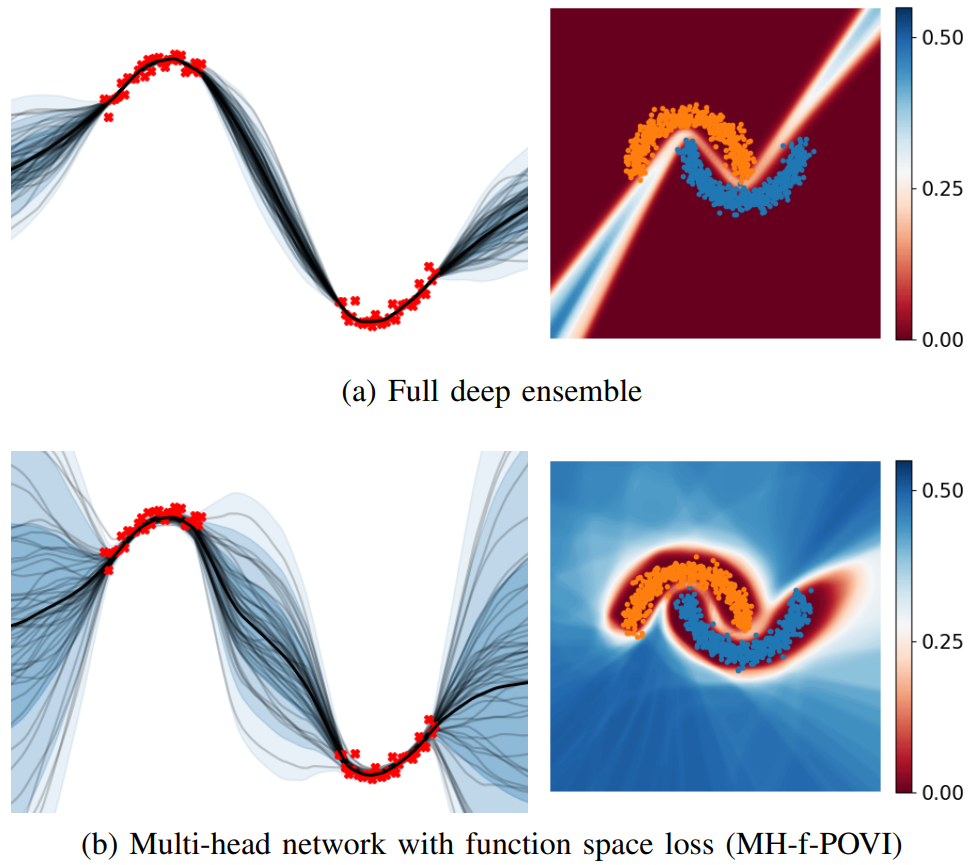

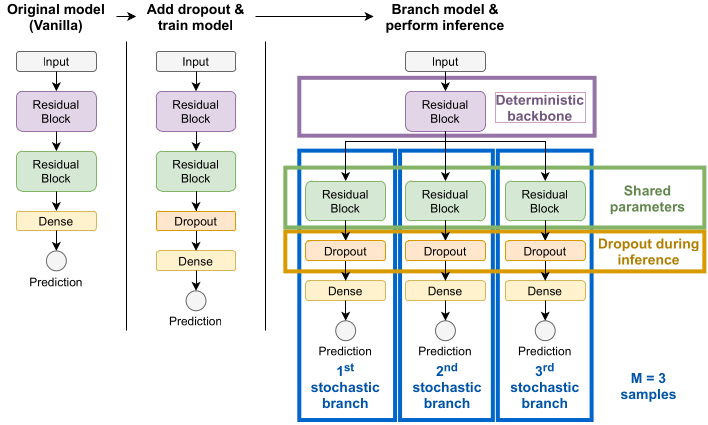

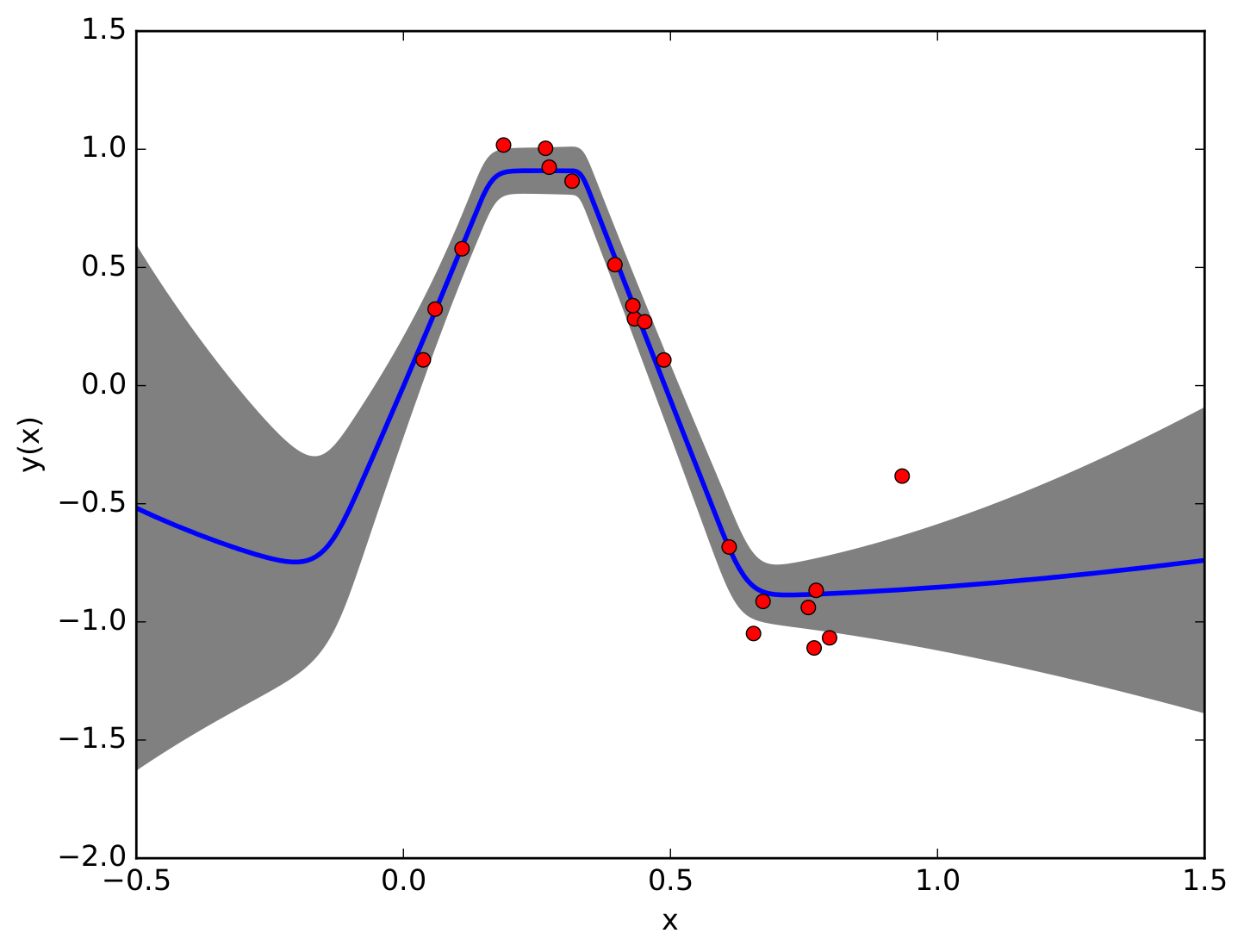

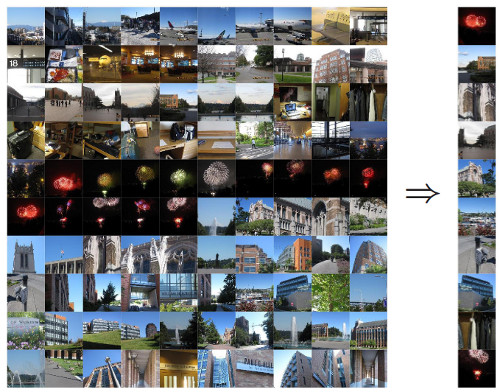

Deep ensembles have shown remarkable empirical success in quantifying uncertainty, albeit at considerable computational cost and memory footprint. Meanwhile, deterministic single-network uncertainty methods have proven to be computationally efficient alternatives, providing uncertainty estimates based on distributions of latent representations. While these methods succeed at out-of-domain detection, they are poorly calibrated under distribution shifts. In this work, we propose a method that provides calibrated uncertainty by utilizing particle-based variational inference in function space. Rather than using full deep ensembles to represent particles in function space, we propose a single multi-headed neural network that is regularized to preserve bi-Lipschitz conditions. Sharing a joint latent representation reduces computational requirements, while prediction diversity is maintained by the multiple heads. We achieve competitive results in disentangling aleatoric and epistemic uncertainty for active learning, detecting out-of-domain data, and providing calibrated uncertainty estimates under distribution shifts while significantly reducing compute and memory requirements.
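A common way to split predictive uncertainty into aleatoric and epistemic parts, given several predictions that act as particles (here, the outputs of the heads), is the entropy decomposition: the entropy of the averaged prediction equals the mean per-head entropy (aleatoric part) plus the mutual information between prediction and head (epistemic part). The sketch below assumes each head already returns a class-probability vector; it illustrates the decomposition only, not the article's bi-Lipschitz training or its function-space inference.

```python
import math

def entropy(p):
    """Shannon entropy in nats of a discrete distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def decompose_uncertainty(head_probs):
    """Split uncertainty for one input from per-head class probabilities."""
    n_heads = len(head_probs)
    n_classes = len(head_probs[0])
    mean_p = [sum(p[c] for p in head_probs) / n_heads for c in range(n_classes)]
    total = entropy(mean_p)                                    # predictive entropy
    aleatoric = sum(entropy(p) for p in head_probs) / n_heads  # expected entropy
    epistemic = total - aleatoric                              # mutual information
    return total, aleatoric, epistemic

# Heads agree on an ambiguous input: uncertainty is mostly aleatoric
print(decompose_uncertainty([[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]))
# Heads confidently disagree: uncertainty is mostly epistemic
print(decompose_uncertainty([[0.99, 0.01], [0.01, 0.99], [0.99, 0.01]]))
```

This is why prediction diversity across heads matters: if all heads collapsed to the same output, the epistemic term would always be zero.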

Read the full article.

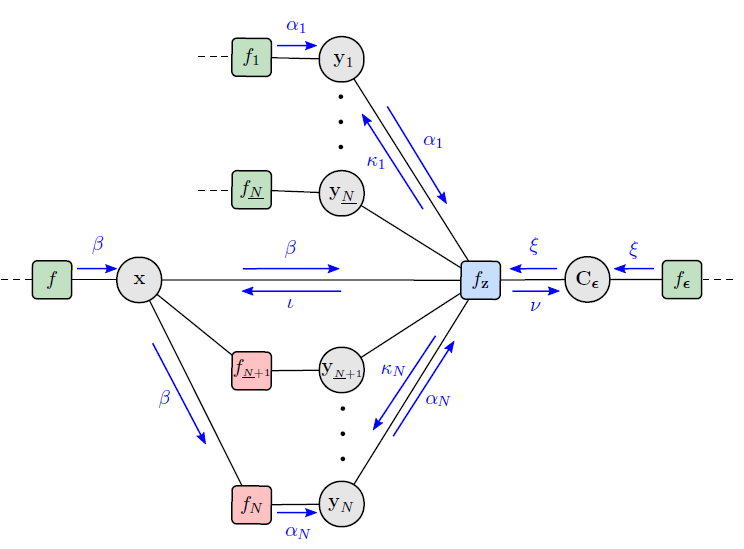

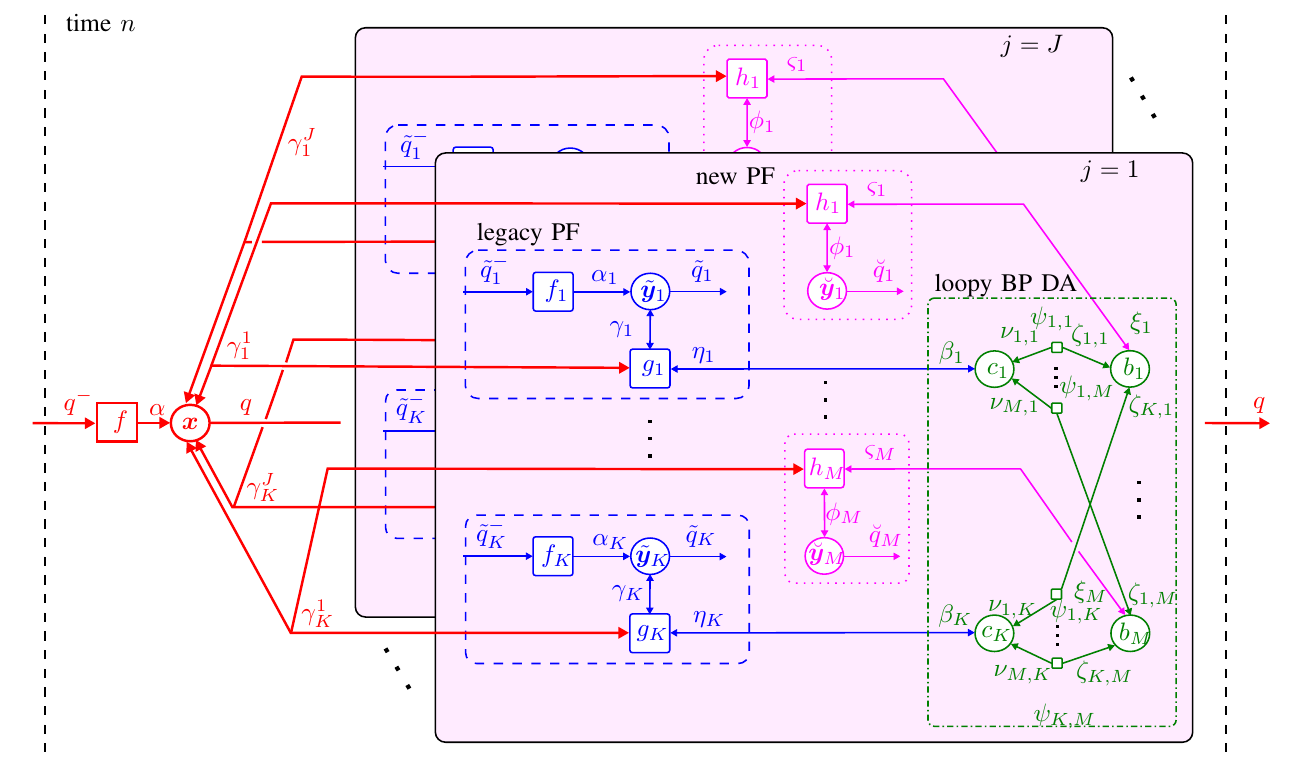

In this work, we develop a multipath-based simultaneous localization and mapping (SLAM) method that can directly be applied to received radio signals. In existing multipath-based SLAM approaches, a channel estimator is used as a preprocessing stage that reduces data flow and computational complexity by extracting features related to multipath components (MPCs). We aim to avoid any preprocessing stage that may lead to a loss of relevant information. The presented method relies on a new statistical model for the data generation process of the received radio signal that can be represented by a factor graph. This factor graph is the starting point for the development of an efficient belief propagation (BP) method for multipath-based SLAM that directly uses received radio signals as measurements. Simulation results in a realistic scenario with a single-input single-output (SISO) channel demonstrate that the proposed direct method for radio-based SLAM outperforms state-of-the-art methods that rely on a channel estimator.

Read the full article.

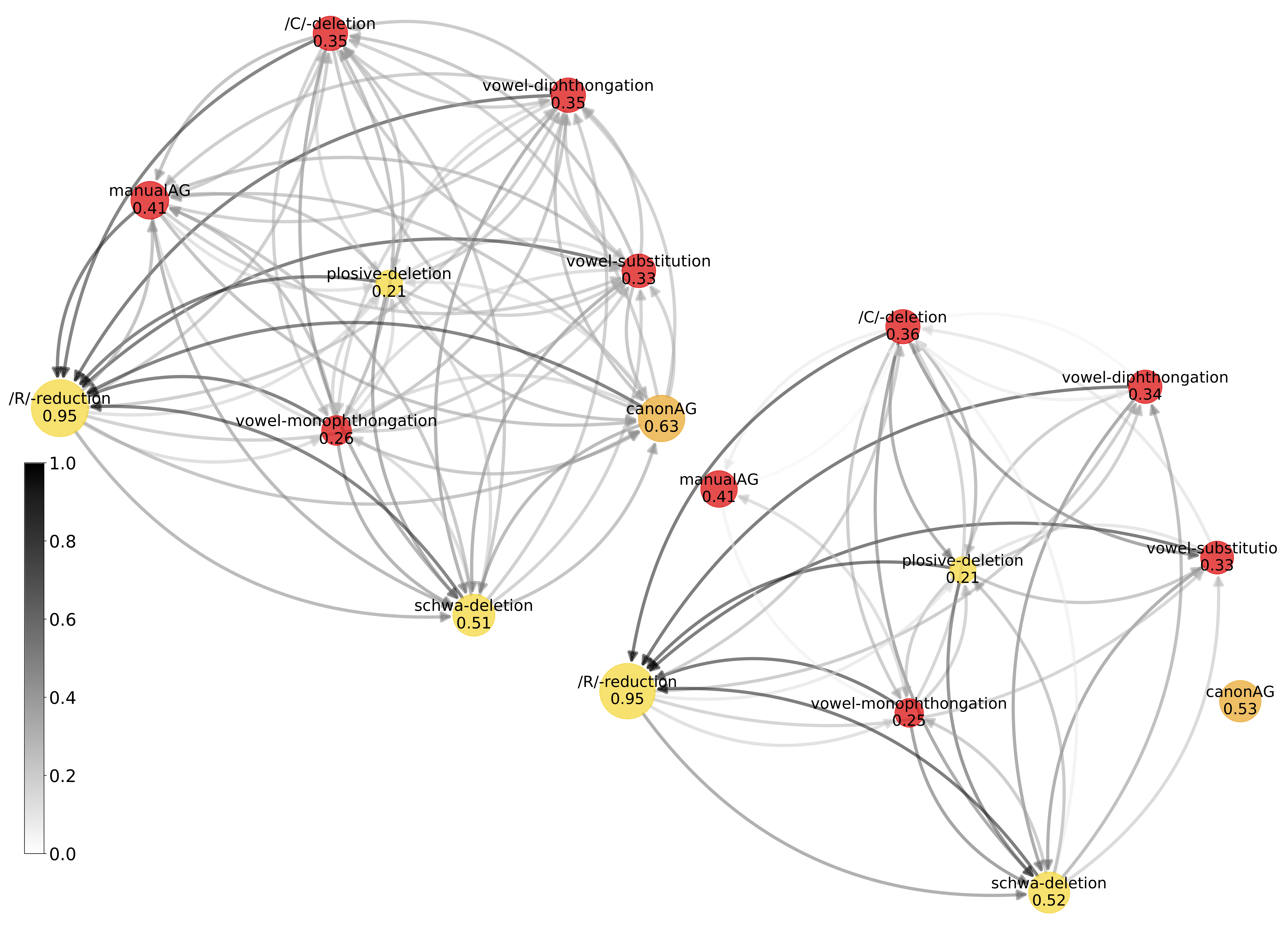

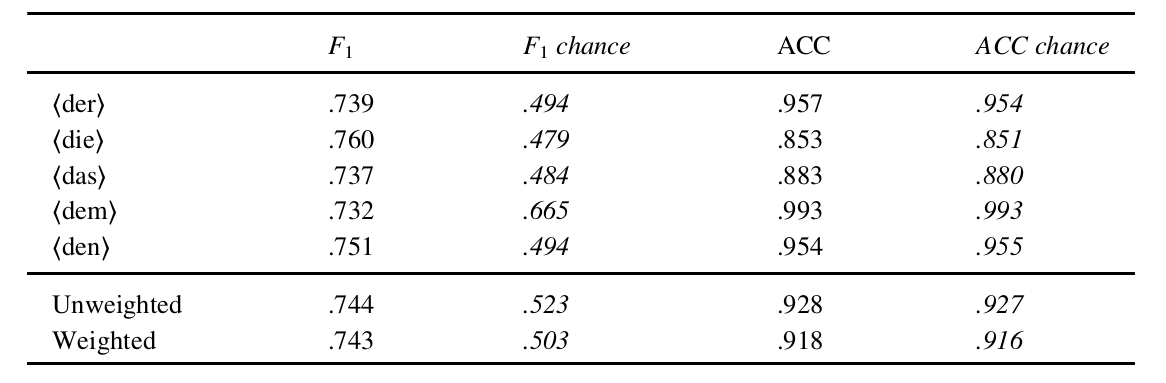

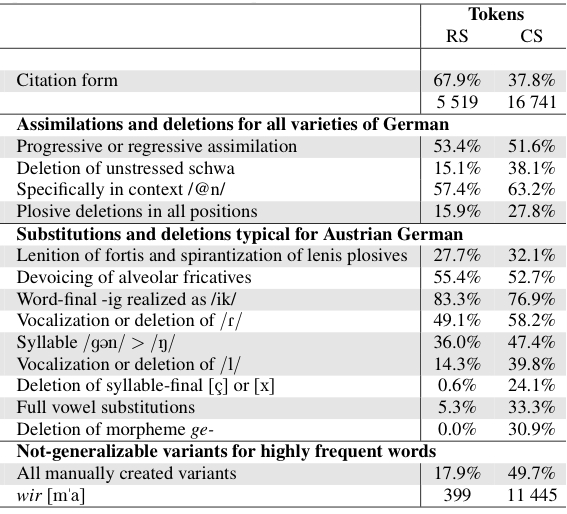

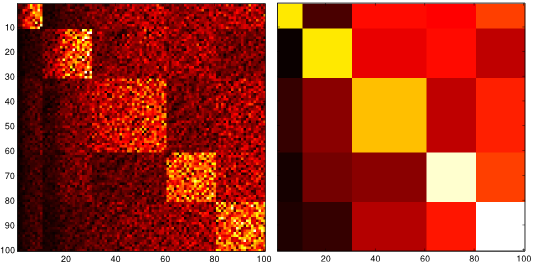

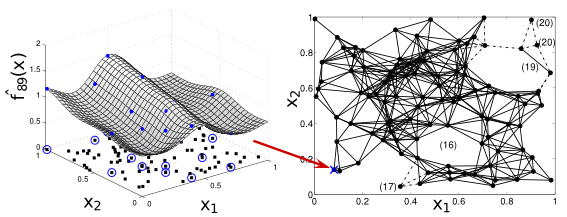

With the development of techniques based on automatic speech recognition for creating phonetic annotations of large speech corpora, there has been growing interest in investigating the frequencies of occurrence of phonological and reduction processes. Since most studies have analyzed these processes separately, they provide no insight into how the processes co-occur. This paper introduces graph-theoretic methods for the analysis of pronunciation variation in GRASS, a large corpus of Austrian German conversational speech. More specifically, we investigate how reduction processes that are typical of spontaneous German in general (figure: yellow) co-occur with phonological processes typical of the Austrian German variety (figure: red). While our concrete findings are of special interest to researchers investigating variation in German, the approach opens new possibilities for analyzing pronunciation variation in large corpora across speakers and speaking styles in any language.
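A minimal sketch of the graph-based view, assuming each word token has already been annotated with the set of processes it exhibits: processes become nodes, and each token that shows two processes adds weight to the edge between them. The process labels below are hypothetical placeholders, not the categories used in GRASS.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_graph(token_processes):
    """Return node counts and weighted edges from per-token process sets."""
    nodes = Counter()
    edges = Counter()
    for processes in token_processes:
        unique = sorted(set(processes))
        nodes.update(unique)
        # Every unordered pair of processes in the same token is one co-occurrence
        edges.update(combinations(unique, 2))
    return nodes, edges

tokens = [
    {"schwa_deletion", "l_vocalization"},  # hypothetical labels
    {"schwa_deletion"},
    {"schwa_deletion", "l_vocalization", "t_lenition"},
]
nodes, edges = cooccurrence_graph(tokens)
print(edges.most_common(1))
```

Edge weights can then be normalized by node counts or fed into standard graph measures (degree, centrality, communities) to see which processes cluster together.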

Read the full article.

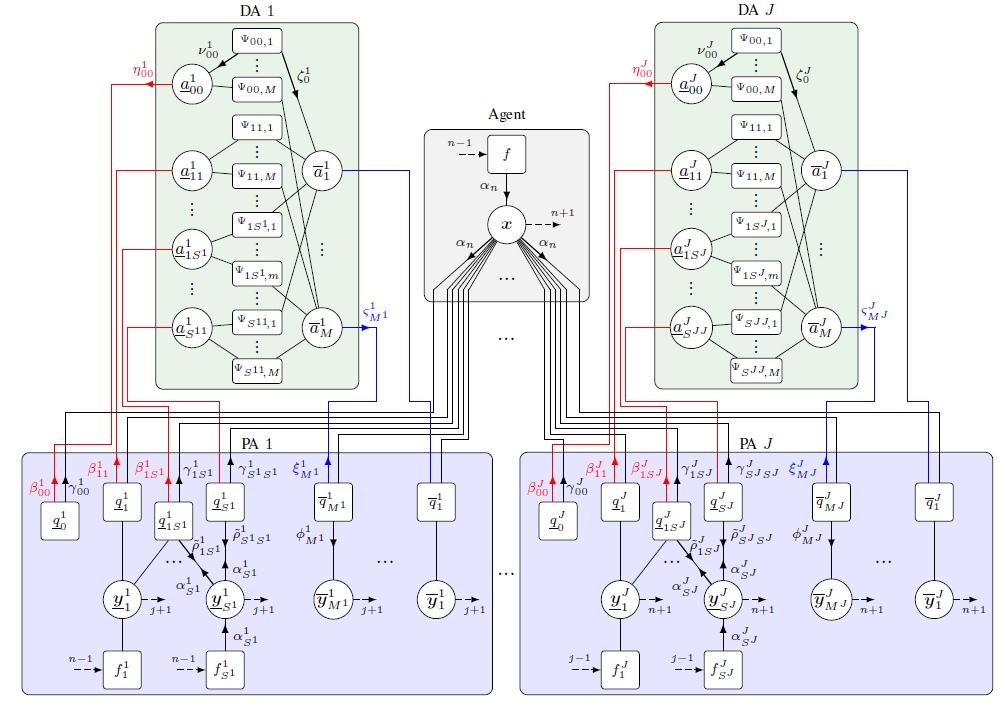

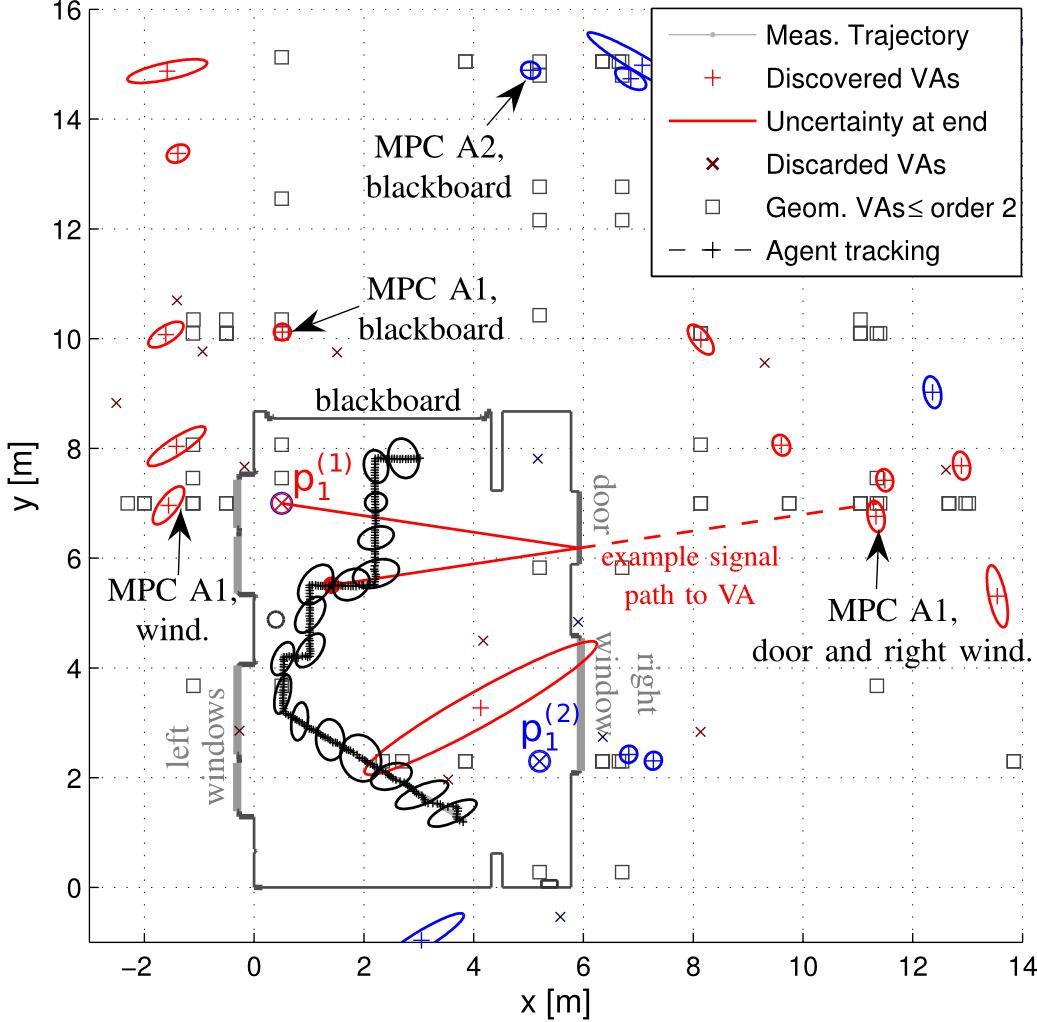

Multipath-based simultaneous localization and mapping (SLAM) is a promising approach to obtain position information of transmitters and receivers as well as information about the propagation environment in future mobile communication systems. Usually, specular reflections of radio signals at flat surfaces are modeled by virtual anchors (VAs) that are mirror images of the physical anchors (PAs). In existing methods for multipath-based SLAM, each VA is assumed to generate only a single measurement. However, due to imperfections of the measurement equipment, such as non-calibrated antennas, or to model mismatch, such as the roughness of reflective surfaces, multiple multipath components (MPCs) may be associated with a single VA. In this paper, we introduce a Bayesian particle-based sum-product algorithm (SPA) for multipath-based SLAM that can cope with multiple measurements being associated with a single VA. Furthermore, we introduce a novel statistical measurement model that is closely related to the radio signal. It introduces additional dispersion parameters into the likelihood function to capture additional MPC-related measurements. Based on numerical simulations, we demonstrate that the proposed SLAM method can robustly fuse multiple measurements per VA.

Read the full article.

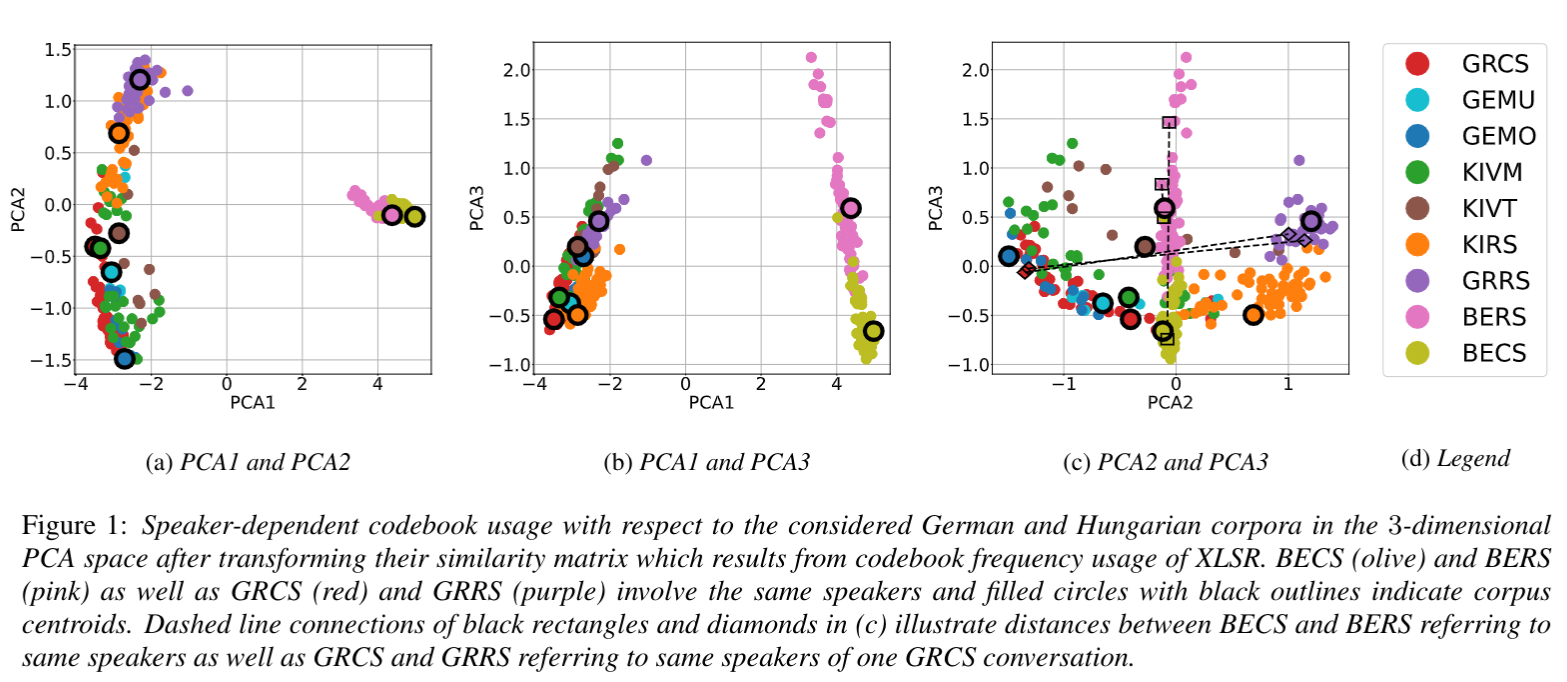

Automatic speech recognition systems based on self-supervised learning yield excellent performance for read speech, but not for conversational speech. This work contributes insights into how corpora from different languages and speaking styles are encoded in shared discrete speech representations (based on wav2vec2 XLSR). We analyze codebook entries of data from two languages from different language families (i.e., German and Hungarian), of data from different varieties of the same language (i.e., German and Austrian German), and of data from different speaking styles (read and conversational speech). We find that, as expected, the two languages are clearly separable. With respect to speaking style, conversational Austrian German has the highest similarity with a corpus of similar spontaneity from a different German variety, and speakers differ more among themselves when using different speaking styles than from speakers of a different region when using the same speaking style.

Read the full article.

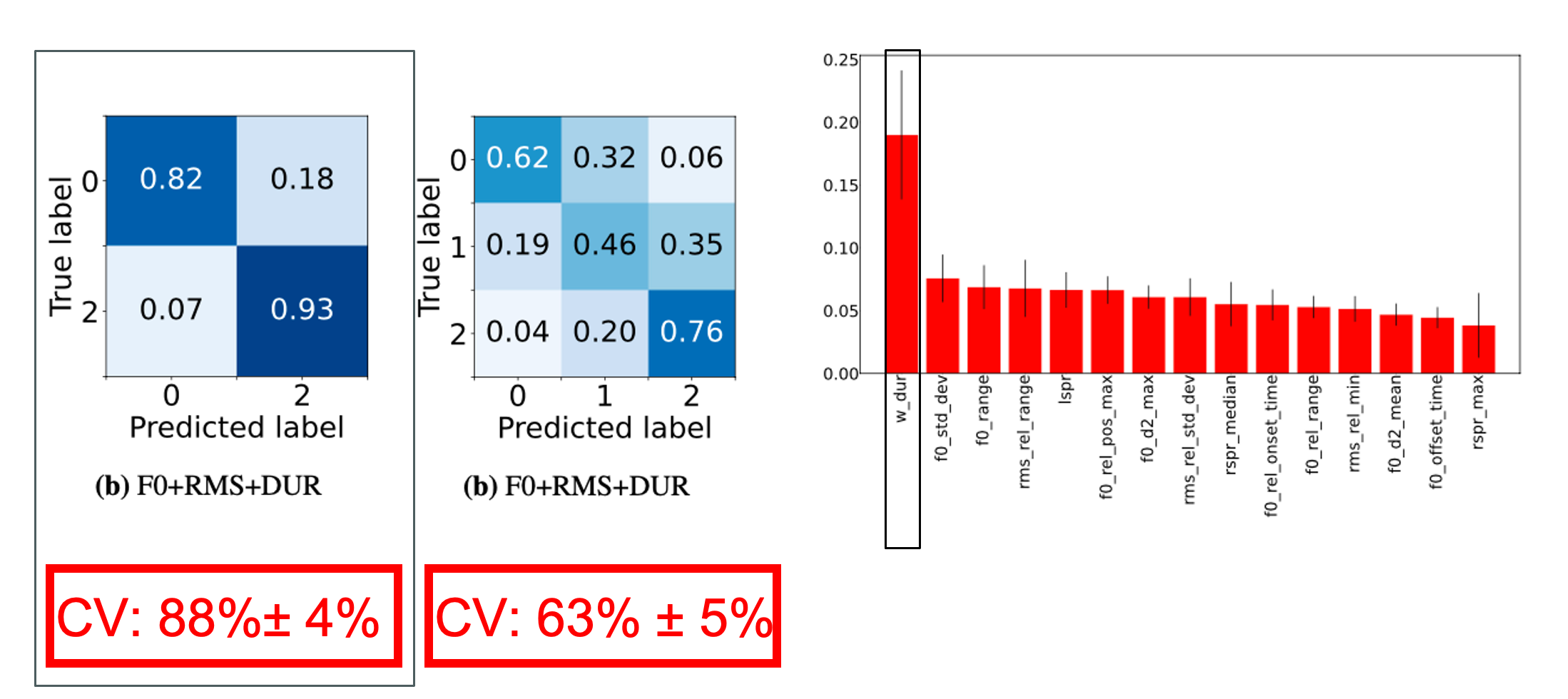

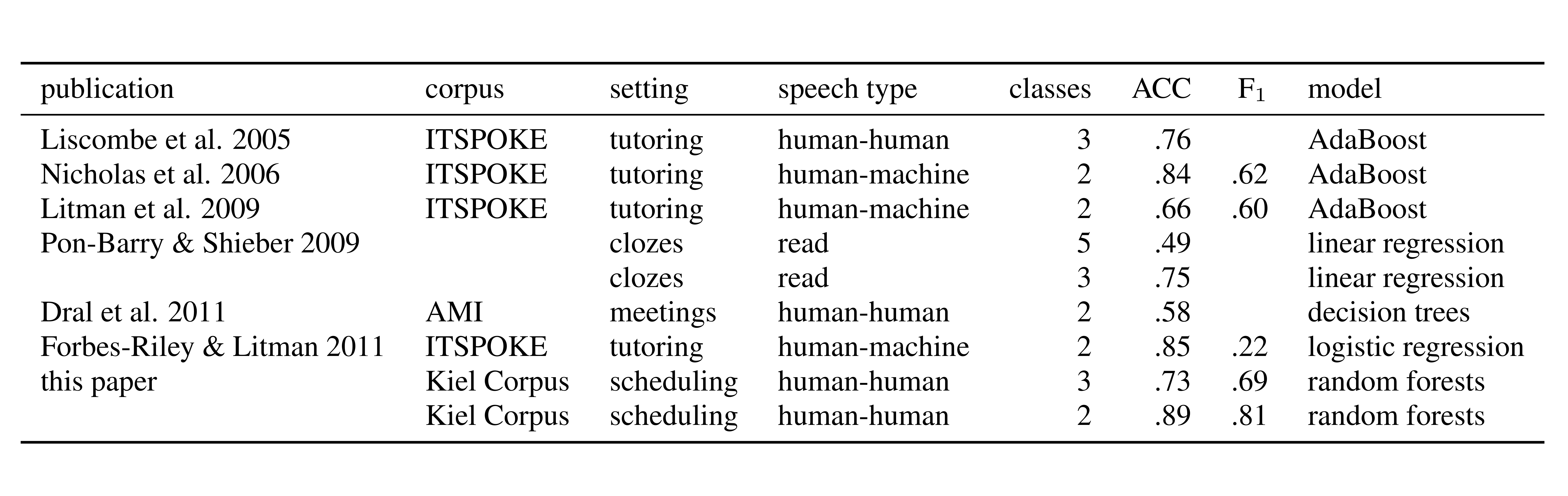

Left: Confusion matrices from experiments with the 15 best features (F0+RMS+DUR). Right: Random forest feature importances for the 3-class problem with F0, RMS and DUR.

Read the full article.

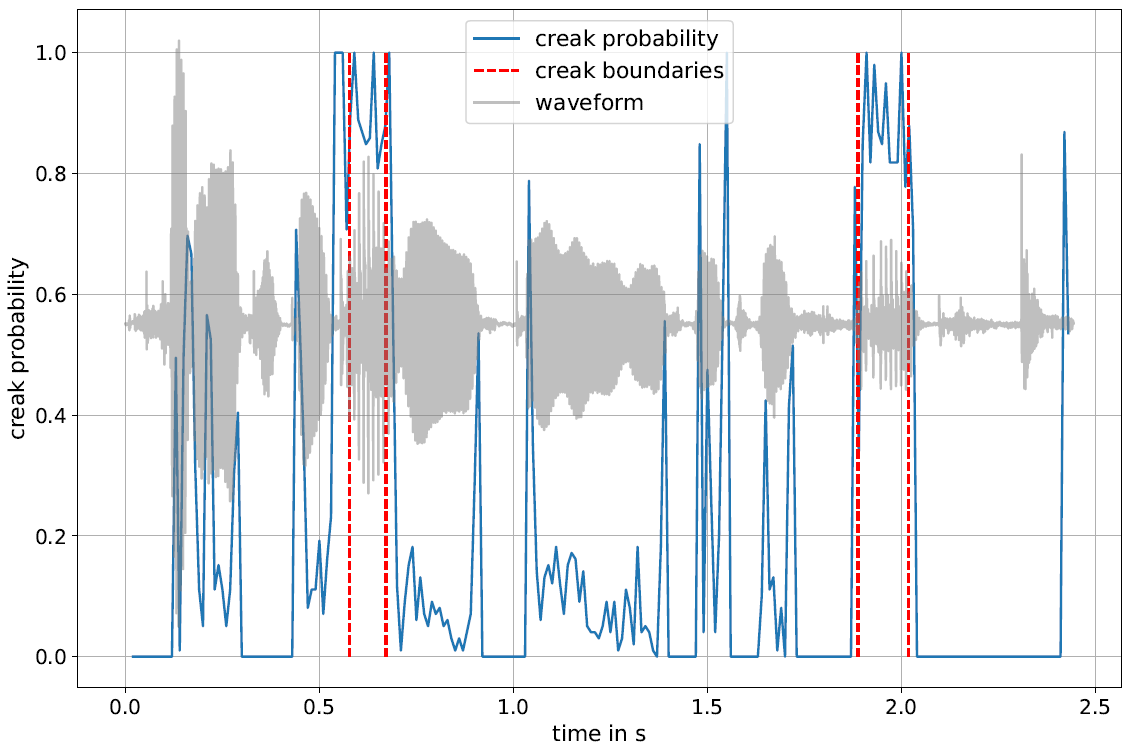

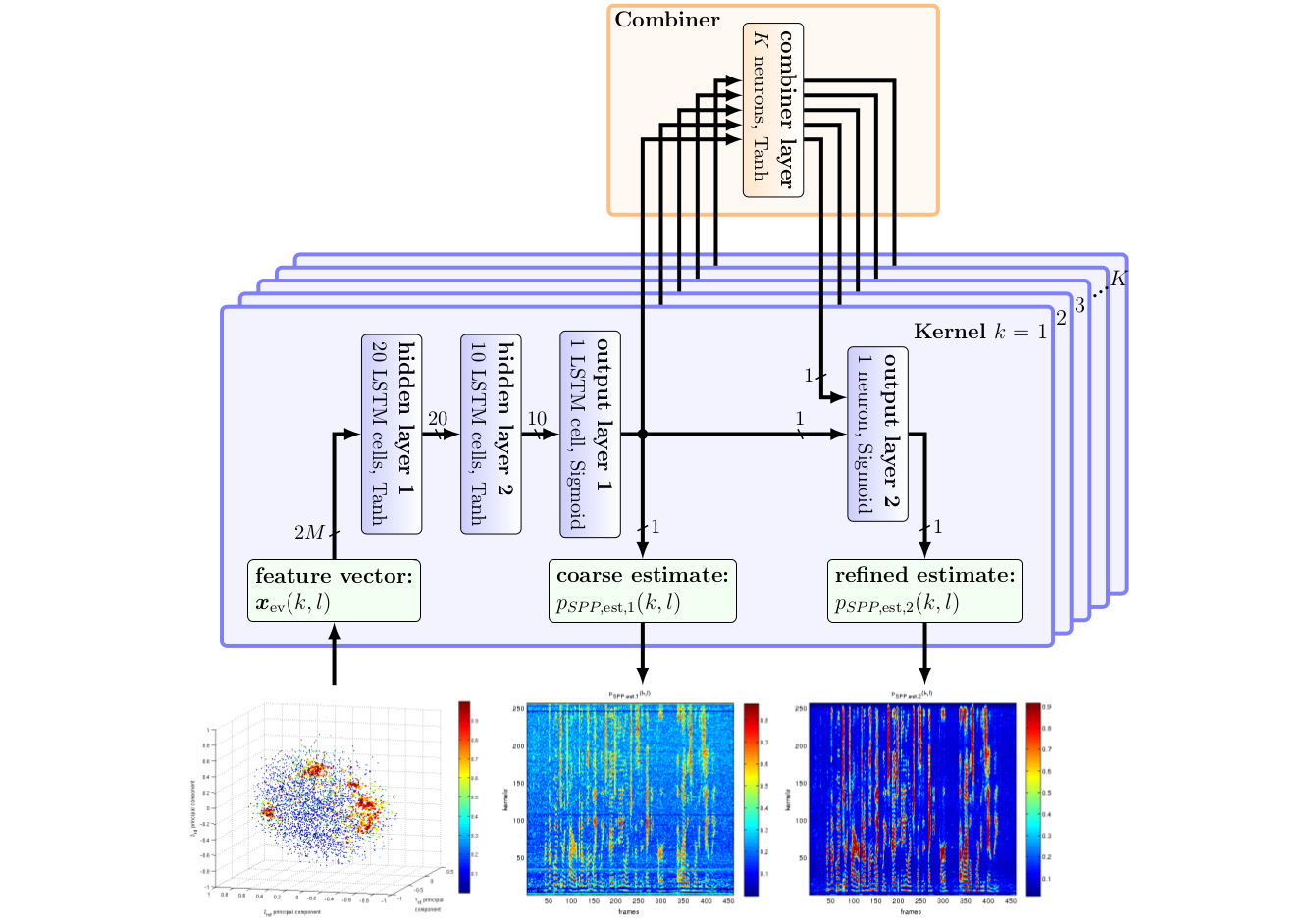

The annotation of creaky voice is relevant for various linguistic topics, from phonological analyses to the investigation of turn-taking, but manual annotation is a time-consuming process. In this paper, we present creapy, a Python-based tool to detect creaky intervals in speech signals. creapy does not require prior phonetic segmentation and supports the export of Praat TextGrid files, allowing for manual revision of the automatically labelled intervals. creapy was developed and tested using Austrian German conversational speech. It was optimised for recall to facilitate a semi-automatic annotation process, and it achieved a better performance for men’s (recall: .79) than for women’s voices (recall: .60).

Read the full article.

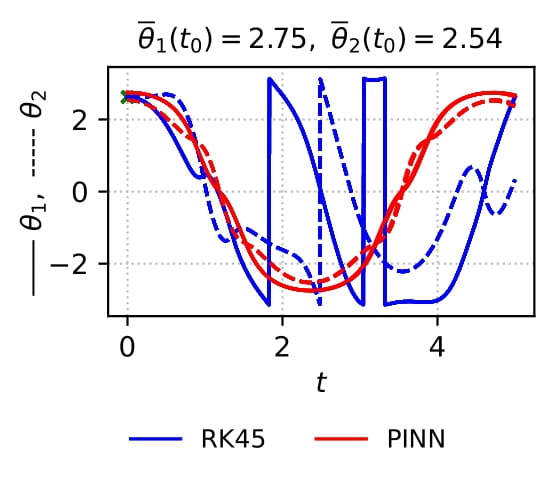

Physics-informed neural networks (PINNs) are a deep learning approach to solving differential equations given only information about the initial and boundary conditions. PINNs are easy to implement and have many desirable properties, such as being mesh-free. Unfortunately, training PINNs has been shown to be far from straightforward: convergence problems often arise when simulating dynamical systems with high-frequency components or with chaotic or turbulent behavior. In this work, we focus on understanding the underlying reasons for the difficulties in training PINNs by performing experiments on the double pendulum. Our results show that PINNs are not sensitive to perturbations in the initial condition. Instead, the PINN optimization consistently converges to physically correct solutions that only marginally violate the initial condition but diverge significantly from the desired solution due to the chaotic nature of the system. We hypothesize that PINNs “cheat” by shifting the initial conditions to values that correspond to physically correct solutions that are easier to learn. Initial experiments suggest that domain decomposition, combined with an appropriate loss weighting scheme, mitigates this effect and allows convergence to the desired solution.

Read the full article.

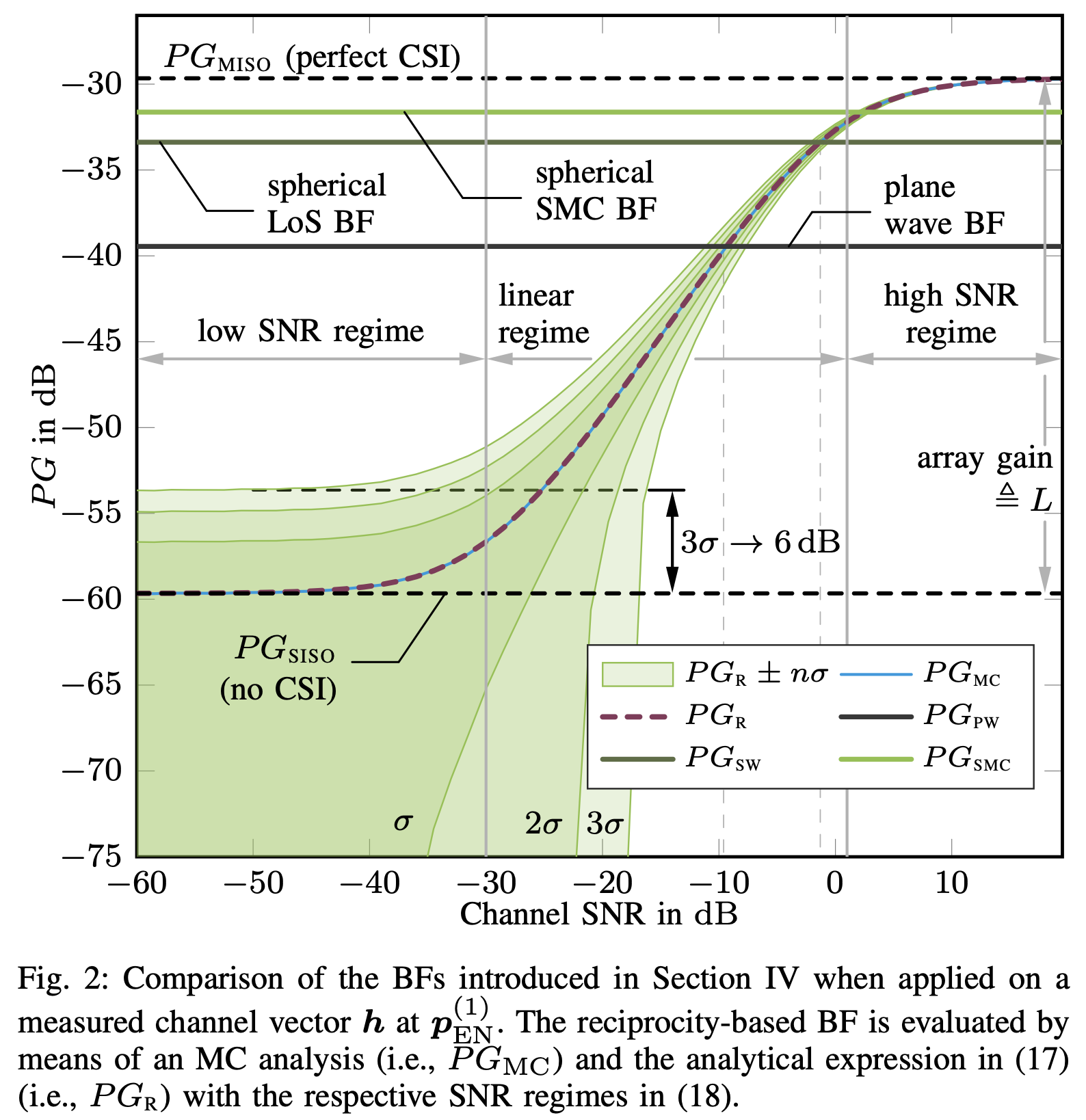

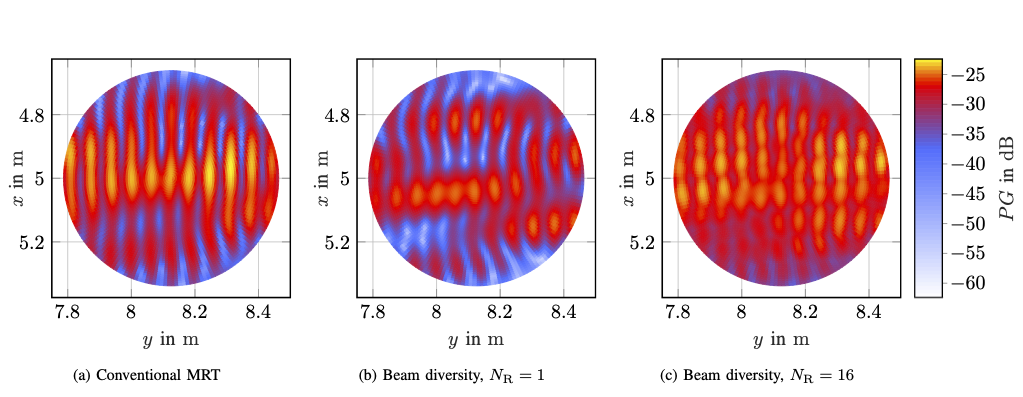

Massive antenna arrays form physically large apertures with a beam-focusing capability, leading to outstanding wireless power transfer (WPT) efficiency paired with low radiation levels outside the focusing region. However, leveraging these features requires accurate knowledge of the multipath propagation channel and overcoming the (Rayleigh) fading present in typical application scenarios. For this purpose, reciprocity-based beamforming is an optimal solution that estimates the actual channel gains from pilot transmissions on the uplink. This solution, however, is unsuitable for passive backscatter nodes, which cannot send any pilots in the initial access phase. To address this initial access problem, we use measured channel data from an extremely large-scale MIMO (XL-MIMO) testbed to compare geometry-based planar-wavefront and spherical-wavefront beamformers with a reciprocity-based beamformer. We also show that specular multipath components (SMCs) can be predicted based only on geometric environment information. We demonstrate that a transmit power of 1 W is sufficient to transfer more than 1 mW of power to a device located at a distance of 12.3 m when using a 40 × 25 array at 3.8 GHz. The geometry-based beamformer exploiting predicted SMCs suffers a loss of only 2 dB compared with perfect channel state information.
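For orientation, the reported figures can be restated in decibels. This is plain unit arithmetic on the numbers quoted above, not a link budget from the article.

```python
import math

def to_db(ratio):
    """Power ratio to decibels."""
    return 10 * math.log10(ratio)

def from_db(db):
    """Decibels to power ratio."""
    return 10 ** (db / 10)

transfer_db = to_db(1e-3 / 1.0)  # 1 mW received for 1 W transmitted
kept = from_db(-2.0)             # 2 dB loss relative to perfect CSI
print(f"required end-to-end power gain: {transfer_db:.0f} dB")
print(f"a 2 dB loss keeps {kept:.0%} of the received power")
```

Receiving more than 1 mW from 1 W therefore means an end-to-end power transfer better than −30 dB, and the 2 dB penalty of the geometry-based beamformer corresponds to receiving roughly 63% of the power available with perfect channel state information.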

Read the full article.

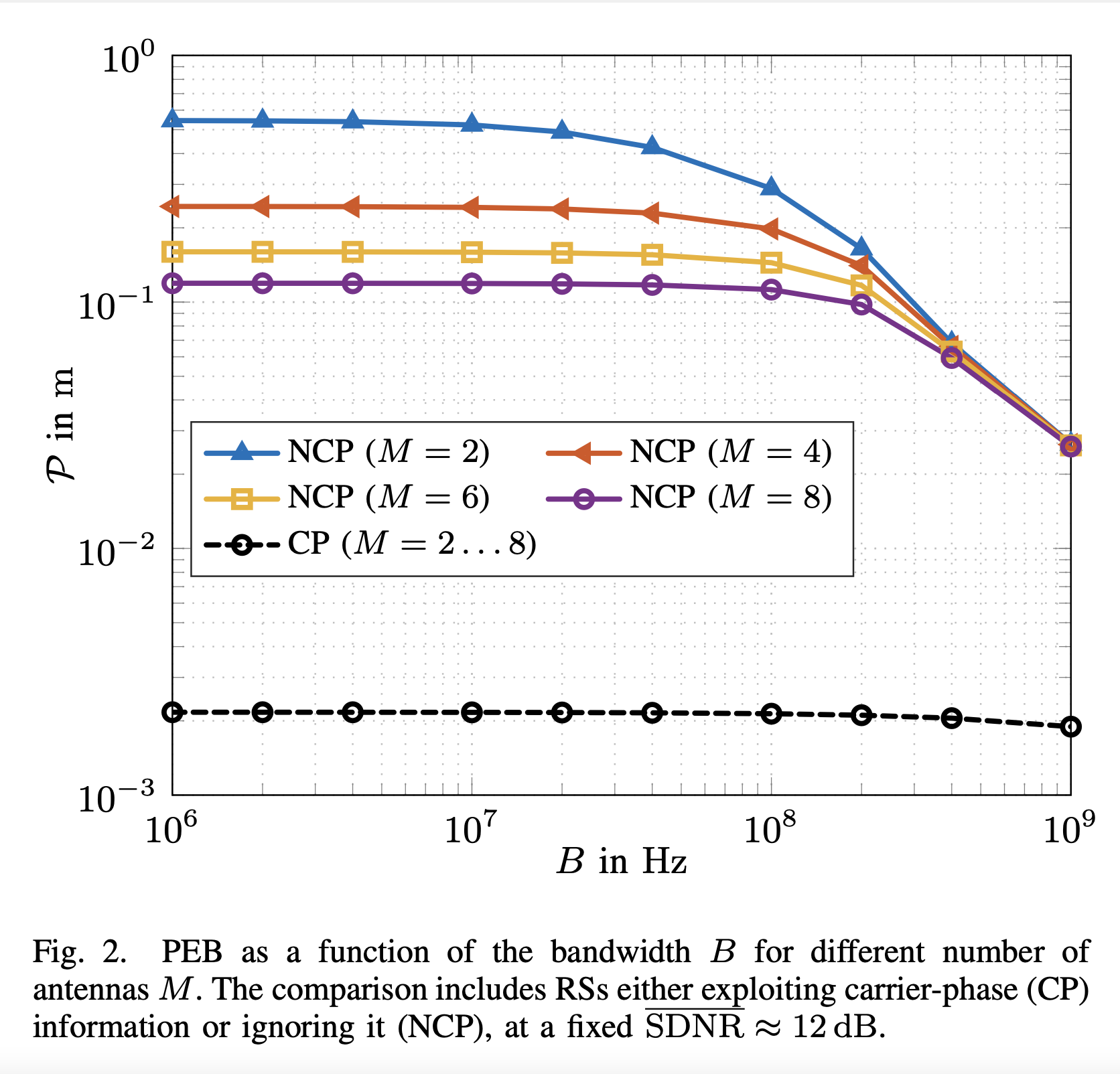

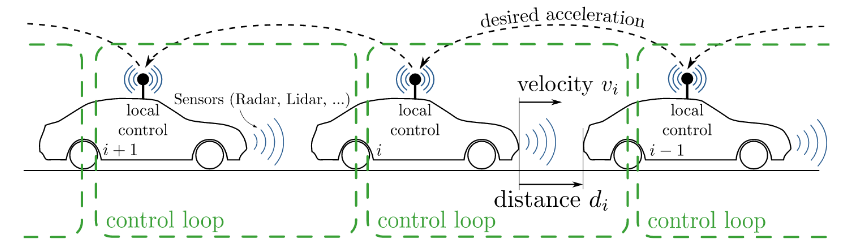

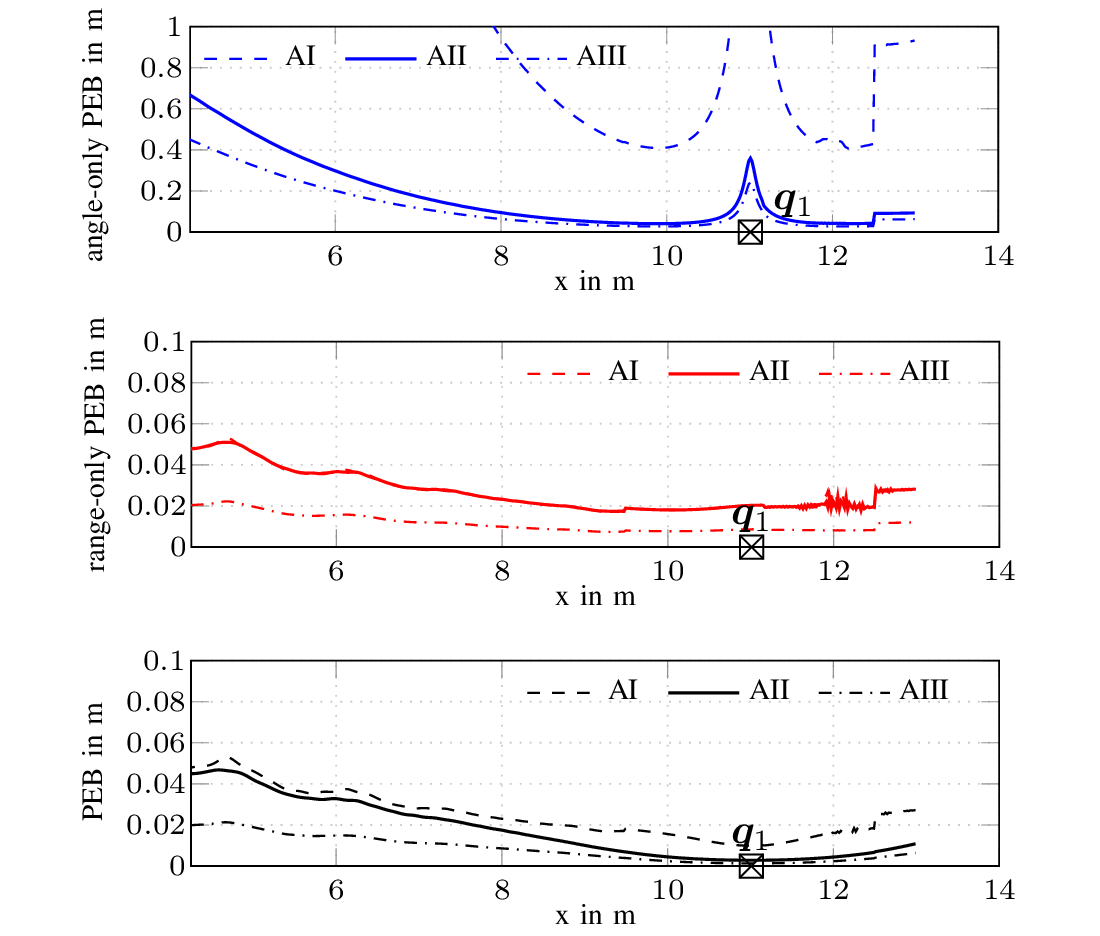

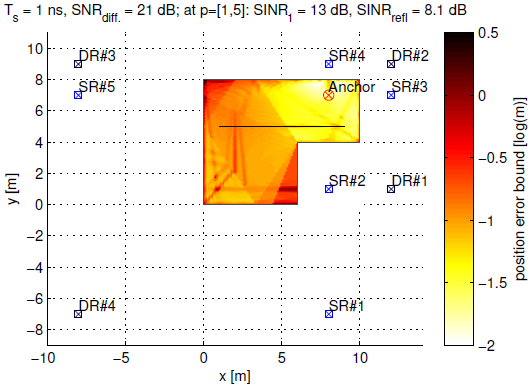

Radio stripes (RSs) are an emerging technology in beyond-5G and 6G wireless networks to support the deployment of cell-free architectures. This joint work investigates the potential use of RSs to enable joint positioning and synchronization in the uplink channel at sub-6 GHz bands. The considered scenario consists of a single-antenna user equipment (UE) that communicates with a network of multiple-antenna RSs distributed over a wide area. The UE is assumed to be unsynchronized to the RS network, while the individual RSs are time- and phase-synchronized. We formulate the problem of jointly estimating the position, clock offset, and phase offset of the UE and derive the corresponding maximum-likelihood (ML) estimator, both with and without exploiting carrier phase information. Our team at the SPSC Lab contributed a Fisher information analysis to gain fundamental insights into the achievable performance and to inspect the theoretical lower bounds numerically. Simulation results demonstrate that promising positioning and synchronization performance can be obtained in cell-free architectures supported by RSs, while also revealing the benefits of exploiting the carrier phase through phase-synchronized RSs.
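A stripped-down version of such a Fisher information analysis can be sketched for 2-D time-of-arrival ranging with an unknown clock offset. The sketch assumes single-antenna anchors, independent Gaussian range errors with standard deviation sigma, and no carrier phase, all simplifications relative to the article's RS model. Each measurement ρ_i = ‖p − a_i‖ + b contributes the outer product of its gradient with respect to θ = (x, y, b) to the Fisher information matrix (FIM); the position error bound (PEB) is the square root of the trace of the position block of the inverse FIM.

```python
import math

def inverse_3x3(m):
    """Invert a 3x3 matrix via its adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[adj[r][s] / det for s in range(3)] for r in range(3)]

def position_error_bound(agent, anchors, sigma):
    """PEB in metres for 2-D position with an unknown common clock offset (in metres)."""
    fim = [[0.0] * 3 for _ in range(3)]
    for ax, ay in anchors:
        d = math.hypot(agent[0] - ax, agent[1] - ay)
        # Gradient of rho_i w.r.t. (x, y, b): unit vector from anchor to agent, then 1
        grad = ((agent[0] - ax) / d, (agent[1] - ay) / d, 1.0)
        for r in range(3):
            for s in range(3):
                fim[r][s] += grad[r] * grad[s] / sigma**2
    crlb = inverse_3x3(fim)
    return math.sqrt(crlb[0][0] + crlb[1][1])

anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]  # hypothetical layout
print(position_error_bound((5.0, 5.0), anchors, sigma=0.1))
```

In this symmetric layout the clock offset is decoupled from the position, so the PEB equals sigma; away from the centre the offset and position couple and the bound grows, which is the kind of insight a Fisher analysis is used for.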

Read the full article.

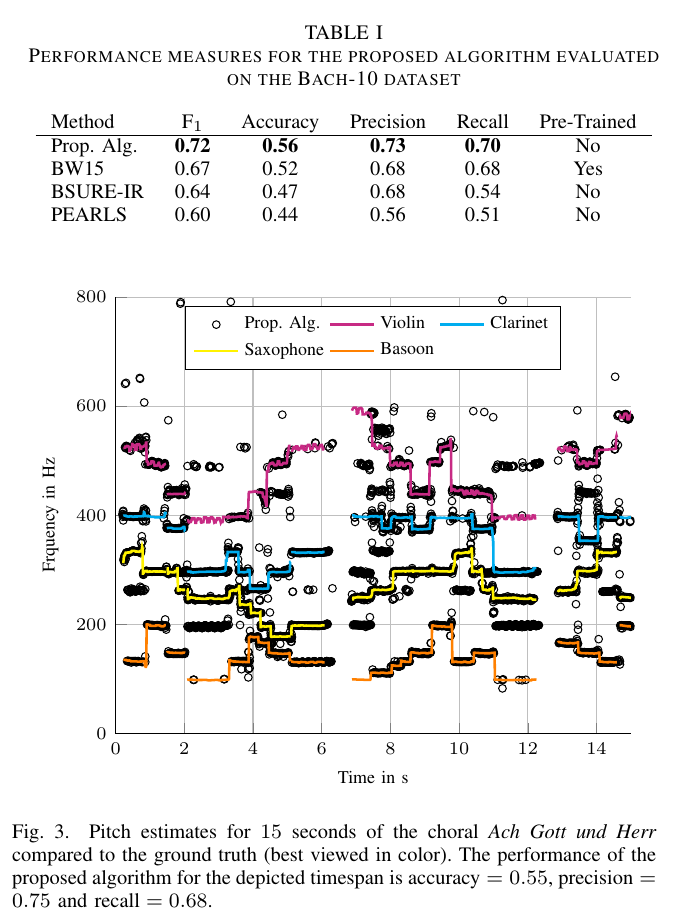

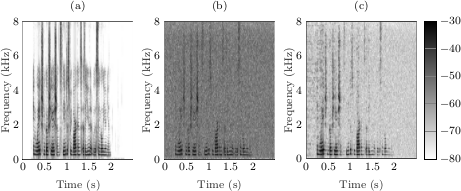

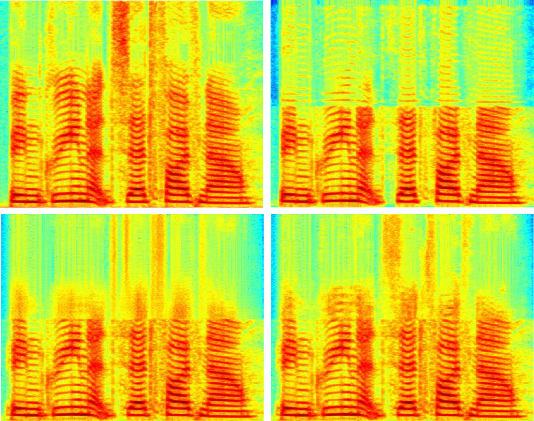

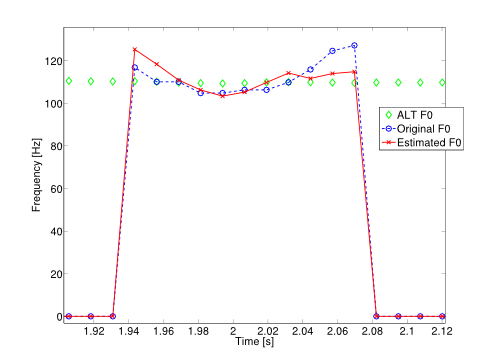

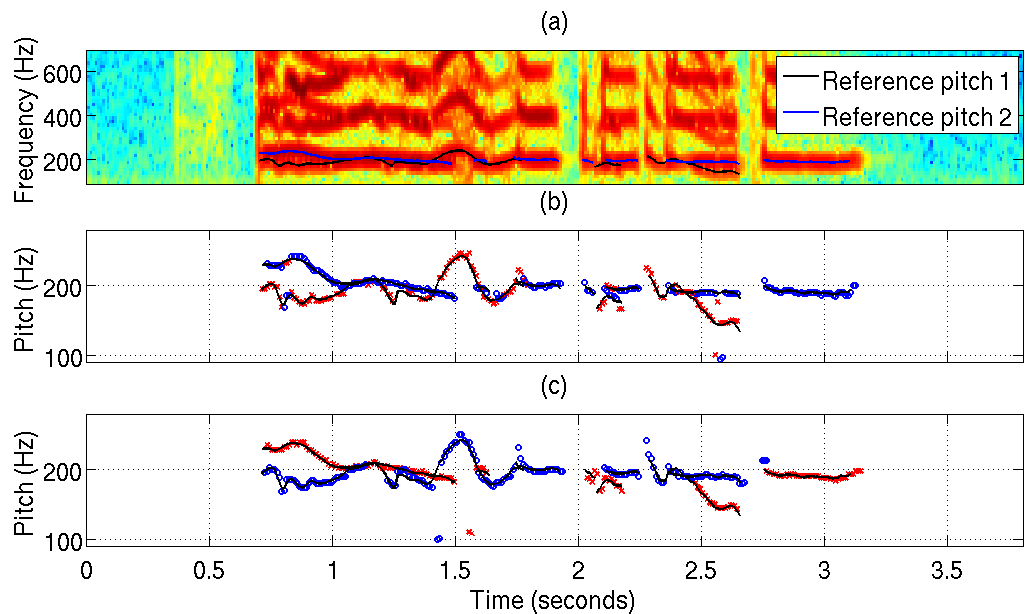

We developed a variational Bayesian inference algorithm for structured line spectra that actively exploits the structure that naturally occurs in many applications to improve estimation performance. For example, consider the audio signal produced by several notes played together in a chord. Each note is a line spectrum with a harmonic structure, i.e., each line is at a multiple of some fundamental frequency, the pitch of the note. When several notes are played together, the result is a line spectrum that is a mixture of several harmonic spectra. By explicitly considering the structure in each harmonic spectrum, our proposed method outperforms state-of-the-art multi-pitch estimation methods on the Bach-10 dataset, including machine learning methods pre-trained on the instruments in the dataset. An example of the detected pitch for several seconds of the chorale “Ach Gott und Herr” from the dataset is shown in the figure. Structured line spectra occur (approximately) in many other applications, such as the detection and estimation of extended objects using radar signals or variational mode decomposition. In both examples, we were able to outperform other state-of-the-art algorithms, demonstrating the versatility of the developed method.
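The harmonic structure the method exploits can be illustrated with a far simpler technique, harmonic summation: score each candidate pitch by the spectral magnitude at its first few multiples and pick the best. This is a classical baseline, not the variational Bayesian algorithm described above. The sampling rate, signal, and candidate set are assumptions chosen so that every probed frequency falls exactly on a DFT bin.

```python
import cmath
import math

FS = 8000  # sampling rate in Hz (assumed)
N = 8000   # one second of samples, so integer-Hz frequencies fall on DFT bins

def magnitude_at(signal, freq):
    """DFT magnitude of `signal` at `freq` Hz, scaled to a cosine's amplitude."""
    acc = sum(x * cmath.exp(-2j * math.pi * freq * n / FS) for n, x in enumerate(signal))
    return 2 * abs(acc) / len(signal)

def harmonic_score(signal, f0, n_harmonics=5):
    """Sum of magnitudes at f0, 2 f0, ..., n_harmonics * f0."""
    return sum(magnitude_at(signal, k * f0) for k in range(1, n_harmonics + 1))

def estimate_pitch(signal, candidates, n_harmonics=5):
    return max(candidates, key=lambda f0: harmonic_score(signal, f0, n_harmonics))

# A synthetic note at 220 Hz with five equal-amplitude harmonics
note = [sum(math.cos(2 * math.pi * k * 220 * n / FS) for k in range(1, 6)) for n in range(N)]
print(estimate_pitch(note, candidates=[110, 220, 330]))
```

The 110 Hz subharmonic still collects part of the energy because its even multiples coincide with the note's harmonics; such octave ambiguities, and several overlapping notes, are one place where an explicit model of the harmonic structure can help.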

Read the full article.

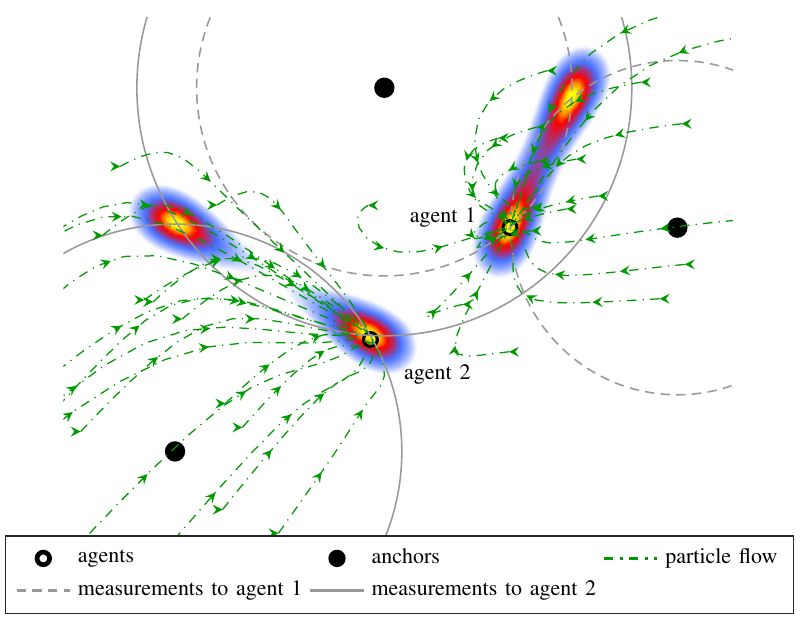

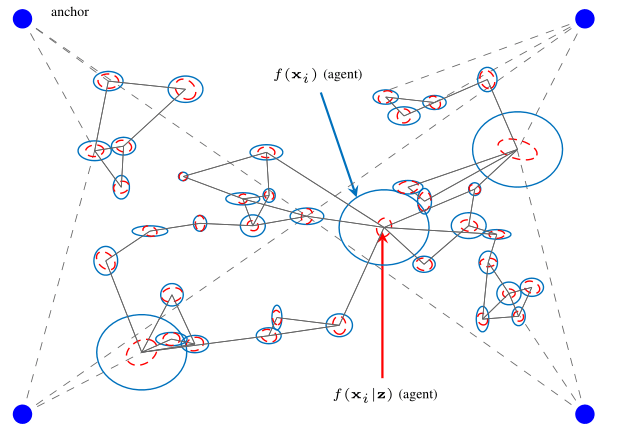

This paper derives the messages of belief propagation (BP) for cooperative localization by means of particle flow, leading to the development of a distributed particle-based message-passing algorithm that avoids particle degeneracy. Our combined particle flow-based BP approach allows the calculation of highly accurate proposal distributions for agent states with a minimal number of particles. It outperforms conventional particle-based BP algorithms in terms of accuracy and runtime. Furthermore, we compare the proposed method to a centralized particle flow-based implementation, known as the exact Daum-Huang filter, and to sigma point BP in terms of position accuracy, runtime, and memory requirement versus the network size. We further compare all methods to the theoretical performance limit provided by the posterior Cramér-Rao lower bound. Based on three different scenarios, we demonstrate the superiority of the proposed method.
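To show what a particle flow does, the sketch below migrates particles with the exact Daum-Huang (EDH) flow in the simplest setting: a scalar state, a linear Gaussian measurement z = Hx + v, and Euler integration over pseudo-time λ from 0 to 1. It is a centralized toy that assumes a known prior mean and variance, not the distributed BP message computation of the article. In this linear-Gaussian case the flow maps prior particles onto the Kalman posterior, which makes the result easy to check.

```python
def edh_flow(particles, prior_mean, prior_var, h, r, z, steps=1000):
    """Move particles from prior to posterior with the scalar exact Daum-Huang flow."""
    d_lam = 1.0 / steps
    xs = list(particles)
    for k in range(steps):
        lam = (k + 0.5) * d_lam  # midpoint of the pseudo-time step
        # A(lam) = -1/2 P H (lam H P H + R)^-1 H
        a = -0.5 * prior_var * h / (lam * h * prior_var * h + r) * h
        # b(lam) = (1 + 2 lam A) [(1 + lam A) P H R^-1 z + A x_bar]
        b = (1 + 2 * lam * a) * ((1 + lam * a) * prior_var * h / r * z + a * prior_mean)
        # Euler step of dx/dlam = A x + b for every particle
        xs = [x + d_lam * (a * x + b) for x in xs]
    return xs

prior_mean, prior_var, h, r, z = 0.0, 1.0, 1.0, 1.0, 2.0
post_mean = prior_mean + prior_var * h / (h * prior_var * h + r) * (z - h * prior_mean)
flowed = edh_flow([prior_mean - 1.0, prior_mean, prior_mean + 1.0], prior_mean, prior_var, h, r, z)
print(post_mean, flowed)
```

Particles one prior standard deviation from the mean end up one posterior standard deviation (here 1/√2) from the posterior mean, without any resampling step, which is how particle flow sidesteps weight degeneracy.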

Read the full article.

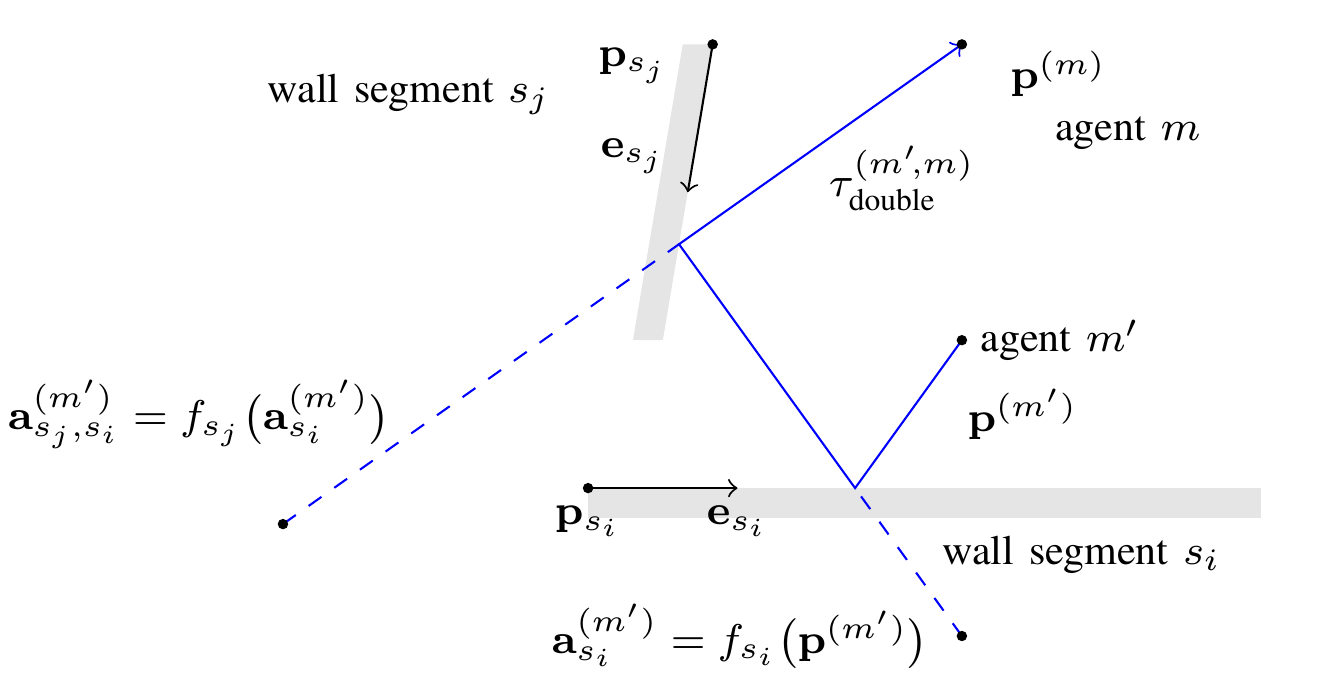

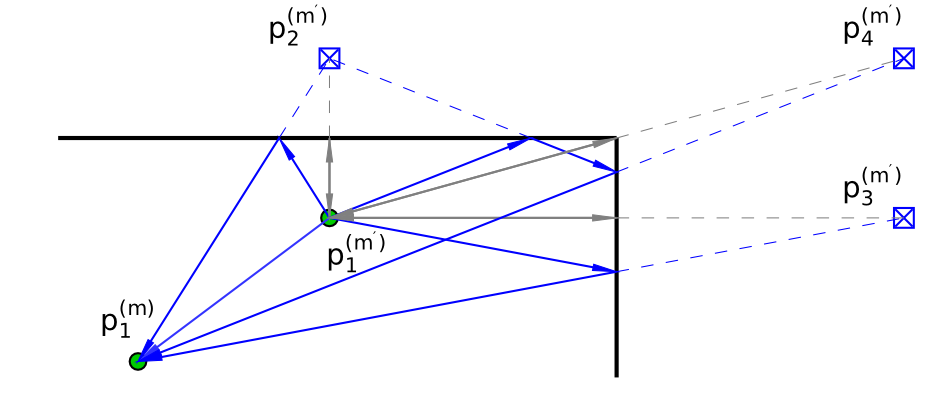

Multipath-based simultaneous localization and mapping (SLAM) is an emerging paradigm for accurate indoor localization with limited resources. The goal of multipath-based SLAM is to detect and localize radio reflective surfaces to support the estimation of time-varying positions of mobile agents. Radio reflective surfaces are typically represented by so-called virtual anchors (VAs), which are mirror images of base stations at the surfaces. In existing multipath-based SLAM methods, a VA is introduced for each propagation path, even if the goal is to map the reflective surfaces. Because every propagation path rather than every reflective surface is modeled by a VA, it is difficult to consistently combine (“fuse”) statistical information across multiple paths and base stations, which limits the accuracy and mapping speed of existing multipath-based SLAM methods. In this paper, we introduce an improved statistical model and estimation method that enables data fusion for multipath-based SLAM by representing each surface by a single master virtual anchor (MVA). We further develop a particle-based sum-product algorithm (SPA) that performs probabilistic data association to compute marginal posterior distributions of MVA and agent positions efficiently. A key aspect of the proposed estimation method based on MVAs is to check the availability of single-bounce and double-bounce propagation paths at a specific agent position by means of ray-launching. The availability check is directly integrated into the statistical model by providing detection probabilities for probabilistic data association. Our numerical simulation results demonstrate significant improvements in estimation accuracy and mapping speed compared to state-of-the-art multipath-based SLAM methods.
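One way to read the MVA idea geometrically, assuming 2-D geometry and single-bounce paths only: if the MVA is the mirror image of a reference base station at a wall, the wall is the perpendicular bisector of the segment between them, so the VA of any other base station at the same wall follows by mirroring that base station across the bisector. The function below is a hypothetical illustration under these assumptions, not the article's estimator, and it does not cover double-bounce paths.

```python
def va_from_mva(mva, reference_bs, other_bs):
    """Virtual anchor of `other_bs` at the wall implied by (mva, reference_bs), in 2-D."""
    # Wall normal: direction from the reference base station to its mirror image
    nx, ny = mva[0] - reference_bs[0], mva[1] - reference_bs[1]
    # A point on the wall: midpoint between the base station and its mirror image
    mx, my = (mva[0] + reference_bs[0]) / 2, (mva[1] + reference_bs[1]) / 2
    norm_sq = nx * nx + ny * ny
    # Signed offset of other_bs from the wall, in units of the (unnormalized) normal
    s = ((other_bs[0] - mx) * nx + (other_bs[1] - my) * ny) / norm_sq
    return (other_bs[0] - 2 * s * nx, other_bs[1] - 2 * s * ny)

# Wall along x = 5: base station at (2, 1) has its mirror image (MVA) at (8, 1)
print(va_from_mva(mva=(8.0, 1.0), reference_bs=(2.0, 1.0), other_bs=(1.0, 4.0)))
```

Because every base station's VA at that wall is a deterministic function of one MVA, measurements from all base stations can update the same map feature, which is the fusion benefit the paragraph describes.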

Read the full article.

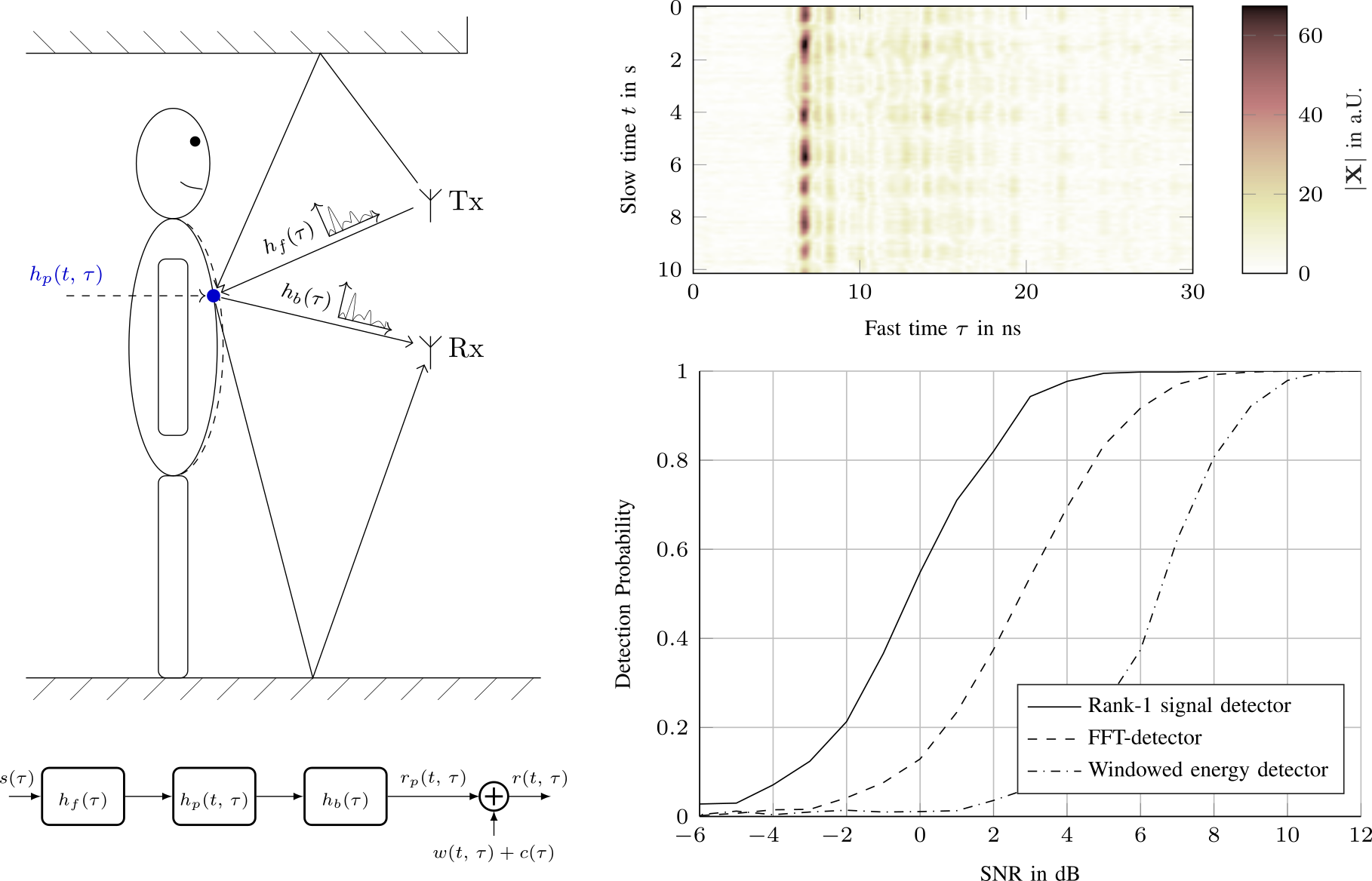

We apply a variational message passing scheme to detect the presence of children in parked cars using multistatic UWB radar.

Read the full article.

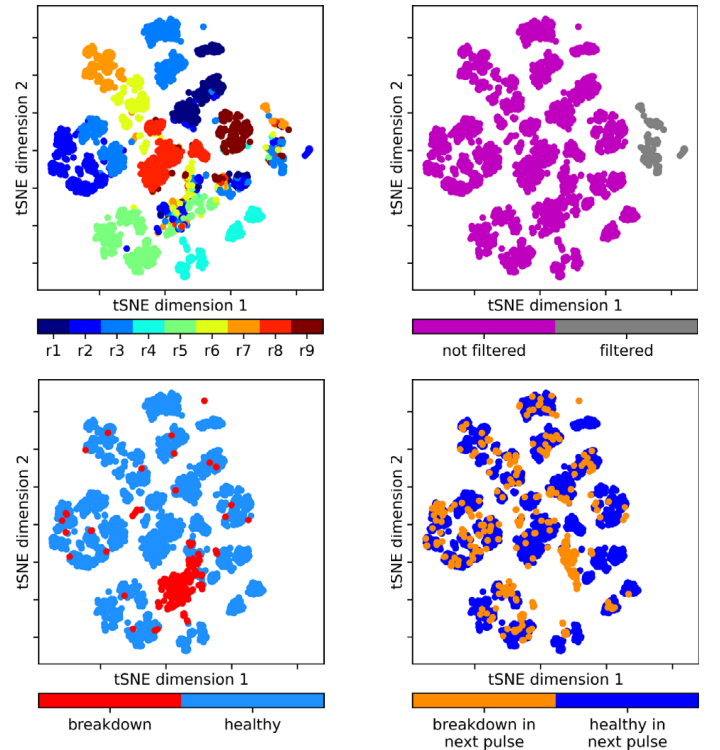

In our latest project with CERN, we used machine learning to analyze breakdowns in a test bench for the CLIC accelerator. In particle accelerators, one of the most prevalent limits on high-gradient operation is the occurrence of vacuum arcs, commonly known as radio frequency (RF) breakdowns. During a breakdown, field enhancement associated with small deformations on the cavity surface results in electrical arcs that may irreparably damage the RF cavity surface. In the project, supervised and unsupervised methods were used for data analysis and a breakdown prediction study. ‘Explainable AI’ made it possible to interpret learned model parameters and to reverse engineer physical properties of the test bench. Similar models could be applied to cancer treatment, light sources, and CERN’s next-generation high-energy physics facilities.

Read the full article.

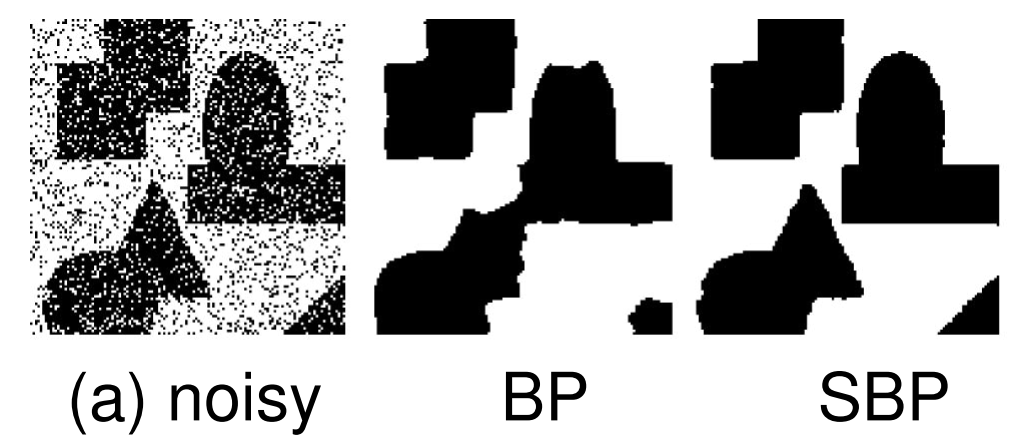

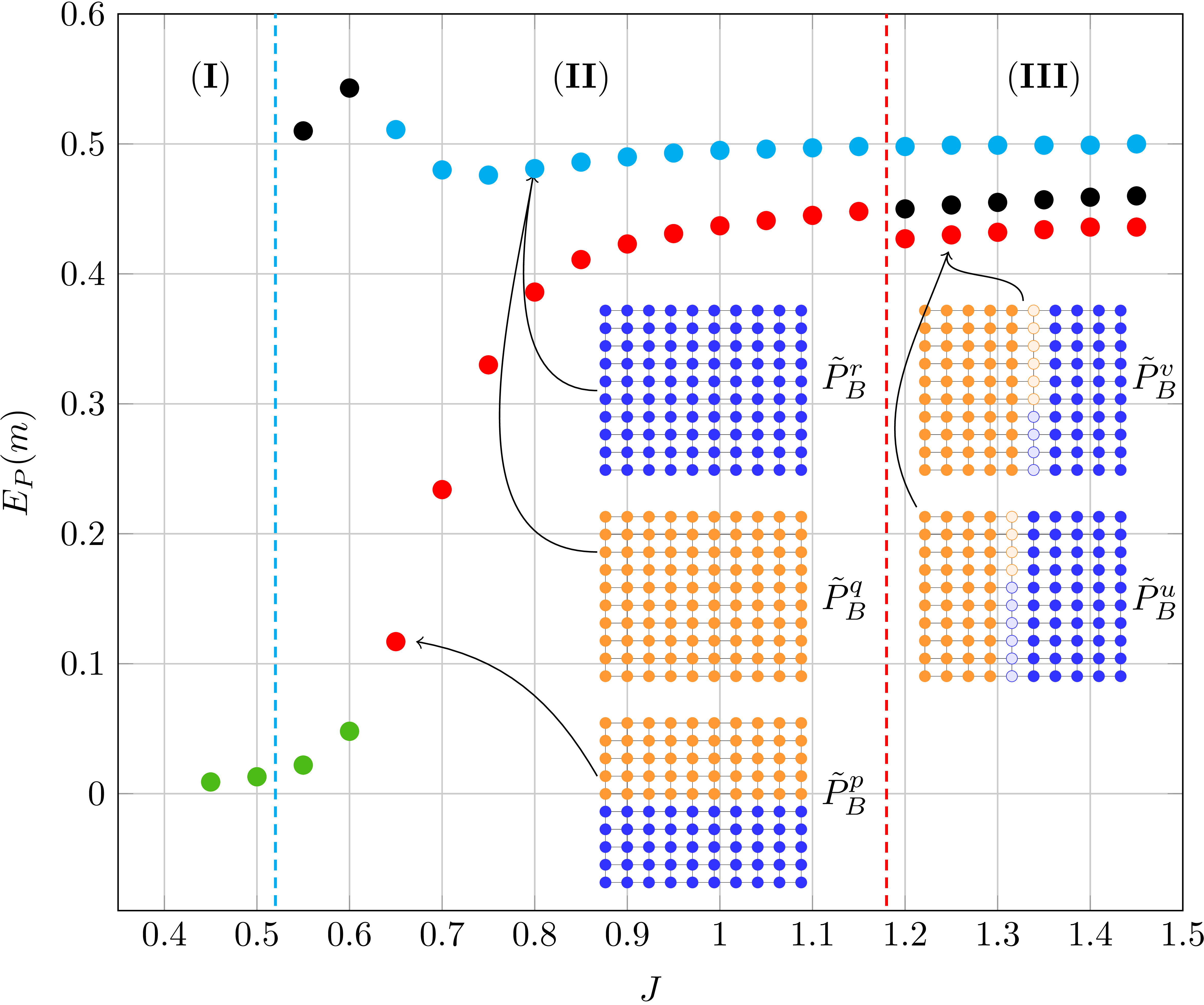

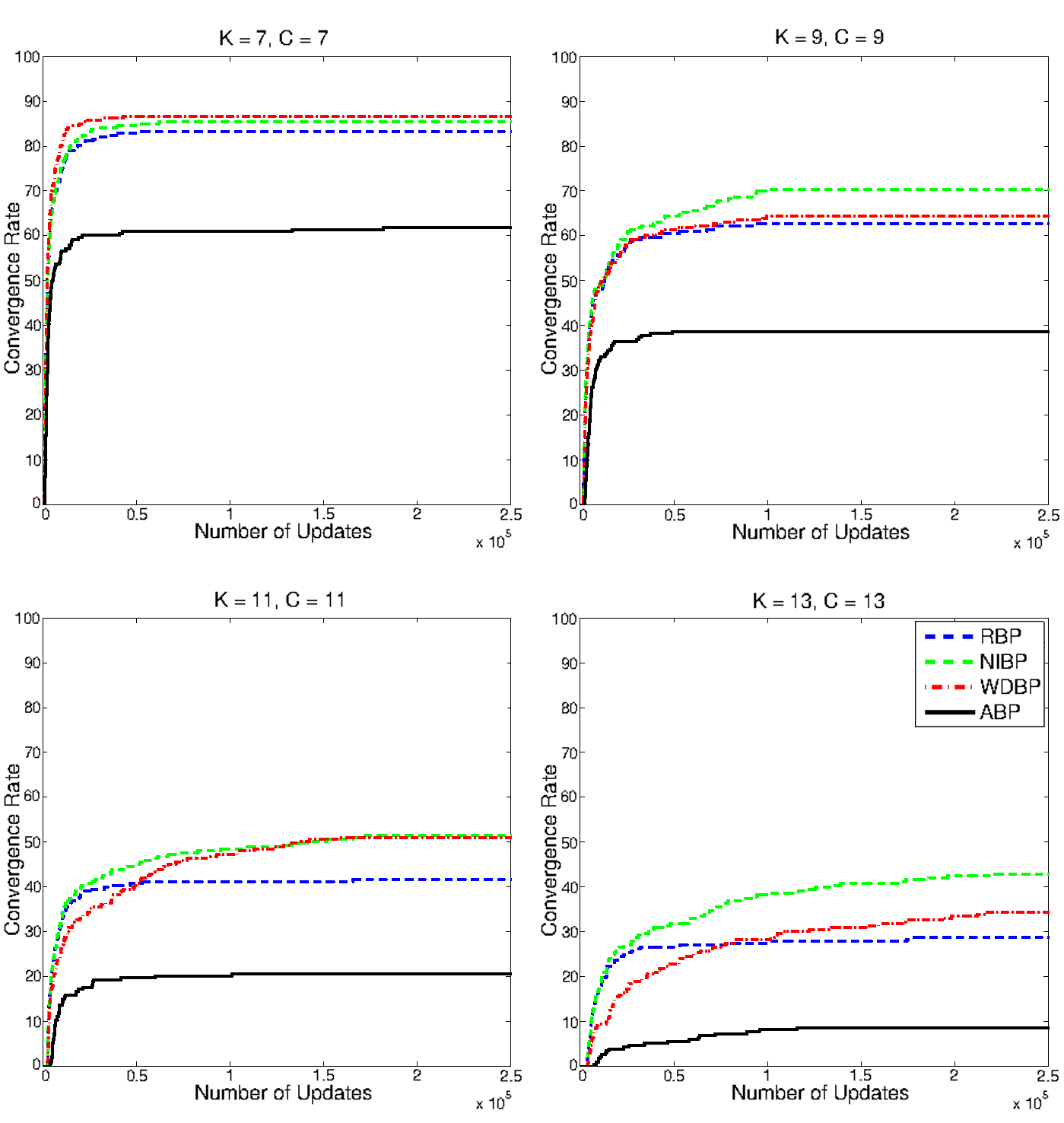

Belief propagation (BP) is a popular method for performing probabilistic inference on graphical models. In this work, we show how one can improve the performance of BP by solving a sequence of models that starts with independent variables. We term this approach self-guided belief propagation (SBP) and theoretically demonstrate that SBP finds the global optimum of the Bethe approximation for attractive models where all variables favor the same state. Moreover, we apply SBP to various graphs (random graphs and graphs corresponding to problems in wireless communications and computer vision) and show that (i) SBP is superior in terms of accuracy whenever BP converges, and (ii) SBP obtains a unique, stable, and accurate solution whenever BP does not converge.
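A minimal sketch of the self-guided idea on a binary pairwise (Ising) model, assuming that the "sequence of models" is obtained by scaling all couplings by a factor α that grows from 0 (independent variables) to 1 (the target model), with BP messages warm-started from the previous α; the article's exact schedule may differ. Messages are cavity fields in the log domain, and BP updates are synchronous.

```python
import math

def bp_ising(edges, h, J, alpha, msgs=None, iters=200, tol=1e-10):
    """Synchronous loopy BP for p(x) ~ exp(alpha * sum J_ij x_i x_j + sum h_i x_i), x_i in {-1, +1}."""
    n = len(h)
    nbrs = {i: [] for i in range(n)}
    coupling = {}
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
        coupling[(i, j)] = coupling[(j, i)] = J[(i, j)]
    if msgs is None:
        msgs = {(i, j): 0.0 for i in range(n) for j in nbrs[i]}
    for _ in range(iters):
        new = {}
        for i, j in msgs:
            # Message i -> j: cavity field of i (excluding j) passed through the coupling
            cavity = h[i] + sum(msgs[(k, i)] for k in nbrs[i] if k != j)
            new[(i, j)] = math.atanh(math.tanh(alpha * coupling[(i, j)]) * math.tanh(cavity))
        delta = max((abs(new[key] - msgs[key]) for key in msgs), default=0.0)
        msgs = new
        if delta < tol:
            break
    mags = [math.tanh(h[i] + sum(msgs[(k, i)] for k in nbrs[i])) for i in range(n)]
    return mags, msgs

def self_guided_bp(edges, h, J, steps=20):
    """Run BP on models with couplings scaled from 0 to 1, warm-starting the messages."""
    msgs = None
    mags = None
    for s in range(steps + 1):
        alpha = s / steps  # alpha = 0: independent variables; alpha = 1: target model
        mags, msgs = bp_ising(edges, h, J, alpha, msgs)
    return mags

edges = [(0, 1), (1, 2), (2, 0)]
h = [0.2, 0.1, -0.1]
J = {(0, 1): 0.5, (1, 2): 0.5, (2, 0): 0.5}  # attractive: all couplings positive
print(self_guided_bp(edges, h, J))
```

On a tree, BP and hence this loop are exact, which gives a simple sanity check against brute-force enumeration; the benefits claimed in the article concern loopy graphs such as the triangle above.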

Read the full article.

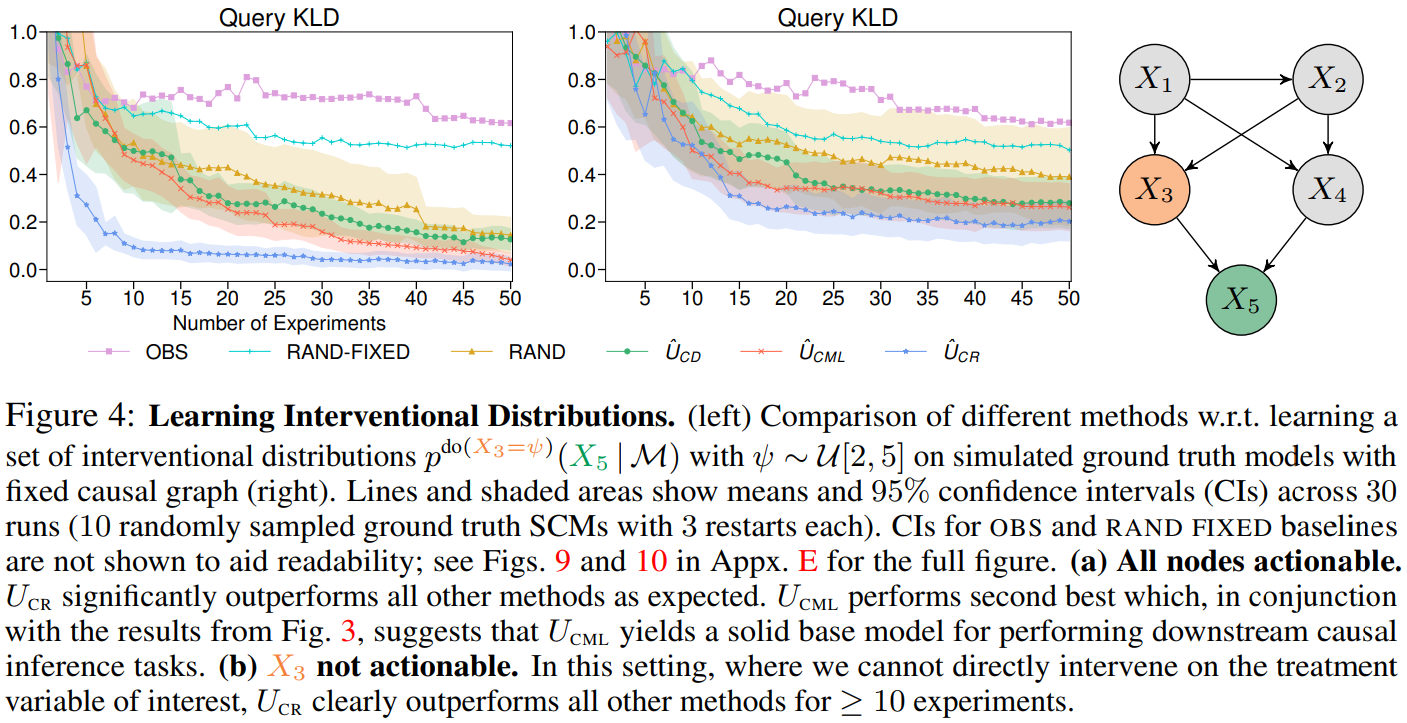

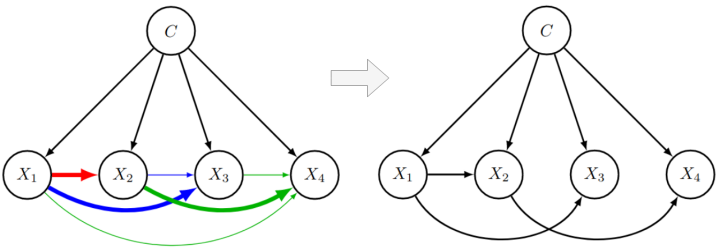

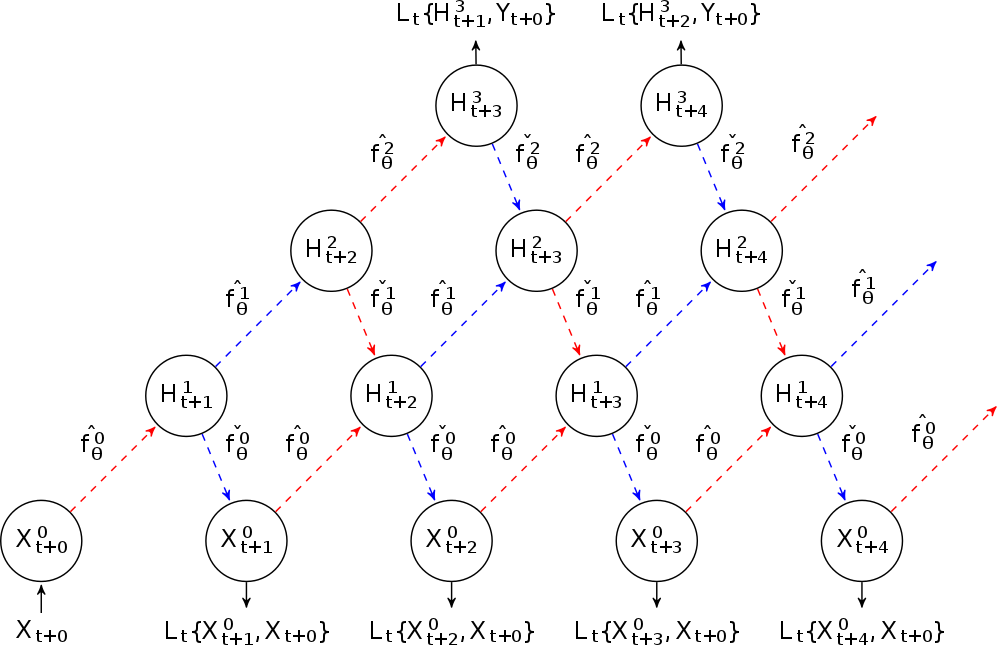

Causal discovery and causal reasoning are classically treated as separate and consecutive tasks: one first infers the causal graph, and then uses it to estimate causal effects of interventions. However, such a two-stage approach is uneconomical, especially in terms of actively collected interventional data, since the causal query of interest may not require a fully-specified causal model. From a Bayesian perspective, it is also unnatural, since a causal query (e.g., the causal graph or some causal effect) can be viewed as a latent quantity subject to posterior inference; other unobserved quantities that are not of direct interest (e.g., the full causal model) ought to be marginalized out in this process and contribute to our epistemic uncertainty. In this work, we propose Active Bayesian Causal Inference (ABCI), a fully-Bayesian active learning framework for integrated causal discovery and reasoning, which jointly infers a posterior over causal models and queries of interest. In our approach to ABCI, we focus on the class of causally-sufficient, nonlinear additive noise models, which we model using Gaussian processes. We sequentially design experiments that are maximally informative about our target causal query, collect the corresponding interventional data, and update our beliefs to choose the next experiment. Through simulations, we demonstrate that our approach is more data-efficient than several baselines that only focus on learning the full causal graph. This allows us to accurately learn downstream causal queries from fewer samples while providing well-calibrated uncertainty estimates for the quantities of interest.

Read the full article.

Within the REINDEER H2020 project, we investigate the potential of using physically large or distributed antenna arrays to transmit power wirelessly to batteryless energy-neutral (EN) devices. An enabling milestone to make the technology feasible is solving the initial-access problem, i.e., waking up an EN device with unknown channel state information (CSI).

Read the full article.

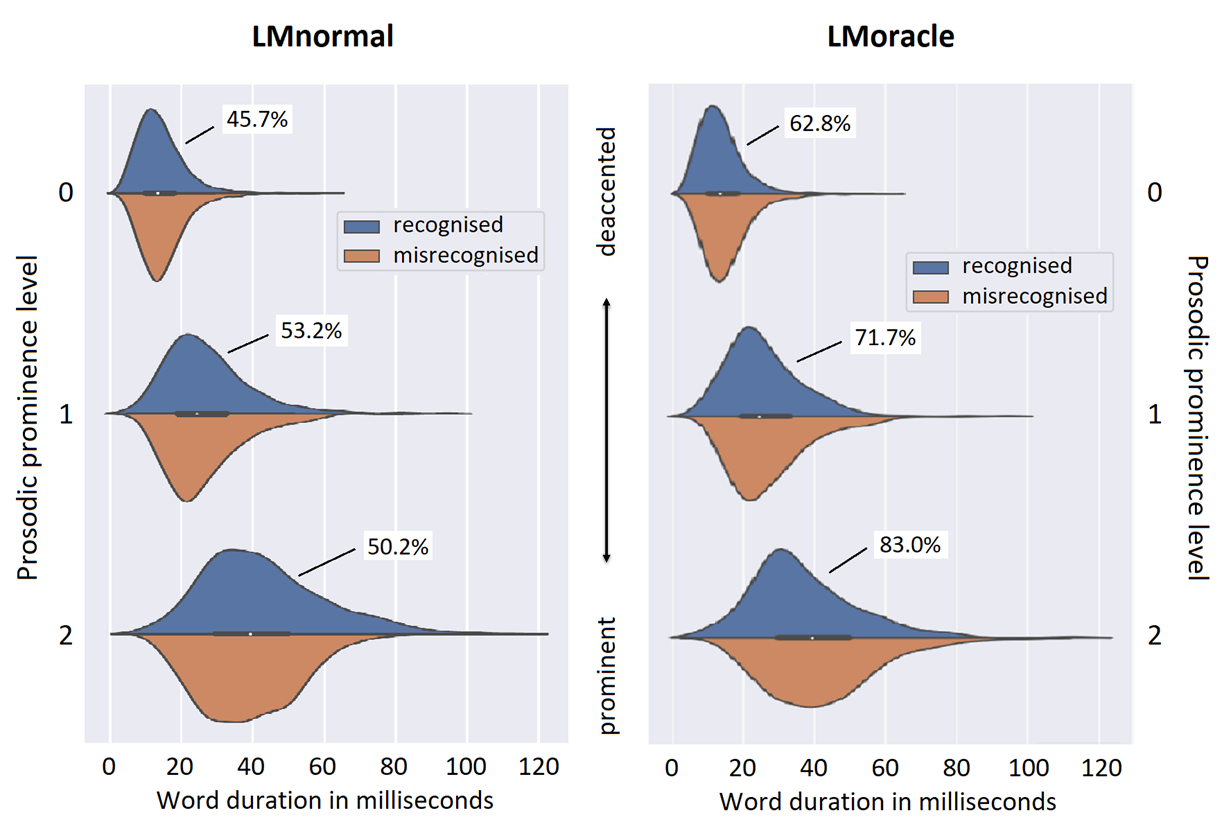

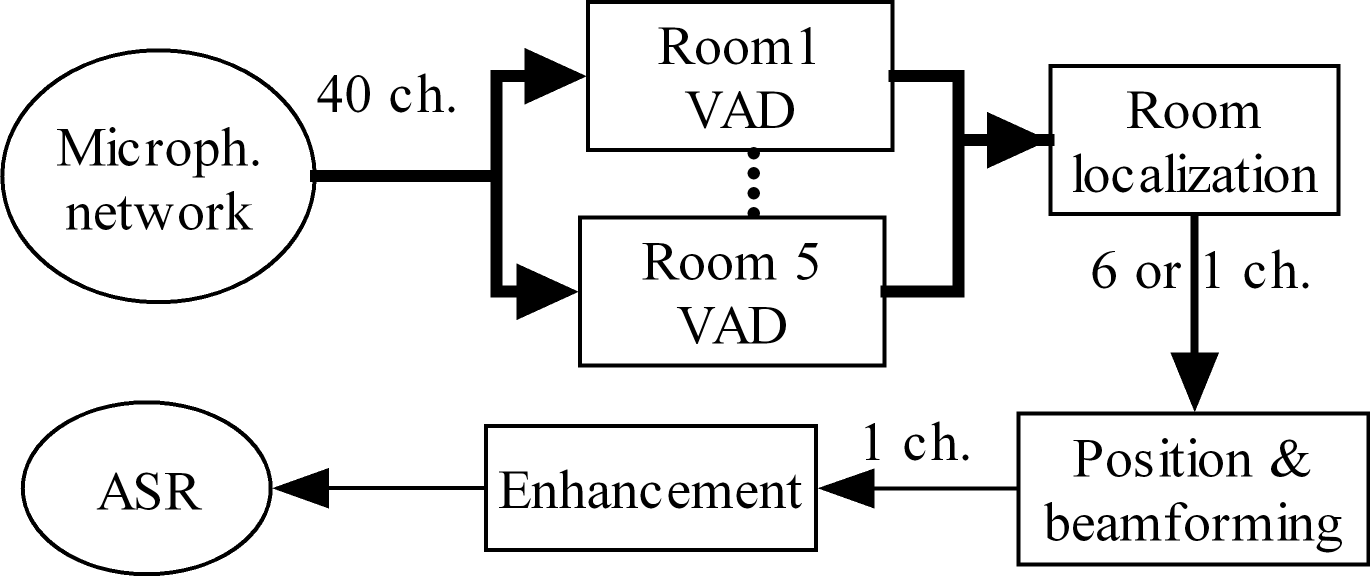

The performance of automatic speech recognition (ASR) systems varies with the speaking style of the data to be recognised. Whereas read speech, voice commands, and broadcast news are nowadays well recognised by standard ASR systems, conversational speech remains challenging for multiple reasons.

Read the full article.

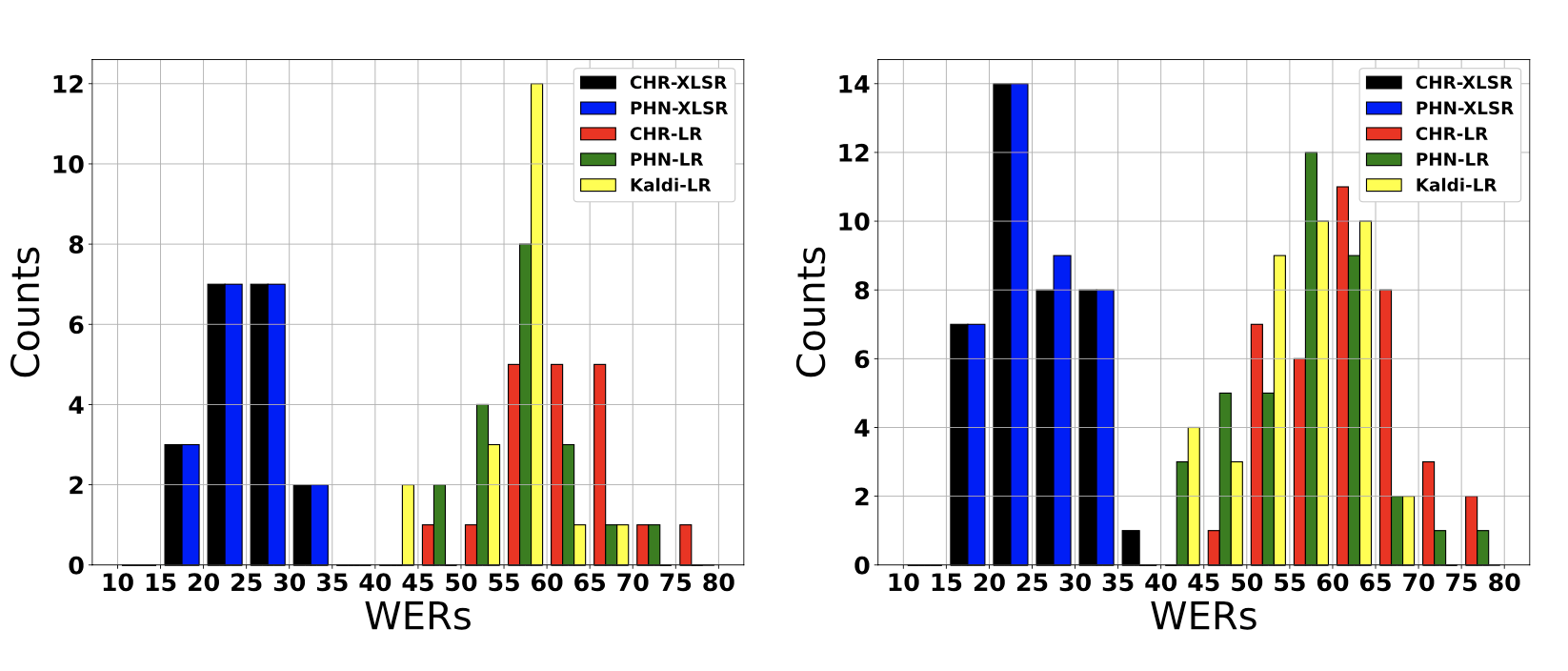

Left: Histogram showing conversation-dependent WERs of low-resource (LR) and data-driven (XLSR) 4-gram models. Right: Histogram showing speaker-dependent WERs of low-resource (LR) and data-driven (XLSR) 4-gram models.

Read the full article.

We show that the UWB nodes of the keyless-access system of a car can be used as radar sensors to detect whether the car is occupied.

Read the full article.

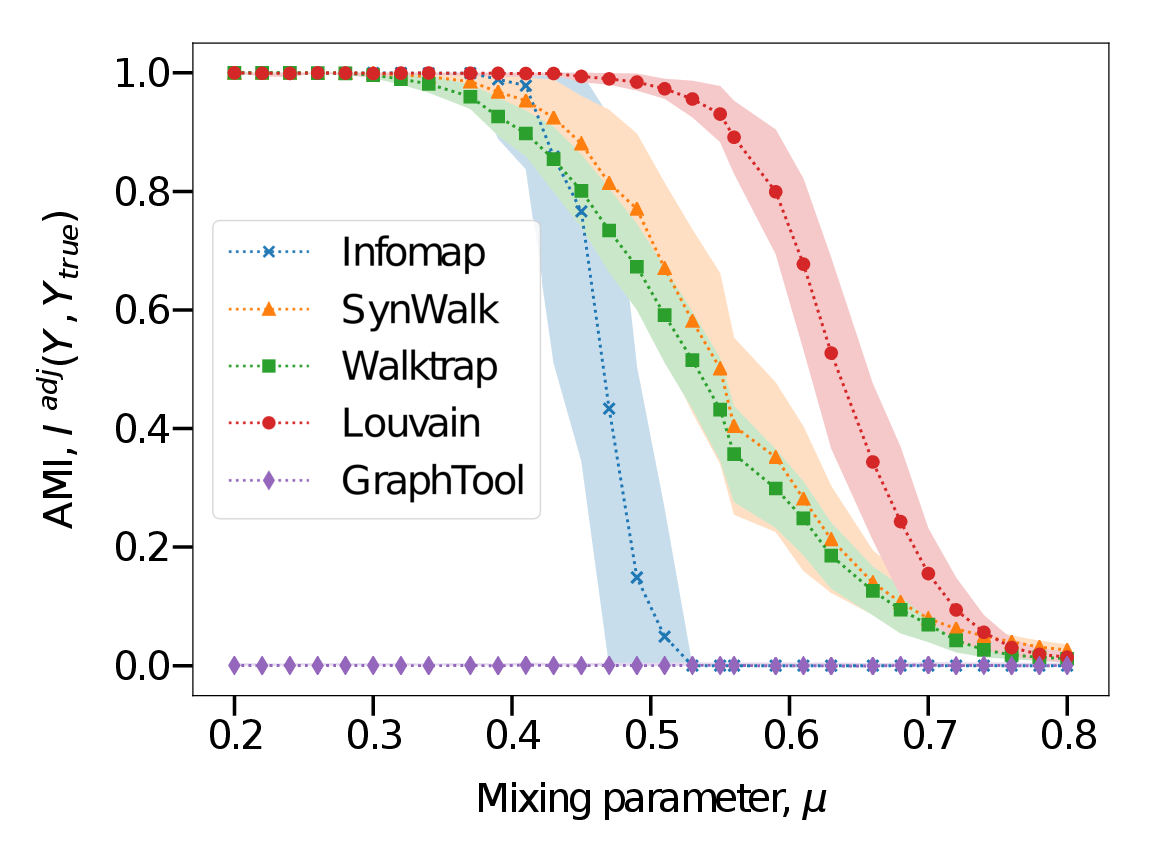

Complex systems, abstractly represented as networks, are ubiquitous in everyday life. Analyzing and understanding these systems requires, among other things, tools for community detection. As no single best community detection algorithm can exist, robustness across a wide variety of problem settings is desirable. In this work, we present Synwalk, a random walk-based community detection method. Synwalk builds on a solid theoretical basis and detects communities by synthesizing the random walk induced by the given network from a class of candidate random walks. We thoroughly validate the effectiveness of our approach on synthetic and empirical networks and compare Synwalk’s performance with that of Infomap and Walktrap (also random walk-based), Louvain (based on modularity maximization), and stochastic block model inference. Our results indicate that Synwalk performs robustly on networks with varying mixing parameters and degree distributions. We outperform Infomap on networks with a high mixing parameter, and both Infomap and Walktrap on networks with many small communities and low average degree. Our work has the potential to inspire further development of community detection via synthesis of random walks, and we provide concrete ideas for future research.

Read the full article.

Uncertainty estimation and out-of-distribution robustness are vital aspects of modern deep learning. Predictive uncertainty supplements model predictions and improves the functionality of downstream tasks, including various resource-constrained embedded and mobile applications. Popular examples are virtual reality (VR), augmented reality (AR), sensor fusion, perception, and health applications including fitness indicators, arrhythmia detection, and skin lesion detection. Robust and reliable predictions with uncertainty estimates are increasingly important when operating on noisy in-the-wild data from sensory inputs. A large variety of deep learning architectures have been applied to various tasks with great success in terms of prediction quality; however, producing reliable and robust uncertainty estimates without additional computational and memory overhead remains a challenge. This issue is further aggravated by the limited computational and memory budget available in typical battery-powered mobile devices.

Read the full article.

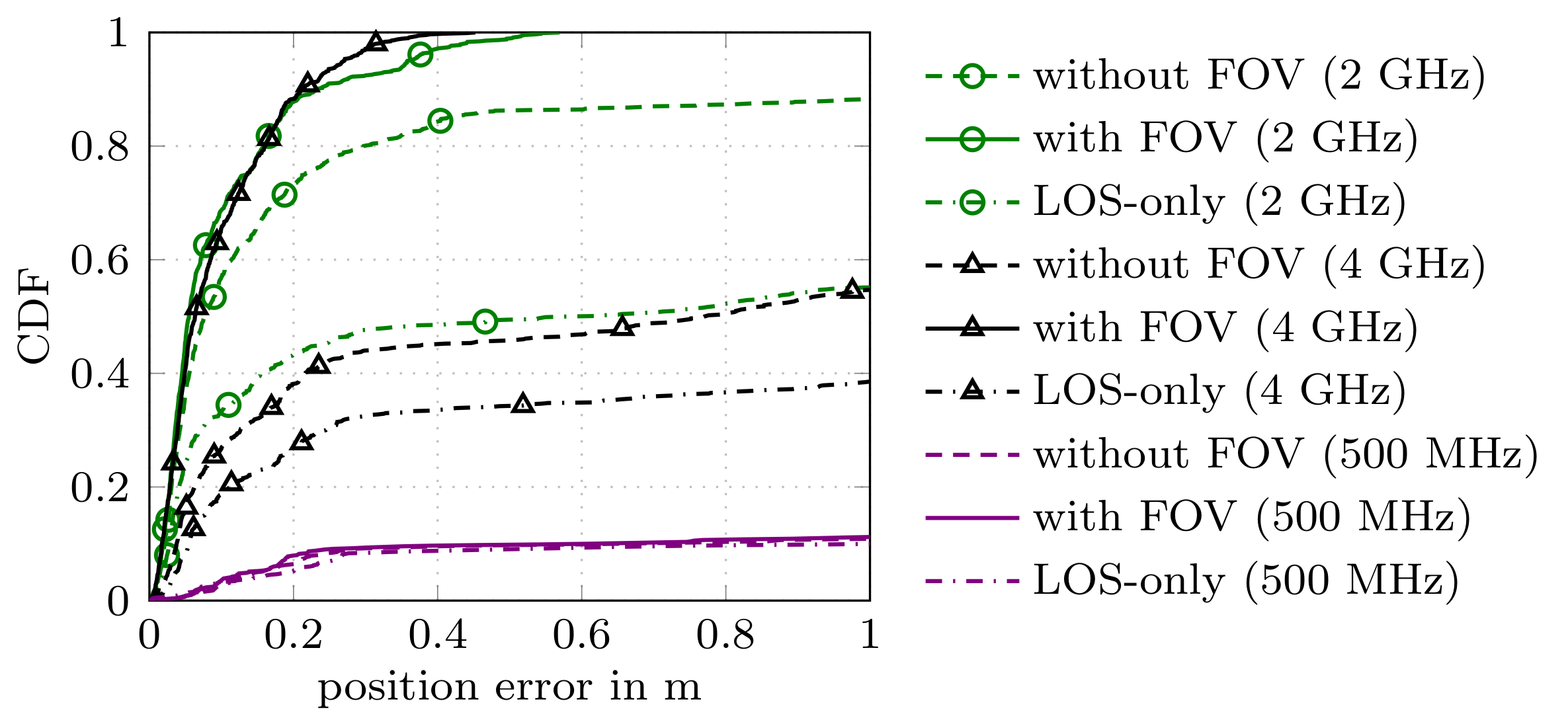

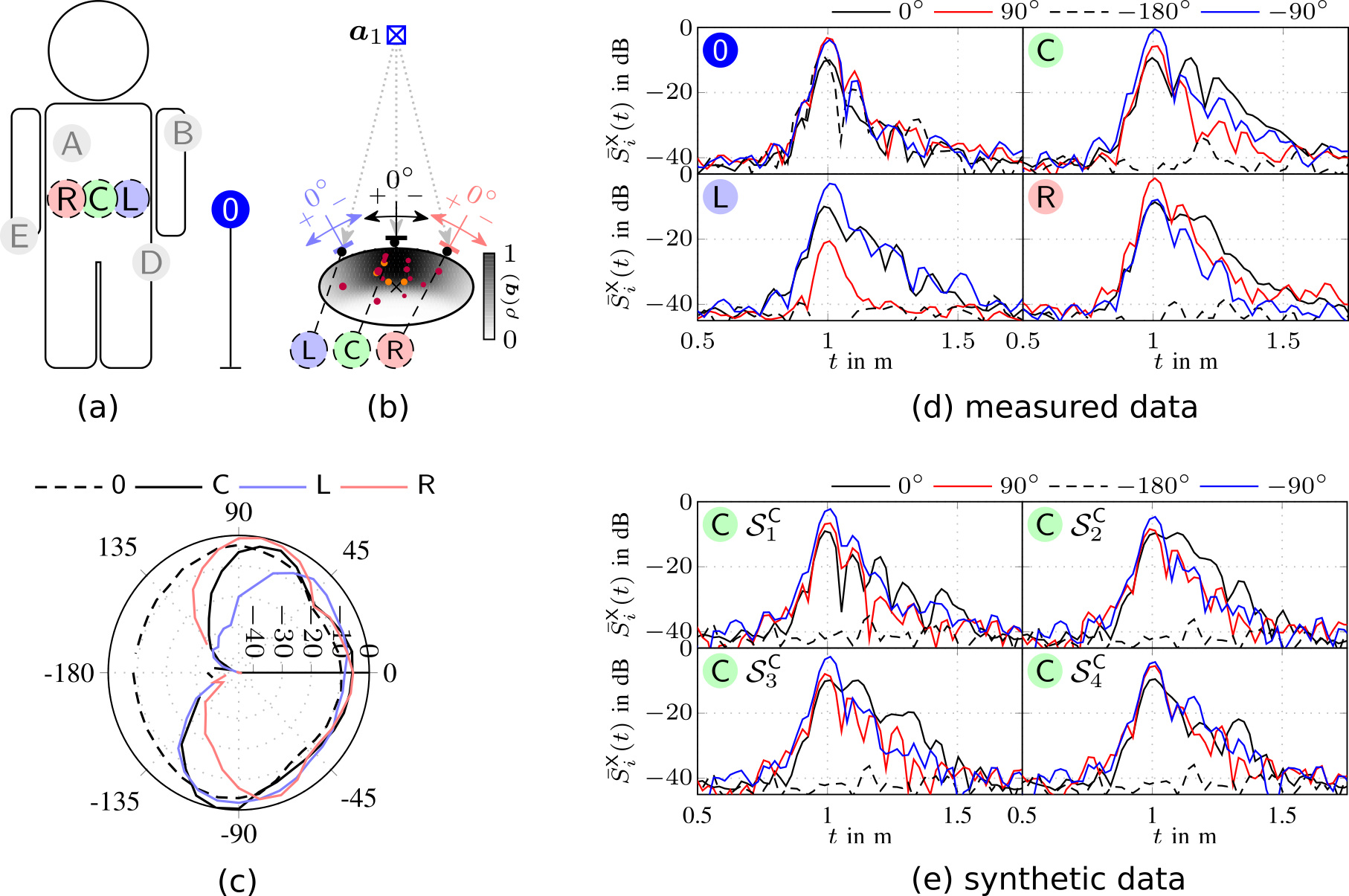

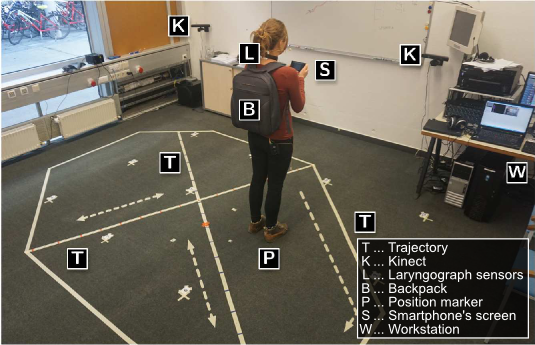

In this work, we consider multipath-based positioning and tracking in off-body channels. Based on channel measurements obtained in an indoor scenario, we analyse the effects introduced by the human body that are relevant for positioning and tracking. As the signal bandwidth is known to affect the achievable positioning accuracy, we investigate how the field of view (FOV) induced by body shadowing and the number of multipath components (MPCs) detected and estimated by a deterministic maximum likelihood (ML) algorithm depend on the bandwidth. We propose a multipath-based positioning and tracking algorithm that associates estimated MPC parameters with floor plan features and exploits a human-body-dependent FOV function. The proposed algorithm provides accurate position estimates even for an off-body radio channel in a multipath-prone environment, with the signal bandwidth found to be a limiting factor.

Read the full article.

In cooperative localization applications, the parameters of the measurement model are often assumed to be known even though they can depend strongly on the environment. This assumption can reduce localization accuracy due to parameter mismatch. In this paper, we propose an adaptive factor-graph-based algorithm for joint cooperative localization and orientation estimation that inherently estimates all unknown model parameters as well as the measurement uncertainty. We use received signal strength (RSS) measurements and account for the directivity of the antennas with a parametric antenna pattern. We validate the proposed method with simulations in a static scenario and show that there is only a small loss in positioning accuracy compared to the case where the model parameters and measurement noise are known.
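Estimating measurement-model parameters instead of fixing them can be illustrated with the log-distance path-loss model RSS = P0 − 10·n·log10(d/d0). The sketch assumes known distances and an isotropic antenna; the article additionally estimates orientation and a parametric antenna pattern within a factor graph. Here P0, the path-loss exponent n, and the residual noise level are fitted by ordinary least squares.

```python
import math

def fit_path_loss(distances, rss_dbm, d0=1.0):
    """Least-squares fit of (P0, n) in RSS = P0 - 10 n log10(d / d0), plus noise std."""
    xs = [-10 * math.log10(d / d0) for d in distances]
    k = len(xs)
    mean_x = sum(xs) / k
    mean_y = sum(rss_dbm) / k
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, rss_dbm))
    n = sxy / sxx             # slope: path-loss exponent
    p0 = mean_y - n * mean_x  # intercept: RSS at the reference distance d0
    residuals = [y - (p0 + n * x) for x, y in zip(xs, rss_dbm)]
    noise_std = math.sqrt(sum(e * e for e in residuals) / max(k - 2, 1))
    return p0, n, noise_std

distances = [1.0, 2.0, 4.0, 8.0, 16.0]
rss = [-40.0 - 10 * 2.5 * math.log10(d) for d in distances]  # synthetic, noise-free
print(fit_path_loss(distances, rss))
```

With real measurements, the fitted noise standard deviation plays the role of the measurement uncertainty that would otherwise have to be assumed.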

Read the full article.

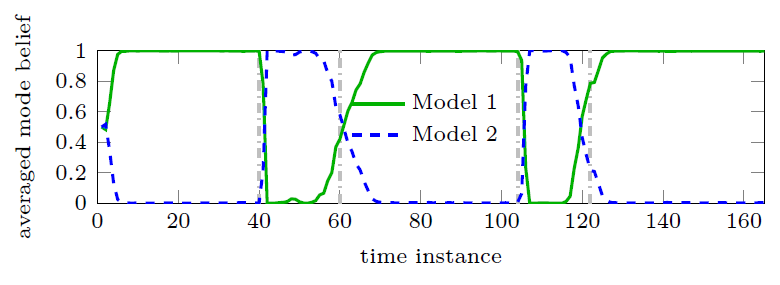

In this work, we present a Bayesian multipath-based simultaneous localization and mapping (SLAM) algorithm that continuously adapts the parameters of interacting multiple models (IMM) describing the mobile agent state dynamics. The time evolution of the IMM parameters is described by a Markov chain, and the parameters are incorporated into the factor graph that represents the statistical structure of the SLAM problem. The proposed belief propagation (BP)-based algorithm adapts online to time-varying system models by jointly inferring the model parameters along with the agent and map feature states. Finally, the performance of the proposed algorithm is evaluated in a simulated scenario. Our numerical simulation results show that the proposed multipath-based SLAM algorithm can cope with strongly changing agent state dynamics.
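The Markov-chain part of an IMM can be sketched in a few lines: mode probabilities are predicted with a transition matrix and then reweighted by each mode's measurement likelihood. The two modes, the transition matrix, and the likelihood values are hypothetical; the article goes further and adapts the IMM parameters themselves within the BP algorithm, which this sketch does not do.

```python
def imm_mode_update(mode_probs, transition, likelihoods):
    """One IMM mode-probability step.

    transition[i][j] = P(mode j at time k | mode i at time k-1).
    """
    m = len(mode_probs)
    # Prediction through the Markov chain
    predicted = [sum(mode_probs[i] * transition[i][j] for i in range(m)) for j in range(m)]
    # Bayes update with the per-mode measurement likelihoods
    unnorm = [predicted[j] * likelihoods[j] for j in range(m)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

probs = [0.9, 0.1]  # hypothetical modes: "slow walk", "fast turn"
transition = [[0.95, 0.05], [0.10, 0.90]]
probs = imm_mode_update(probs, transition, likelihoods=[0.2, 1.5])
print(probs)
```

A measurement that fits the second mode much better shifts most of the probability mass to it within one step; how quickly this happens is governed by the transition matrix, which is exactly the kind of parameter that benefits from online adaptation.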

Read the full article.

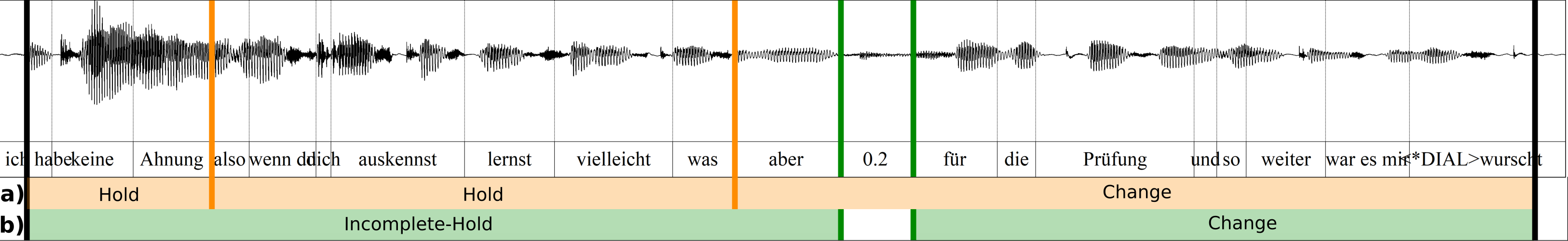

The investigation of conversational speech requires the close collaboration of linguists and speech technologists to develop new modeling techniques that allow the incorporation of various knowledge sources. This paper presents a progress report on the ongoing interdisciplinary project “Cross-layer language models for conversational speech” with a focus on the development of an annotation system for communicative functions. We discuss the requirements of such a system for the application in ASR as well as for the use in phonetic studies of talk-in-interaction, and illustrate emerging issues with the example of turn management.

Read the full article.

This work provides an initial investigation of the application of convolutional neural networks (CNNs) to fingerprint-based positioning using measured massive MIMO channels. When represented in appropriate domains, massive MIMO channels have a sparse structure that can be efficiently learned by CNNs for positioning purposes. We evaluate the positioning accuracy of state-of-the-art CNNs with channel fingerprints generated from a channel model with a rich clustered structure: the COST 2100 channel model. We find that moderately deep CNNs can achieve fractional-wavelength positioning accuracies, provided that a sufficiently representative data set is available for training.
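The sparsity argument can be checked on an idealized example: a single plane-wave path seen by a uniform linear array over OFDM subcarriers becomes one nonzero coefficient after a 2-D DFT into the angle–delay domain. The sketch assumes an on-grid path, so there is no leakage, and hypothetical sizes of 8 antennas and 16 subcarriers; real COST 2100 channels have many clustered paths that leak across bins.

```python
import cmath
import math

M, K = 8, 16  # antennas and subcarriers (assumed)

def single_path_channel(angle_bin, delay_bin):
    """Frequency-domain channel H[m][k] of one on-grid path."""
    return [[cmath.exp(2j * math.pi * m * angle_bin / M) * cmath.exp(-2j * math.pi * k * delay_bin / K)
             for k in range(K)] for m in range(M)]

def angle_delay_transform(h):
    """2-D DFT taking antenna index to angle bin and subcarrier index to delay bin."""
    return [[sum(h[m][k] * cmath.exp(-2j * math.pi * a * m / M) * cmath.exp(2j * math.pi * b * k / K)
                 for m in range(M) for k in range(K)) / (M * K)
             for b in range(K)] for a in range(M)]

g = angle_delay_transform(single_path_channel(angle_bin=3, delay_bin=5))
peak = max(((a, b) for a in range(M) for b in range(K)), key=lambda ab: abs(g[ab[0]][ab[1]]))
nonzero = sum(abs(g[a][b]) > 1e-9 for a in range(M) for b in range(K))
print(peak, nonzero)
```

The 128 channel coefficients collapse to a single significant entry, and the location of that entry encodes the path's angle and delay, which is the geometric information a CNN can learn to map to position.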

Read the full article.

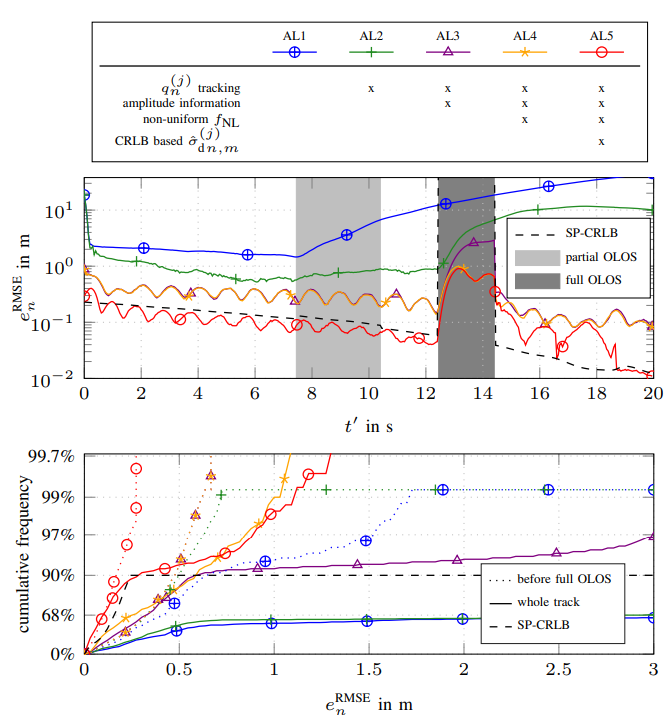

We present a message passing algorithm for localization and tracking in multipath-prone environments that implicitly considers obstructed line-of-sight situations. The proposed adaptive probabilistic data association algorithm infers the position of a mobile agent from multiple anchors by using the delays and amplitudes of the multipath components (MPCs) as well as their respective uncertainties. By employing a nonuniform clutter model, the algorithm can exploit the position information contained in the MPCs to support the estimation of the agent position without exact knowledge of the environment geometry. Our algorithm adapts online to both the time-varying signal-to-noise ratio and the line-of-sight (LOS) existence probability of each anchor. In a numerical analysis, we show that the algorithm operates reliably in environments characterized by strong multipath propagation, even if all anchors are temporarily obstructed at the same time.
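The core of probabilistic data association can be sketched as the computation of association probabilities for one anchor: a missed-detection hypothesis (index 0) plus one hypothesis per measured MPC delay, each weighted by how well it matches the predicted LOS delay relative to a possibly nonuniform clutter density. The Gaussian likelihood, the exponential clutter density, and all numbers are illustrative assumptions; the article's model also uses amplitudes and adapts the detection and LOS probabilities online.

```python
import math

def gaussian_pdf(x, mean, std):
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def association_probabilities(delays, predicted_delay, delay_std, p_detect, clutter_rate, clutter_pdf):
    """Return [P(LOS not detected), P(measurement 1 is LOS), ...] for one anchor."""
    weights = [1.0 - p_detect]
    for tau in delays:
        lik = gaussian_pdf(tau, predicted_delay, delay_std)
        # Likelihood ratio of "this measurement is the LOS" versus "clutter / other MPC"
        weights.append(p_detect * lik / (clutter_rate * clutter_pdf(tau)))
    total = sum(weights)
    return [w / total for w in weights]

def clutter_pdf(tau_ns):
    """Hypothetical nonuniform clutter density: exponential in delay (ns)."""
    return math.exp(-tau_ns / 50.0) / 50.0

beta = association_probabilities([31.0, 45.0, 70.0], predicted_delay=31.5, delay_std=0.5,
                                 p_detect=0.8, clutter_rate=3.0, clutter_pdf=clutter_pdf)
print([round(b, 3) for b in beta])
```

A nonuniform clutter density changes how surprising a measurement at a given delay is; in this toy model, regions where clutter is dense need a closer match before a measurement is treated as the LOS component.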

Read the full article.

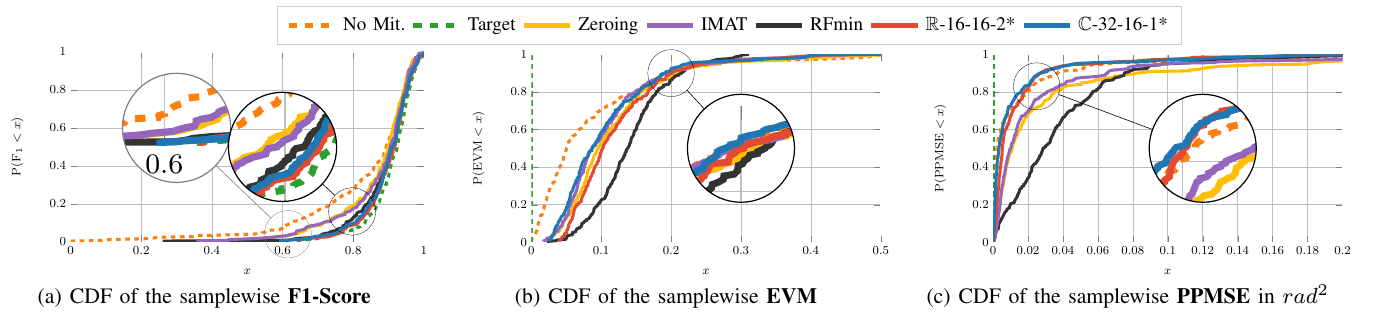

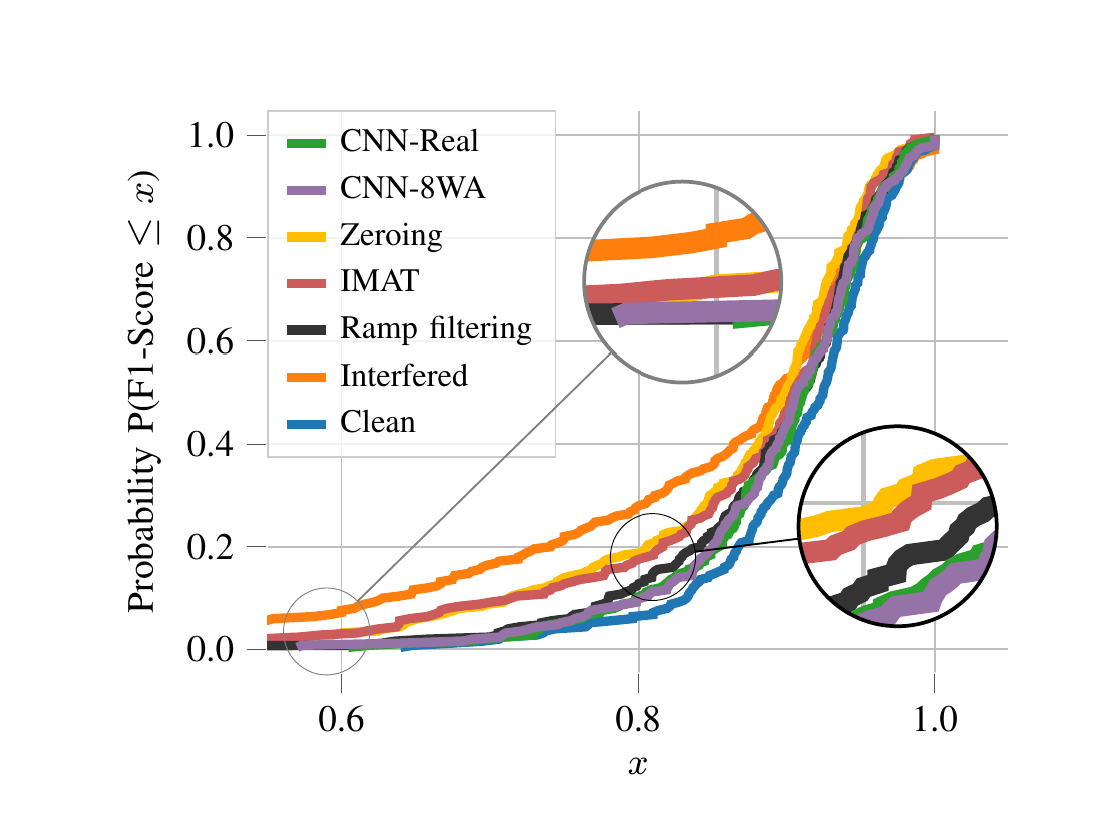

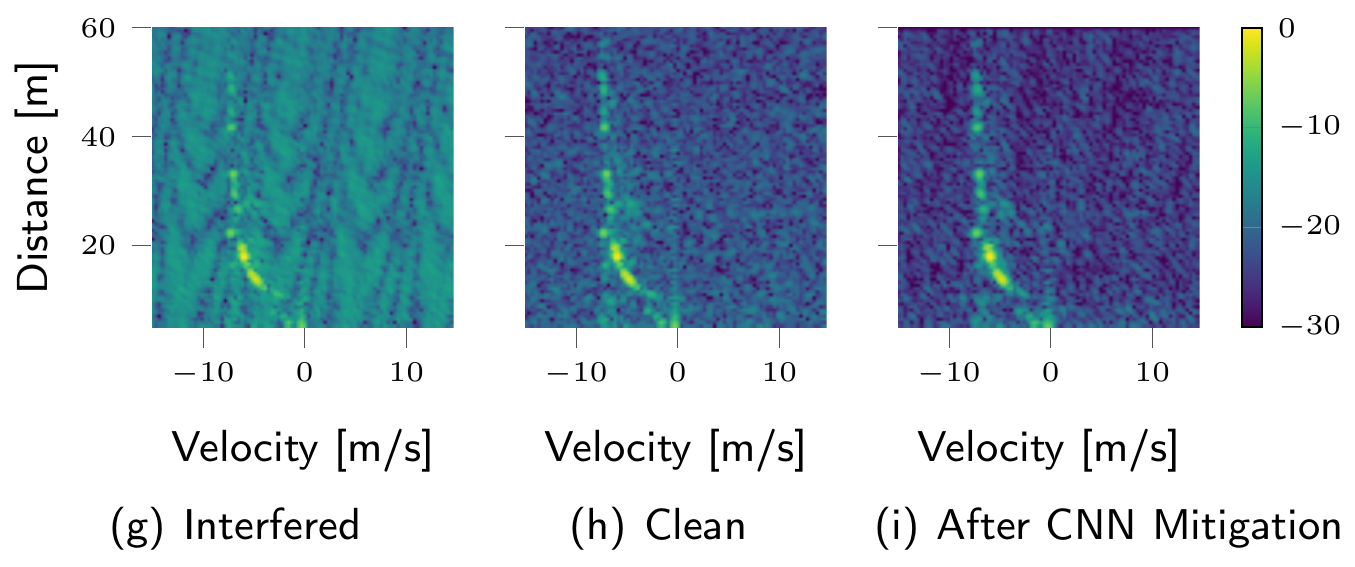

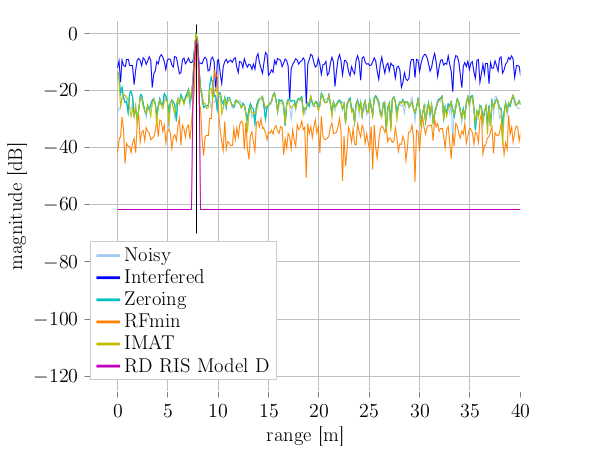

Autonomous driving depends heavily on capable sensors to perceive the environment and to deliver reliable information to the vehicles’ control systems. To increase robustness, a diversified set of sensors is used, including radar sensors. Radar is a vital source of sensory information, providing high-resolution range as well as velocity measurements. The increased use of radar sensors in road traffic introduces new challenges: as the so-far unregulated frequency band becomes increasingly crowded, radar sensors suffer from mutual interference. This interference must be mitigated to ensure high and consistent detection sensitivity.

Read the full article.

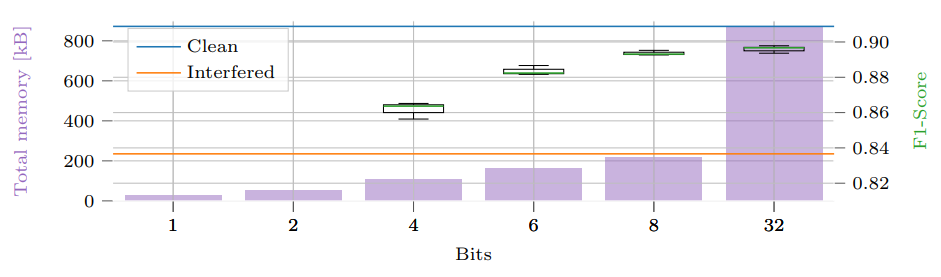

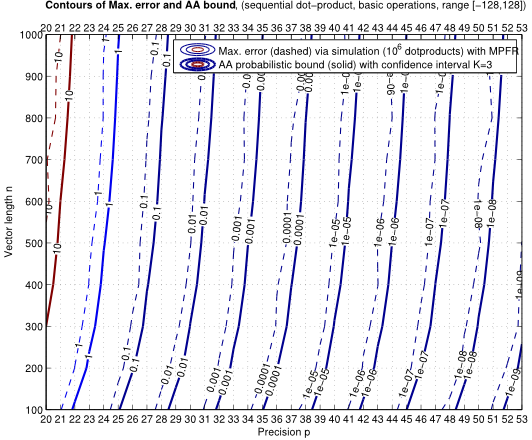

Radar sensors are crucial for environment perception of driver assistance systems as well as autonomous vehicles. Key performance factors are weather resistance and the possibility to directly measure velocity. With a rising number of radar sensors and the so-far unregulated automotive radar frequency band, mutual interference is inevitable and must be dealt with. Algorithms and models operating on radar data in early processing stages are required to run directly on specialized hardware, i.e., the radar sensor. This specialized hardware typically has strict resource constraints, i.e., low memory capacity and low computational power.

Read the full article.

Multipath-based simultaneous localization and mapping (SLAM) algorithms can detect and localize radio-reflective surfaces and jointly estimate the time-varying positions of mobile agents. A promising approach is to represent radio-reflective surfaces by so-called virtual anchors (VAs). In existing multipath-based SLAM algorithms, VAs are modeled and inferred for each physical anchor (PA) and each propagation path individually, even if multiple VAs represent the same physical surface. This limits the speed and accuracy of mapping and agent localization. In this paper, we introduce an improved statistical model and estimation method that enables data fusion for multipath-based SLAM by representing each surface with a single master virtual anchor (MVA). Our numerical simulation results show that the proposed multipath-based SLAM algorithm can significantly increase map convergence speed and reduce the mapping error compared to a state-of-the-art method.

Read the full article.

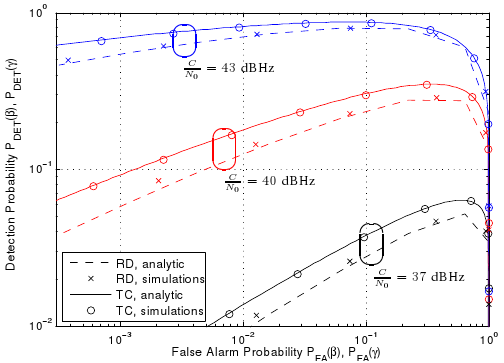

We consider the problem of detecting a single specular multipath component (SMC) embedded in spatially correlated noise and estimating its radio channel dispersion parameters from observations collected with a MIMO measurement setup. The detection threshold as a function of the false alarm probability is derived from a $\chi^2$ random field with two degrees of freedom defined over a 5-dimensional dispersion space, using the theoretical framework of the expected Euler characteristic of random excursion sets. We show that the resulting probability of false alarm is in excellent agreement with the relative frequency of false alarms observed when a maximum likelihood estimator and detector is used to acquire the 5-dimensional dispersion parameter vector of the SMC.
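
The threshold above accounts for the maximum of a $\chi^2$ random field over the whole 5-dimensional dispersion space. As a point of reference, the sketch below shows only the much simpler single-location case, which is our own illustration and not the paper's method: if the noise-only statistic at one fixed dispersion point is $\chi^2$ with two degrees of freedom, its survival function is $e^{-u/2}$, so the threshold is $u = -2\ln P_{\mathrm{FA}}$. A Monte Carlo loop checks this value. Because the detector searches over the full space, the field-level threshold for the same false alarm probability must be higher than this single-point value.

```python
import math
import random


def chi2_2dof_threshold(p_fa: float) -> float:
    """Threshold u with P(T > u) = p_fa for a chi-square statistic T with 2 DOF."""
    # The chi-square distribution with 2 DOF has survival function exp(-u / 2).
    return -2.0 * math.log(p_fa)


def empirical_false_alarm_rate(u: float, trials: int = 200_000, seed: int = 1) -> float:
    """Monte Carlo estimate of P(T > u) at one fixed point of the dispersion space."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Sum of squares of two independent standard normals is chi-square with 2 DOF.
        t = rng.gauss(0.0, 1.0) ** 2 + rng.gauss(0.0, 1.0) ** 2
        hits += t > u
    return hits / trials


u = chi2_2dof_threshold(0.01)
print(round(u, 2))  # 9.21
print(empirical_false_alarm_rate(u))  # close to 0.01
```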

Read the full article.

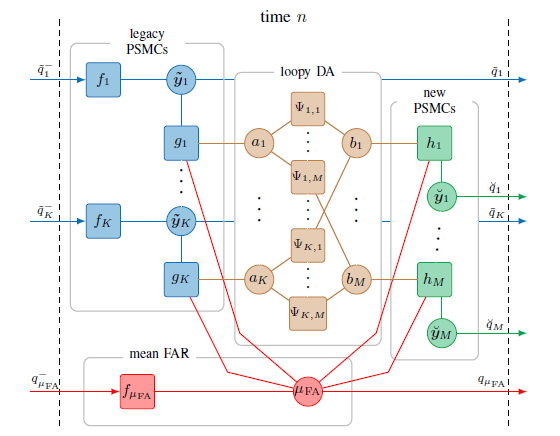

In this work, we present a belief propagation (BP) algorithm with probabilistic data association (DA) for the detection and tracking of specular multipath components (MPCs). In real dynamic measurement scenarios, the number of MPCs reflected from visible geometric features, the MPC dispersion parameters, and the number of false alarm contributions are unknown and time-varying. We develop a Bayesian model for the joint specular MPC detection and estimation problem and represent it by a factor graph, which enables the use of BP for efficient computation of the marginal posterior distributions. A parametric channel estimator is used to estimate a set of MPC parameters at each time step, which the BP-based algorithm then treats as noisy measurements. The algorithm performs probabilistic DA and jointly estimates the time-varying MPC parameters and the mean false alarm rate. Preliminary results using synthetic channel measurements demonstrate the excellent performance of the proposed algorithm in a realistic and very challenging scenario. Furthermore, it is demonstrated that the algorithm is able to cope with a high number of false alarms originating from the prior estimation stage.

Read the full article.

Title: Differentiable TAN Structure Learning for Bayesian Network Classifiers

Read the full article.

Radar sensors are crucial for environment perception of driver assistance systems as well as autonomous vehicles. Key performance factors are weather resistance and the possibility to directly measure velocity. With a rising number of radar sensors and the so-far unregulated automotive radar frequency band, mutual interference is inevitable and must be dealt with. Algorithms and models operating on radar data in early processing stages are required to run directly on specialized hardware, i.e., the radar sensor. This specialized hardware typically has strict resource constraints, i.e., low memory capacity and low computational power.

Read the full article.

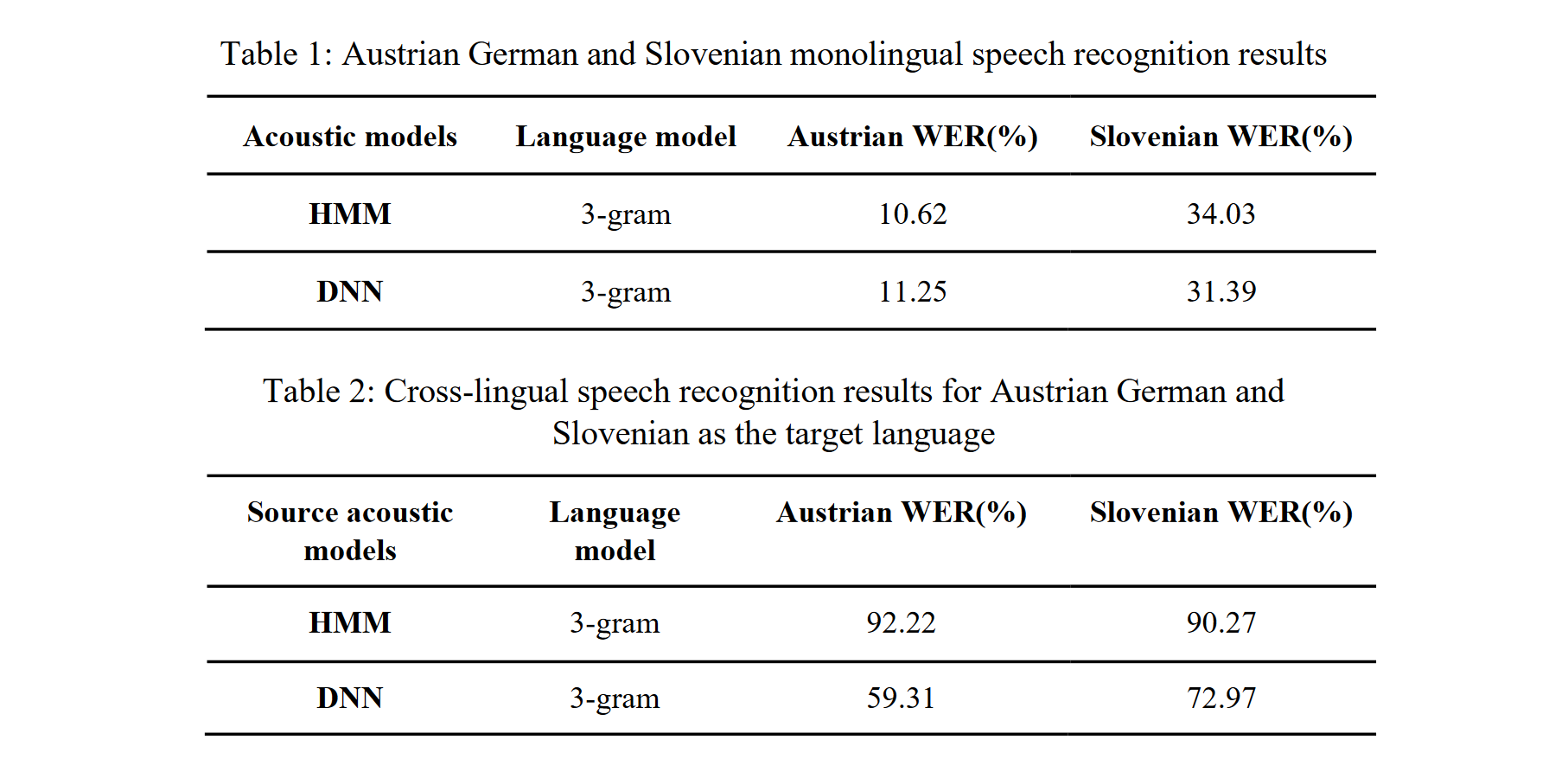

Methods of cross-lingual speech recognition have a high potential to overcome the scarcity of spoken-language resources for under-resourced languages. Not only can they be applied to build automatic speech recognition (ASR) systems for such languages, they can also be utilized to generate further spoken-language resources. This paper presents a cross-lingual ASR system based on data from two languages, Slovenian and Austrian German. Each was used as both source and target language for cross-lingual transfer (i.e., the acoustic models were trained on material from the source language, and recognition was tested on material from the target language). The cross-lingual mapping between the Slovenian phone set (40 phones) and the Austrian German phone set (33 phones) was carried out using expert knowledge about the acoustic-phonetic properties of the phones. For the experiments, we used data from two speech corpora: the Slovenian BNSI Broadcast News speech database and the Austrian German GRASS corpus. We trained HMM and DNN acoustic models for monolingual and cross-lingual speech recognition. The results (Tables 1 and 2) show that the DNN acoustic models outperformed the HMM models. The speech recognition results (Table 2) with Austrian German as the target language were clearly better than those with Slovenian as the target language. Possible explanations for this difference in performance are: 1) the higher number of phones in Slovenian, 2) discrepancies in speaking style between the databases (i.e., a mix of read and spontaneous speech in the Slovenian data vs. read speech only in the Austrian data), and 3) the mismatch in recording quality (i.e., GRASS was recorded under better conditions than BNSI). The full version of the paper can be found in The Phonetician.

Read the full article.

In this paper, we describe a simple and intuitive model for the effects of the human body of a user close to a receiver. We specifically investigate the UWB channel in the off-body condition, where the agent device is located directly on the human body and another device, functioning as an anchor, is located in the environment. Due to the high time resolution of UWB signals, the effect of the human body can be modeled as an extended object producing multiple scattered paths.

Read the full article.

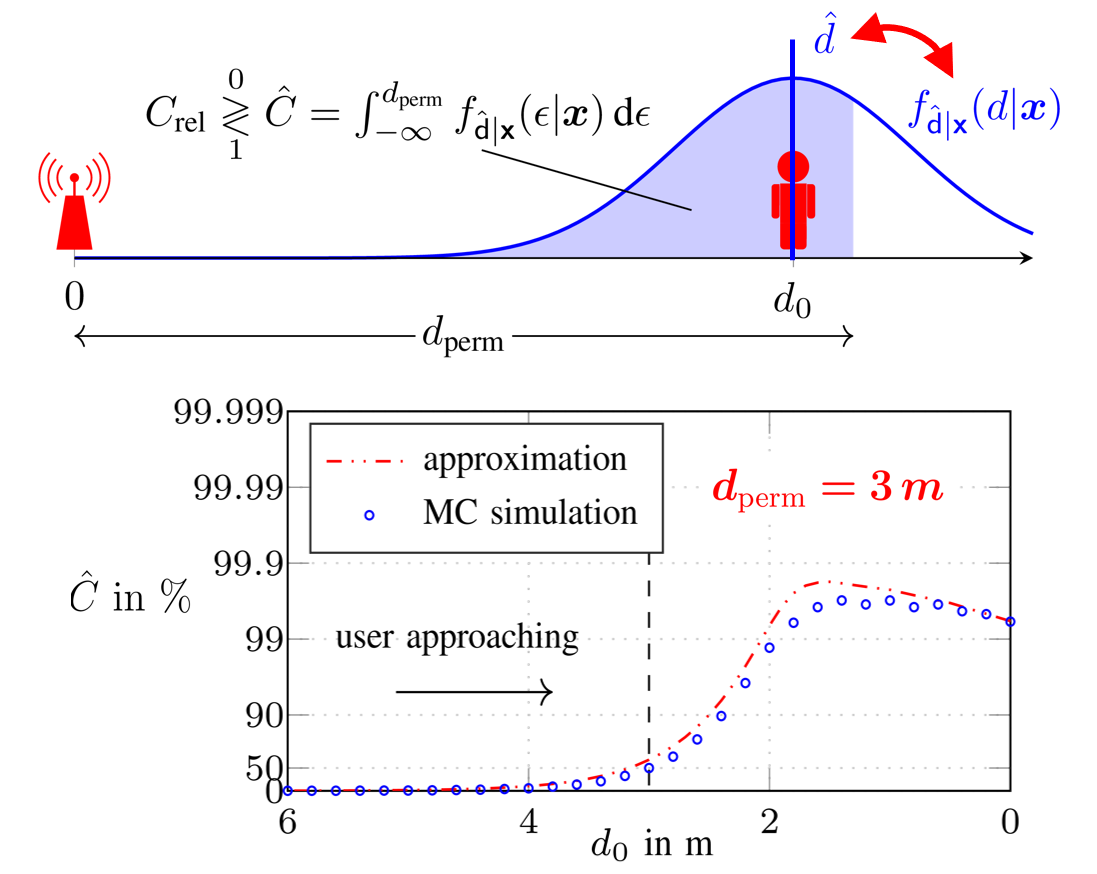

In this work, we investigate the reliability of time-of-arrival (TOA) based ranging using maximum-likelihood (ML) estimation in a dense multipath (DM) channel, in terms of both the conventional mean squared error (MSE) and confidence bounds. We show that in the presence of DM, the ML estimator distorts the signal mainlobe due to its whitening property, resulting in a bandwidth (BW) dependent bias even before the outlier-driven threshold region is reached.

Read the full article.

In this work, we propose a Bayesian agent network planning algorithm for information-criterion-based measurement selection for cooperative localization in static networks with anchors. This makes it possible to increase the accuracy of agent positioning while keeping the number of measurements between agents to a minimum. The proposed algorithm is based on minimizing the conditional differential entropy (CDE) of all agent states to determine the optimal set of measurements between agents. Such combinatorial optimization problems have factorial runtime and quickly become infeasible, even for a rather small number of agents. Therefore, the proposed algorithm performs a local optimization for each state. Experimental results demonstrate a performance improvement over a random measurement selection strategy, with a significantly lower position RMSE for a smaller number of measurements between agents.
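
For intuition only, the sketch below swaps in a simpler stand-in for the CDE-based planner: a linearized Gaussian model in which each range measurement between agents $i$ and $j$ adds a rank-one term to the joint information matrix, and a greedy loop repeatedly adds the measurement that lowers the joint Gaussian entropy the most. This is not the authors' algorithm. The function names, the isotropic prior, the noise variance, and the omission of anchor measurements are all assumptions made for brevity.

```python
import itertools

import numpy as np


def gaussian_entropy(info: np.ndarray) -> float:
    """Differential entropy (nats) of a Gaussian with information matrix `info`."""
    d = info.shape[0]
    _, logdet = np.linalg.slogdet(info)
    return 0.5 * (d * np.log(2.0 * np.pi * np.e) - logdet)


def greedy_select(pos: np.ndarray, prior_var: float, noise_var: float, budget: int):
    """Greedily pick agent-to-agent range measurements that most reduce the entropy.

    pos: (n, 2) linearization points of the agent positions (hypothetical input).
    """
    n = len(pos)
    info = np.eye(2 * n) / prior_var  # prior information of the stacked agent states
    candidates = list(itertools.combinations(range(n), 2))
    chosen = []
    for _ in range(min(budget, len(candidates))):
        best = None
        for i, j in candidates:
            u = (pos[i] - pos[j]) / np.linalg.norm(pos[i] - pos[j])
            g = np.zeros(2 * n)
            g[2 * i:2 * i + 2] = u   # gradient of ||p_i - p_j|| with respect to p_i
            g[2 * j:2 * j + 2] = -u  # ... and with respect to p_j
            cand = info + np.outer(g, g) / noise_var
            h = gaussian_entropy(cand)
            if best is None or h < best[0]:
                best = (h, (i, j), cand)
        _, pair, info = best
        chosen.append(pair)
        candidates.remove(pair)
    return chosen, gaussian_entropy(info)


pos = np.array([[0.0, 0.0], [4.0, 0.0], [0.0, 3.0], [4.0, 3.0]])
chosen, h = greedy_select(pos, prior_var=1.0, noise_var=0.01, budget=3)
print(chosen, h)
```

Greedy selection avoids enumerating all measurement subsets, which is what makes the exact combinatorial problem infeasible, at the price of losing optimality guarantees.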

Read the full article.

Title: CNNs for Interference Mitigation and Denoising in Automotive Radar Using Real-World Data

Read the full article.

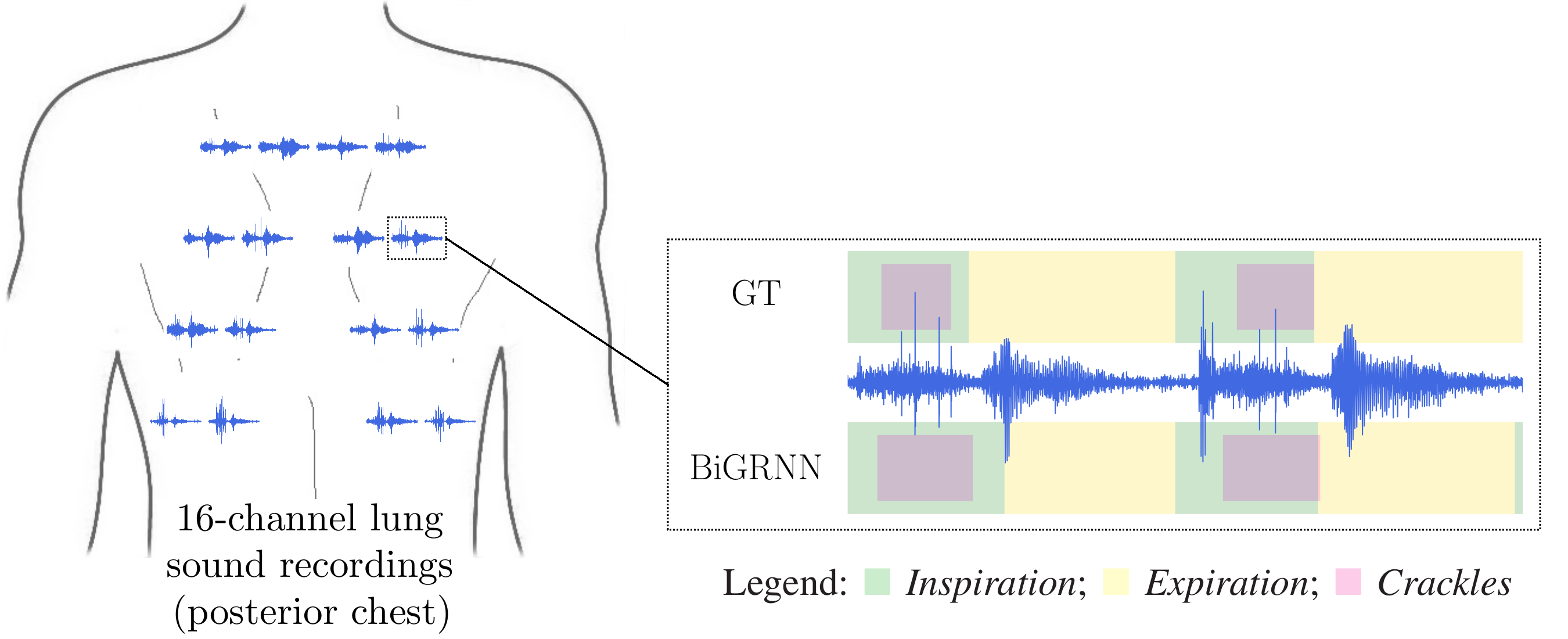

Computational methods for the analysis of lung sounds are beneficial for computer-supported diagnosis, digital storage, and monitoring in critical care. Pathological changes of the lung are tightly connected to characteristic sounds, enabling a fast and inexpensive diagnosis. Traditional auscultation with a stethoscope has several disadvantages: it is subjective, i.e., the assessment of the lung sounds depends on the experience of the physician; it cannot provide continuous monitoring; and it requires a trained expert. Furthermore, the characteristic sounds lie in the low frequency range, where human hearing has limited sensitivity and is susceptible to noise artifacts.

Read the full article.

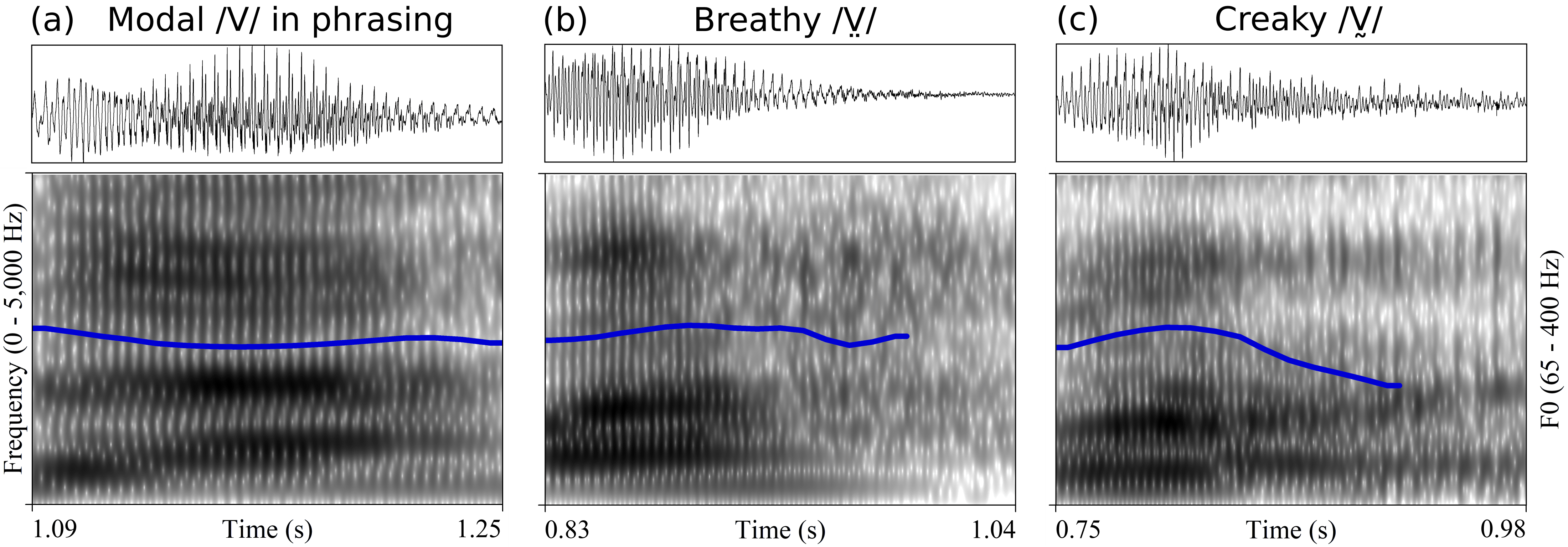

Chichimec (Otomanguean) has two tones, high and low, and a phonological three-way phonation contrast: modal /V/, breathy /V̤/ and creaky /V̰/. Tone and phonation type contrasts are used independently. This paper investigates the acoustic realization of modal, breathy and creaky vowels, the timing of phonation in non-modal vowels, and the production of tone in combination with different phonation types. The results of Cepstral Peak Prominence and three spectral tilt measures showed that phonation type contrasts are not distinguished by the same acoustic measures for women and men. In line with expectations for laryngeally complex languages, phonetic modal and non-modal phonation are sequenced in phonological breathy and creaky vowels. With respect to the timing pattern, however, the results show that non-modal phonation is not, as previously reported, mainly located in the middle of the vowel. Non-modal phonation is instead predominantly realized in the second half of phonological breathy and creaky vowels. Tone is distinguished in all three phonation types, and non-modal vowels do not exhibit distinct F0 ranges, except for creaky vowels produced by women, in which F0 declines in the creaky portion. The results of the acoustic analysis provide additional insights into phonological accounts of laryngeal complexity in Chichimec.

Read the full article.

Models play an essential role in the design process of cyber-physical systems. They form the basis for simulation and analysis and help in identifying design problems as early as possible. However, the construction of models that comprise physical and digital behavior is challenging. Consequently, there is considerable interest in learning the behavior of such systems using machine learning. However, the performance of the machine learning techniques depends crucially on sufficient and representative training data covering the behavior of the system adequately not only in standard situations, but also in edge cases that are often particularly important.

Read the full article.

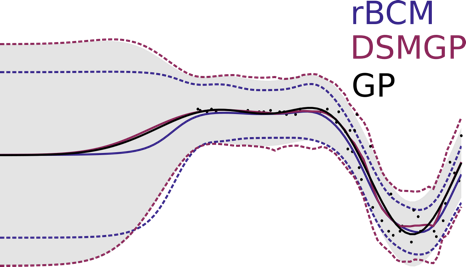

Gaussian Processes (GPs) are powerful non-parametric Bayesian regression models that allow exact posterior inference, but exhibit high computational and memory costs. In order to improve scalability of GPs, approximate posterior inference is frequently employed, where a prominent class of approximation techniques is based on local GP experts. However, the local-expert techniques proposed so far are either not well-principled, come with limited approximation guarantees, or lead to intractable models.
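
To make the scalability problem concrete, the sketch below computes the exact GP posterior that local-expert methods approximate. The Cholesky factorization of the $n \times n$ kernel matrix costs $O(n^3)$ time and $O(n^2)$ memory, which is the bottleneck that splitting the data among local experts is meant to relieve. The squared-exponential kernel, its hyperparameters, and the noise variance are illustrative assumptions, and local experts themselves are not shown.

```python
import numpy as np


def rbf(a: np.ndarray, b: np.ndarray, lengthscale: float = 1.0, variance: float = 1.0) -> np.ndarray:
    """Squared-exponential kernel matrix between 1-D input vectors a and b."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * d2 / lengthscale**2)


def gp_posterior(x, y, x_star, noise_var=1e-2):
    """Exact GP posterior mean and variance of the latent function at x_star."""
    K = rbf(x, x) + noise_var * np.eye(len(x))
    L = np.linalg.cholesky(K)  # O(n^3) time, O(n^2) memory: the scalability bottleneck
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    K_s = rbf(x, x_star)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(rbf(x_star, x_star)) - np.sum(v**2, axis=0)
    return mean, var


x = np.linspace(0.0, 5.0, 50)
mean, var = gp_posterior(x, np.sin(x), np.array([2.5]))
print(mean, var)
```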

Read the full article.

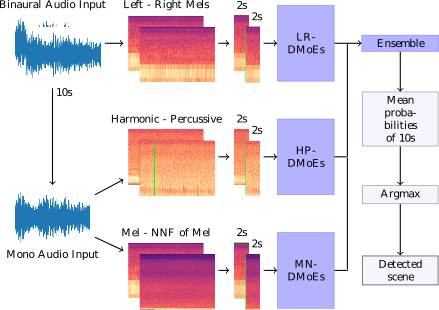

We propose a heterogeneous system of Deep Mixture of Experts (DMoEs) models using different Convolutional Neural Networks (CNNs) for acoustic scene classification (ASC). Each DMoEs module is a mixture of different parallel CNN structures weighted by a gating network. All CNNs use the same input data. The CNN architectures play the role of experts extracting a variety of features. The experts are pre-trained and kept fixed (frozen) in the DMoEs model. The DMoEs is post-trained by optimizing the weights of the gating network, which estimates the contribution of each expert to the mixture. To enhance performance, we use an ensemble of three DMoEs modules, each with a different pair of inputs and individual CNN models. The input pairs are spectrogram combinations of binaural audio and mono audio as well as their pre-processed variations using harmonic-percussive source separation (HPSS) and nearest neighbor filters (NNFs). The proposed system achieves a classification accuracy of 72.1%, improving on the baseline by around 12% (absolute) on the development data of DCASE 2018 challenge task 1A.
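
The mixing step of a single DMoEs module can be sketched as follows: a softmax gating network produces per-example weights over the frozen experts, and the module output is the weighted sum of the experts' class posteriors. The gate's input features, its single linear layer, and the array shapes are assumptions for illustration; the paper's gating network may be structured differently. During post-training, only `gate_W` and `gate_b` would be updated.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def dmoe_forward(gate_input, expert_probs, gate_W, gate_b):
    """Mix frozen expert outputs with a softmax gating network.

    gate_input:     (batch, d) features fed to the gating network
    expert_probs:   (n_experts, batch, n_classes) class posteriors of the frozen CNN experts
    gate_W, gate_b: the only trainable parameters during post-training
    """
    g = softmax(gate_input @ gate_W + gate_b)  # (batch, n_experts) mixture weights
    return np.einsum("be,ebc->bc", g, expert_probs)


rng = np.random.default_rng(0)
experts = softmax(rng.normal(size=(3, 4, 10)))  # 3 experts, 4 clips, 10 scene classes
out = dmoe_forward(rng.normal(size=(4, 8)), experts, rng.normal(size=(8, 3)), np.zeros(3))
print(out.argmax(axis=1))
```

Because the gate weights sum to one and each expert outputs a distribution, the mixture is itself a valid class distribution for every clip.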

Read the full article.

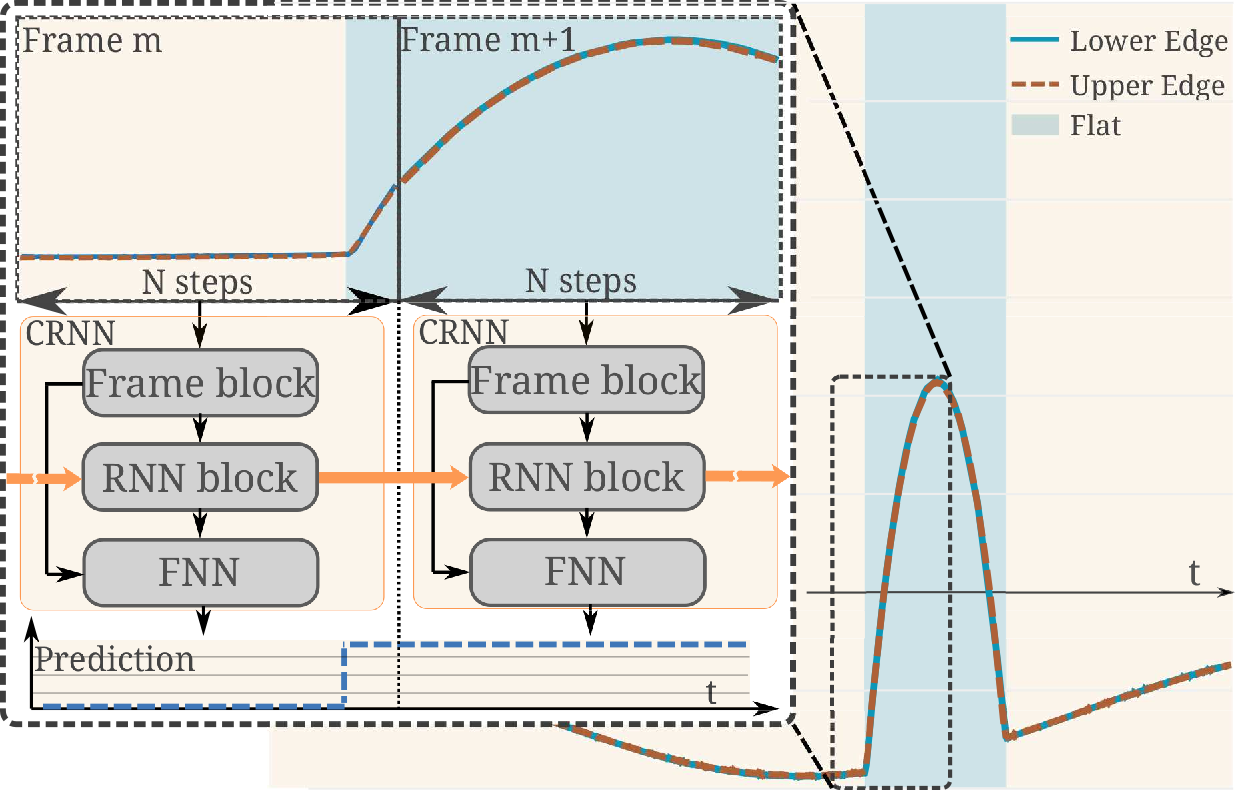

Efficient real-time segmentation and classification of time-series data is key in many applications, including sound and measurement analysis. We propose an efficient convolutional recurrent neural network (CRNN) architecture that delivers improved segmentation performance at lower computational cost than plain RNN methods. We develop a CNN architecture using dilated DenseNet-like kernels and implement it within the proposed CRNN architecture. For the task of online wafer-edge measurement analysis, we compare our proposed methods to standard RNN methods, such as Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRUs). We focus on small models with low computational complexity in order to run our model on an embedded device. We show that frame-based methods generally perform better than RNNs in our segmentation task and that our proposed recurrent dilated DenseNet achieves a substantial improvement of over 1.1% in F1-score compared to other frame-based methods.
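
A rough picture of a dilated DenseNet-like block is sketched below: each layer applies a causal dilated 1-D convolution to the concatenation of all earlier feature maps, so the receptive field grows with the dilations while every layer still sees the raw input. The kernel size of 2, dilations 1, 2, 4, growth rate 3, and ReLU are assumptions, and the recurrent part of the CRNN is omitted. With these settings, the receptive field is $1 + \sum_i (K-1)\,d_i = 8$ frames.

```python
import numpy as np


def dilated_conv1d(x: np.ndarray, w: np.ndarray, dilation: int) -> np.ndarray:
    """Causal dilated 1-D convolution. x: (T, C_in), w: (K, C_in, C_out) -> (T, C_out)."""
    K = w.shape[0]
    pad = (K - 1) * dilation
    xp = np.vstack([np.zeros((pad, x.shape[1])), x])  # left padding keeps it causal
    out = np.zeros((x.shape[0], w.shape[2]))
    for k in range(K):
        # Tap k looks back (K - 1 - k) * dilation frames.
        out += xp[k * dilation:k * dilation + x.shape[0]] @ w[k]
    return out


def dense_dilated_block(x, weights, dilations):
    """DenseNet-style block: each layer sees the concatenation of all earlier outputs."""
    feats = x
    for w, d in zip(weights, dilations):
        y = np.maximum(dilated_conv1d(feats, w, d), 0.0)  # ReLU
        feats = np.concatenate([feats, y], axis=1)
    return feats


rng = np.random.default_rng(0)
growth, dilations = 3, [1, 2, 4]
weights = [rng.normal(size=(2, 2 + growth * i, growth)) for i in range(len(dilations))]
x = rng.normal(size=(20, 2))
out = dense_dilated_block(x, weights, dilations)
print(out.shape)  # (20, 11)
```

Causal padding means each output frame depends only on current and past frames, which is what an online, embedded segmentation task needs.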

Read the full article.

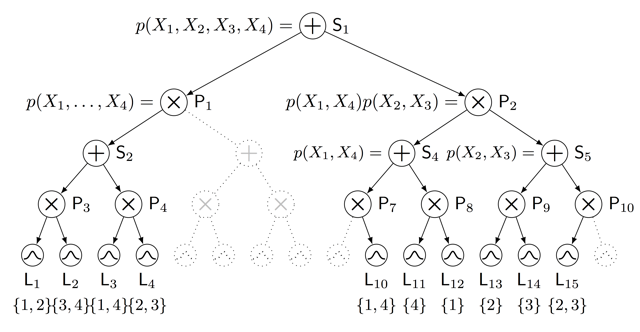

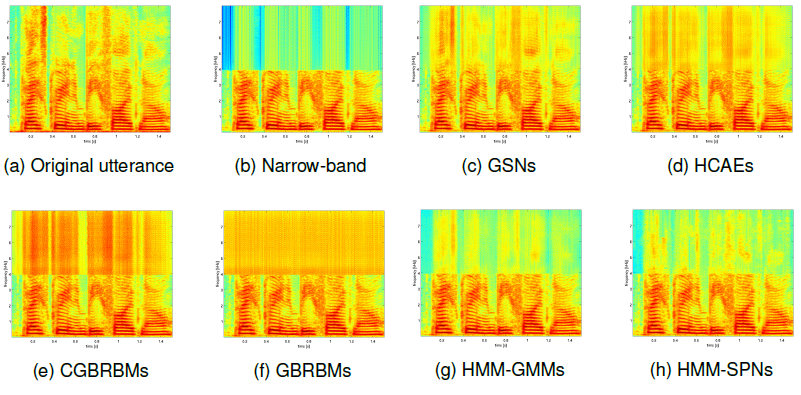

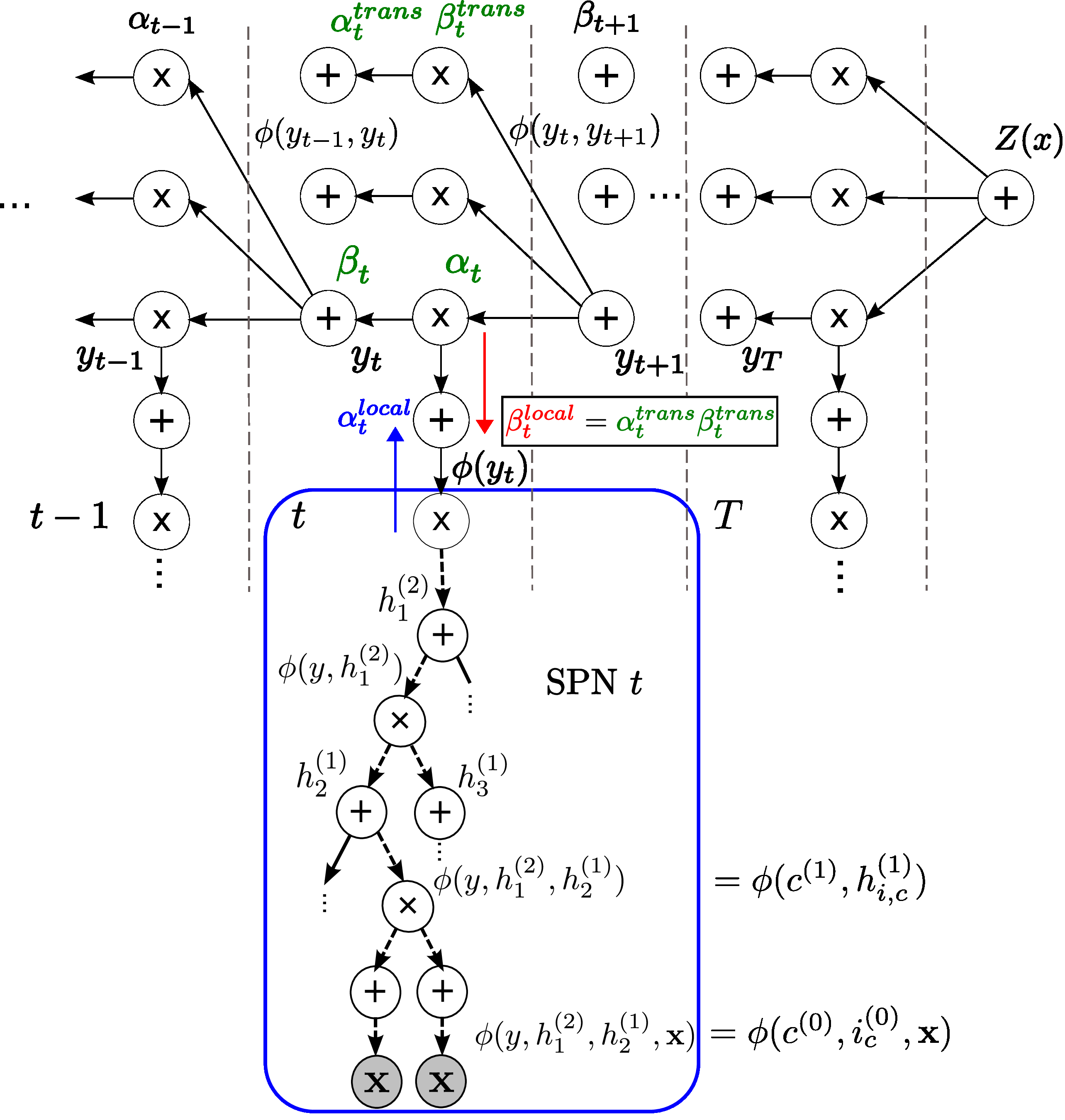

Sum-product networks (SPNs) are flexible density estimators and have received significant attention, due to their attractive inference properties. While parameter learning in SPNs is well developed, structure learning leaves something to be desired: Even though there is a plethora of SPN structure learners, most of them are somewhat ad-hoc, and based on intuition rather than a clear learning principle.

Read the full article.

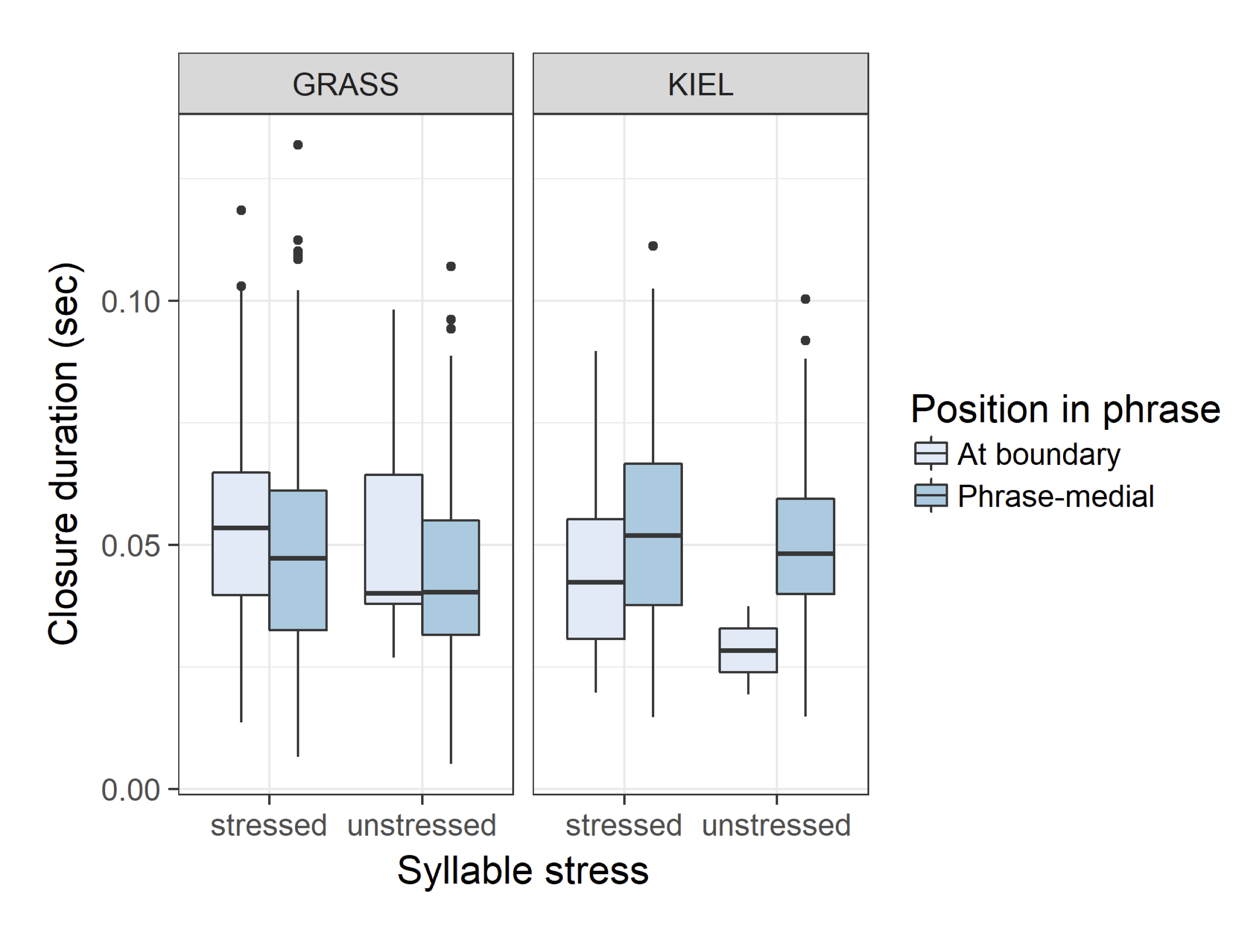

This study investigates the acoustic cues used to mark prosodic boundaries in two varieties of German, with a specific focus on variation in the production of fortis and lenis plosives. Based on prosodic-boundary-adjacent and non-boundary-adjacent plosives from GRASS (Austrian German) and the Kiel Corpus of Read Speech (Northern German), we found that closure and burst duration features, as well as the duration of a preceding adjacent segment, vary consistently with the presence or absence of a prosodic boundary, but that the relative weights of these features differ between the two varieties studied. Whereas stress marking in plosives is driven more by burst duration in the Kiel Corpus data, it is driven more by closure duration in the GRASS data. This study suggests that boundary detection tools require variety-specific training materials, or else information from comparative studies such as the current work, in order to function optimally in specific varieties or dialects.

Read the full article.

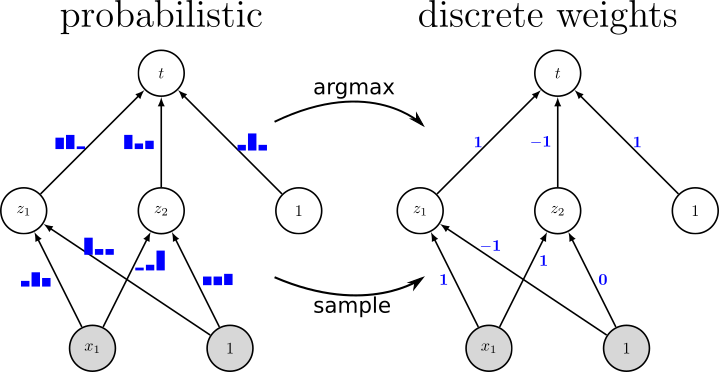

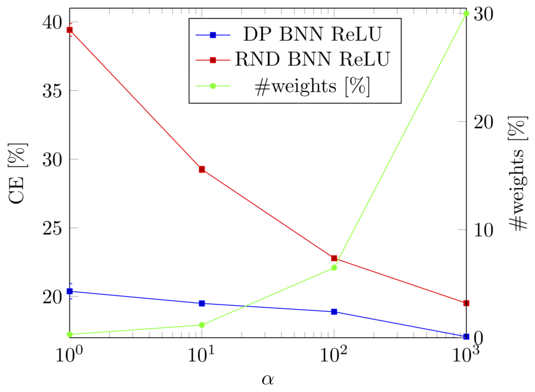

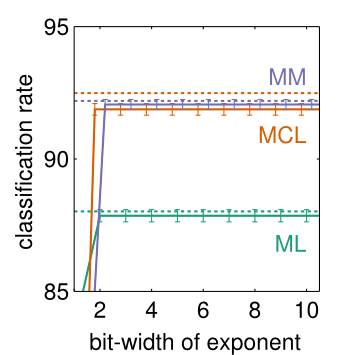

Since resource-constrained devices hardly benefit from the trend towards ever-increasing neural network (NN) structures, there is growing interest in designing more hardware-friendly NNs. In this paper, we consider the training of NNs with discrete-valued weights and sign activation functions that can be implemented more efficiently in terms of inference speed, memory requirements, and power consumption. We build on the framework of probabilistic forward propagations using the local reparameterization trick, where instead of training a single set of NN weights, we train a distribution over these weights. Using this approach, we can perform gradient-based learning by optimizing the continuous parameters of the distribution over the discrete weights, while at the same time backpropagating through the sign activation. In our experiments, we investigate the influence of the number of weights on the classification performance on several benchmark datasets, and we show that our method achieves state-of-the-art performance.
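
The core of a probabilistic forward pass can be sketched for a single layer: given a distribution over discrete weights, the pre-activation is approximated as Gaussian (local reparameterization), so the probability that the sign activation outputs $+1$ is $\Phi(\mu/\sigma)$, a smooth function of the continuous distribution parameters. This sketch assumes binary weights in $\{-1,+1\}$ with a logistic parameterization; the paper considers discrete-valued weights more generally, and its exact parameterization may differ.

```python
import math

import numpy as np

_erf = np.vectorize(math.erf)


def normal_cdf(z):
    return 0.5 * (1.0 + _erf(np.asarray(z) / math.sqrt(2.0)))


def prob_sign_layer(x: np.ndarray, theta: np.ndarray) -> np.ndarray:
    """P(sign(a_j) = +1) for a_j = sum_i w_ij x_i with independent binary weights.

    x:     (d_in,) layer input
    theta: (d_in, d_out) logits of p(w_ij = +1); w_ij takes values in {-1, +1}
    """
    p = 1.0 / (1.0 + np.exp(-theta))
    w_mean = 2.0 * p - 1.0        # E[w]
    w_var = 1.0 - w_mean**2       # Var[w] = E[w^2] - E[w]^2 with w^2 = 1
    mu = x @ w_mean               # mean of the pre-activation
    var = (x**2) @ w_var + 1e-8   # variance (independent weights); jitter avoids zero
    # Gaussian approximation of a_j, so P(a_j > 0) = Phi(mu / sigma).
    return normal_cdf(mu / np.sqrt(var))


print(prob_sign_layer(np.array([0.5, -1.0, 2.0]), np.zeros((3, 4))))  # all 0.5
```

To feed a next layer, the sign output has mean $2P-1$ and variance $1-(2P-1)^2$, which can be propagated in the same way.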

Read the full article.

Automotive radar is used to perceive the vehicle’s environment due to its capability to measure the distance, velocity, and angle of surrounding objects with high resolution. With an increasing number of radar sensors deployed on the streets and because the automotive radar frequency band is not regulated, mutual interference must be dealt with in order to retain a sensitive detection capability.

Read the full article.

The marginals and the partition function can be computed exactly in a straightforward manner for tree-structured models, but efficient approximation methods are required if the graphical model contains loops. One such method is Belief Propagation (BP), which exploits the structure of probabilistic graphical models to approximate the marginal distributions and the partition function.
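
The tree case can be illustrated with sum-product message passing on a chain, the simplest tree: one forward and one backward sweep give the exact marginals and partition function, which the sketch checks against brute-force enumeration. On loopy graphs, the same message updates are iterated (loopy BP) and only yield approximations. The chain length, state count, and random potentials are illustrative assumptions.

```python
import itertools

import numpy as np


def chain_bp(unary, pairwise):
    """Sum-product BP on a chain x_1 - x_2 - ... - x_n (exact, since a chain is a tree).

    unary:    list of n arrays of shape (K,), node potentials phi_i(x_i)
    pairwise: list of n-1 arrays of shape (K, K), psi_i[a, b] = psi(x_i = a, x_{i+1} = b)
    """
    n = len(unary)
    fwd = [np.ones(len(u)) for u in unary]  # messages arriving from the left
    bwd = [np.ones(len(u)) for u in unary]  # messages arriving from the right
    for i in range(1, n):
        fwd[i] = pairwise[i - 1].T @ (unary[i - 1] * fwd[i - 1])
    for i in range(n - 2, -1, -1):
        bwd[i] = pairwise[i] @ (unary[i + 1] * bwd[i + 1])
    beliefs = [unary[i] * fwd[i] * bwd[i] for i in range(n)]
    Z = beliefs[0].sum()  # on a tree, every unnormalized belief sums to Z
    return [b / b.sum() for b in beliefs], Z


def brute_force(unary, pairwise):
    """Enumerate all joint states to get the exact first marginal and Z (for checking)."""
    n, K = len(unary), len(unary[0])
    Z, m0 = 0.0, np.zeros(K)
    for xs in itertools.product(range(K), repeat=n):
        p = np.prod([unary[i][xs[i]] for i in range(n)])
        p *= np.prod([pairwise[i][xs[i], xs[i + 1]] for i in range(n - 1)])
        Z += p
        m0[xs[0]] += p
    return m0 / Z, Z


rng = np.random.default_rng(0)
unary = [rng.random(3) + 0.1 for _ in range(4)]
pairwise = [rng.random((3, 3)) + 0.1 for _ in range(3)]
marginals, Z = chain_bp(unary, pairwise)
```

BP needs $O(nK^2)$ operations here, whereas brute force needs $O(K^n)$.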

Read the full article.

Simultaneous localization and mapping (SLAM) is important in many fields, including robotics, autonomous driving, location-aware communication, and robust indoor localization. Specifically, robustness, i.e., achieving a low probability of localization outage, is still challenging in environments with strong multipath propagation. Therefore, new systems designed for multipath channels take advantage of multipath propagation by exploiting multipath components (MPCs) for localization [5], [6], [10], exploit cooperation among agents, and/or employ signal processing that is robust against multipath propagation and clutter measurements in general.

Read the full article.

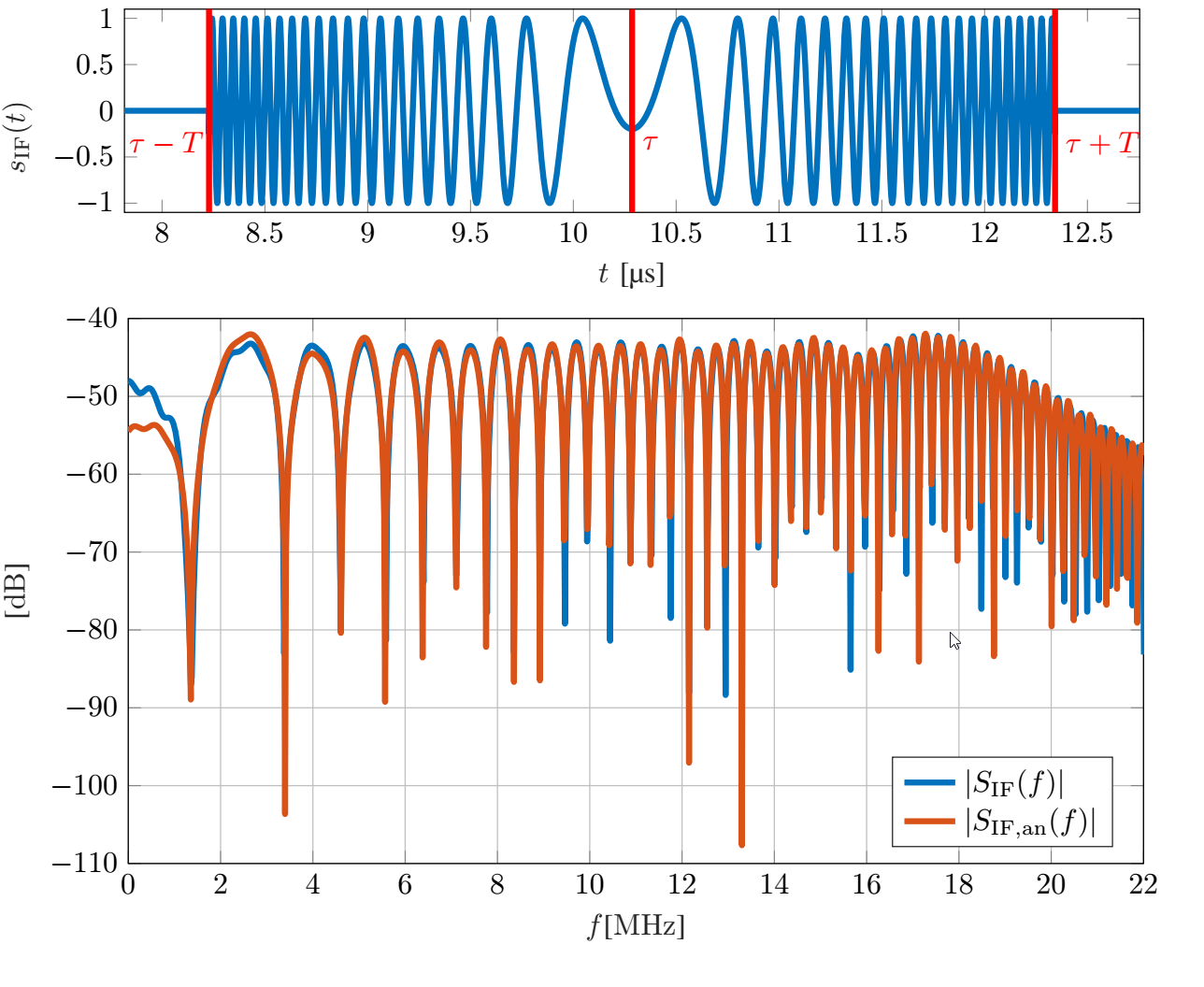

Radar sensors are increasingly utilized in today’s cars. This inevitably leads to increased mutual sensor interference and thus to performance degradation, potentially resulting in major safety risks. An accurate understanding of the signal impairments caused by interference helps in devising signal processing schemes to combat this degradation. For the FMCW radars prevalent in automotive applications, it has been shown that so-called non-coherent interference occurs frequently and results in an increase of the noise floor. In this work, we investigate the impact of interference analytically by focusing on its detailed description. Among other results, we show that the spectrum of the typical interference signal has a linear phase and a magnitude that fluctuates strongly with the phase parameters of the time-domain interference signal. The analytical results are verified by simulation, highlighting the dependence on the specific phase terms that cause strong deviations from spectral whiteness.

Read the full article.

We extend feed-forward neural networks with a Dirichlet process prior over the weight distribution. This enforces sharing of the network weights, which can reduce the overall number of parameters drastically. We alternately sample from the posterior of the weights and the posterior of the assignments of network connections to the weights. This results in weight sharing that is adapted to the given data. To make the procedure feasible, we present several techniques to reduce the computational burden. Experiments show that our approach mostly outperforms models with random weight sharing. Our model is capable of reducing the memory footprint substantially while maintaining good performance compared to neural networks without weight sharing.
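
Why a Dirichlet process prior enforces sharing can be seen from the Chinese restaurant process (CRP) it induces over assignments: each new connection either joins an existing shared weight with probability proportional to how many connections already use it, or opens a new one with probability proportional to the concentration $\alpha$. The expected number of distinct weights grows only as roughly $\alpha \log(1 + n/\alpha)$. The sketch draws from this prior only; the paper's alternating posterior sampling of weights and assignments is not shown, and the function name and parameters are hypothetical.

```python
import random


def crp_assign(n_connections: int, alpha: float, seed: int = 0):
    """Draw a weight-sharing pattern from a Chinese restaurant process CRP(alpha).

    Connection i joins existing shared weight k with probability counts[k] / (i + alpha)
    and opens a new shared weight with probability alpha / (i + alpha).
    """
    rng = random.Random(seed)
    counts = []      # counts[k]: number of connections that share weight k
    assignment = []  # assignment[i]: index of the shared weight used by connection i
    for i in range(n_connections):
        r = rng.uniform(0.0, i + alpha)
        acc = 0.0
        for k, c in enumerate(counts):
            acc += c
            if r < acc:
                assignment.append(k)
                counts[k] += 1
                break
        else:  # r fell into the alpha-sized slot: open a new shared weight
            assignment.append(len(counts))
            counts.append(1)
    return assignment, counts


assignment, counts = crp_assign(1000, alpha=2.0)
print(len(counts))  # a small number of distinct weights shared by 1000 connections
```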

Read the full article.

Homophones pose serious issues for automatic speech recognition (ASR) because they have the same pronunciation but different meanings or spellings. Homophone disambiguation is usually done within a stochastic language model or by analyzing the homophonous word’s context. Whereas these methods achieve good results in read speech, they fail in conversational, spontaneous speech, where utterances are often short, contain disfluencies, and/or are syntactically incomplete. Phonetic studies have shown that words that are homophonous in read speech often differ in their phonetic detail in spontaneous speech. Whereas humans use phonetic detail to disambiguate homophones, this linguistic information is usually not explicitly incorporated into ASR systems.

Read the full article.

Highly accurate indoor positioning is still a hard problem due to interference caused by multipath propagation and the resulting high complexity of the infrastructure. We focus on the possibility of exploiting information contained in specular multipath components (SMCs) to increase the positioning accuracy of the system and to reduce the required infrastructure, using a priori information in the form of a floor plan. The system utilizes a single anchor equipped with an antenna array and wideband signals that allow the SMCs to be separated. We derive a closed form of the Cramér-Rao lower bound for array-based multipath-assisted positioning and examine the beneficial effect of spatial aliasing of antenna arrays on the achievable angular resolution and, as a direct consequence, on the positioning accuracy. It is shown that ambiguities arising from the aliasing can be resolved by exploiting the information contained in SMCs. The theoretical results are validated by simulations.
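
A common way to turn a floor plan into SMC predictions is the virtual-anchor (VA) construction: mirroring the anchor across a wall gives a VA whose distance to the agent equals the length of the first-order reflected path. The sketch below computes such path delays for a 2-D floor plan. It is a simplified illustration under assumed names: it ignores wall visibility, higher-order reflections, and the angle-of-arrival information an array would add.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def mirror_point(p: np.ndarray, a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Mirror point p across the infinite line through wall endpoints a and b."""
    d = (b - a) / np.linalg.norm(b - a)
    foot = a + np.dot(p - a, d) * d  # orthogonal projection of p onto the wall line
    return 2.0 * foot - p


def mpc_delays(anchor, agent, walls):
    """LOS delay plus one first-order reflection delay per wall (visibility not checked)."""
    delays = [np.linalg.norm(agent - anchor) / C]
    for a, b in walls:
        virtual_anchor = mirror_point(anchor, a, b)
        delays.append(np.linalg.norm(agent - virtual_anchor) / C)
    return delays


anchor, agent = np.array([0.0, 1.0]), np.array([2.0, 1.0])
wall = (np.array([0.0, 0.0]), np.array([5.0, 0.0]))  # hypothetical wall along y = 0
print([d * C for d in mpc_delays(anchor, agent, [wall])])  # path lengths 2.0 and sqrt(8)
```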

Read the full article.

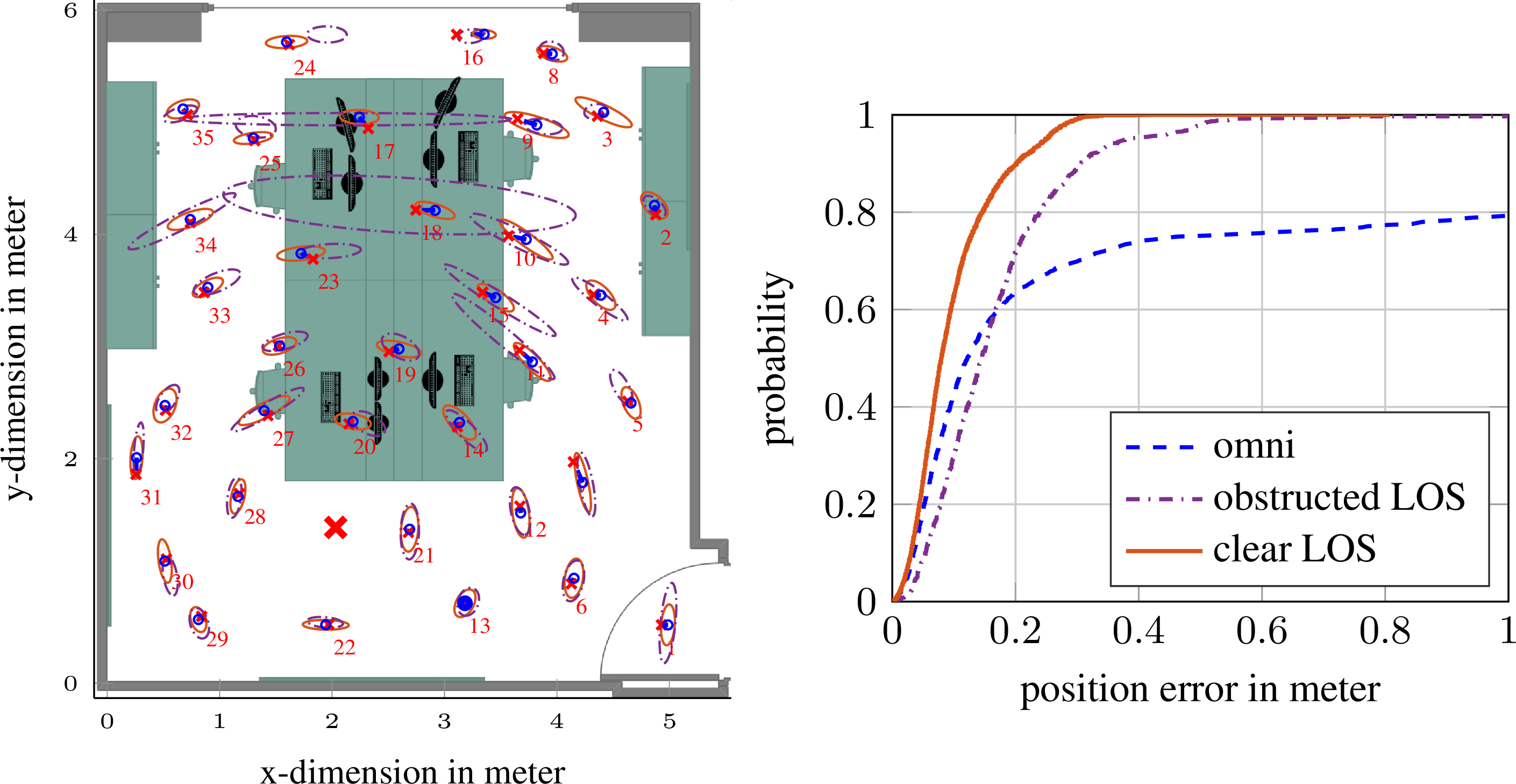

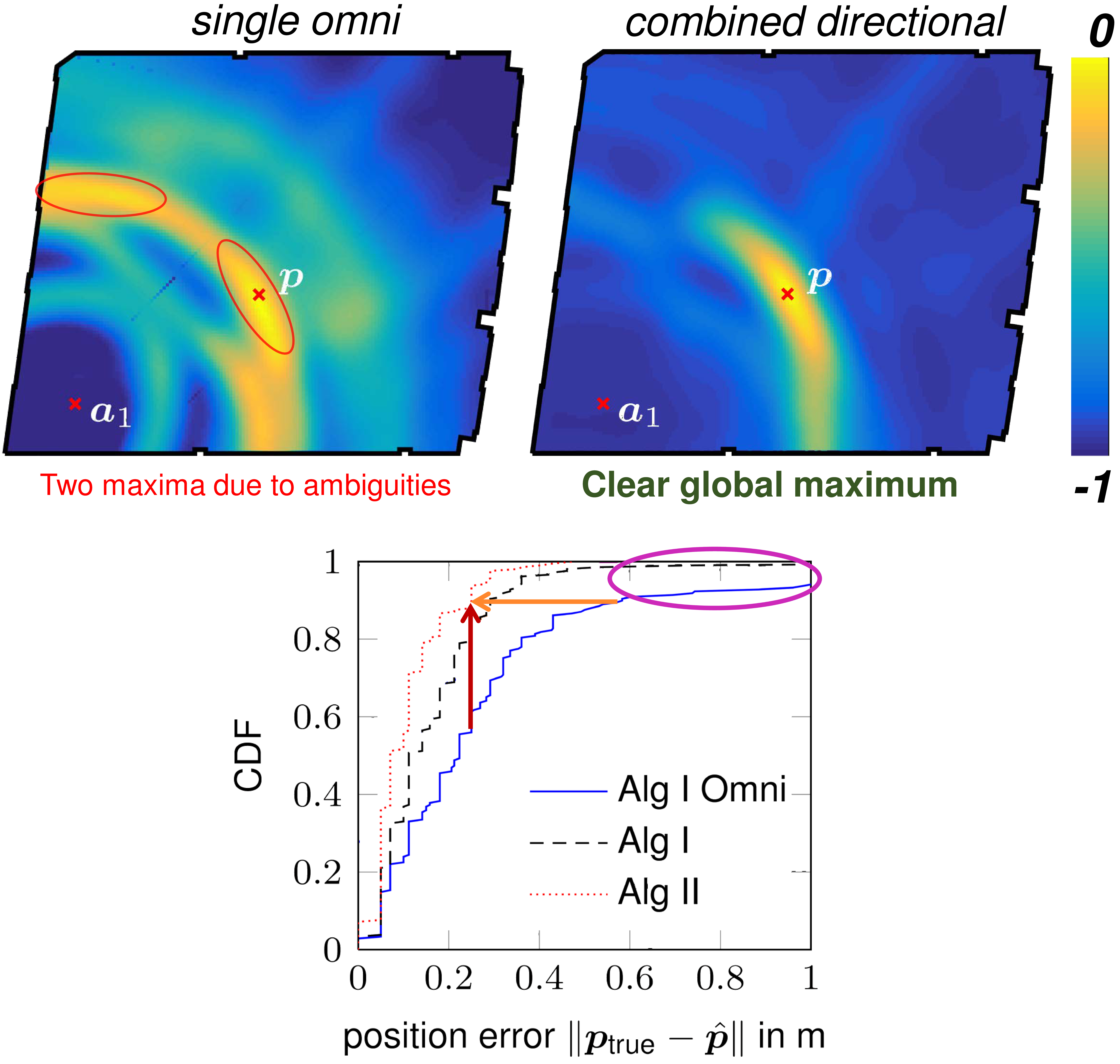

Setting up indoor localization systems is often excessively time-consuming and labor-intensive because of the large number of anchors that must be carefully deployed or the burdensome collection of fingerprints. In this work, we present SALMA, a novel low-cost ultra-wideband-based indoor localization system that makes use of only one anchor with minimized calibration and training efforts.

The system builds on insights gained in our previous work, exploiting multipath reflections of radio signals to enhance positioning performance. To this end, only a crude floor plan is needed, which enables SALMA to accurately determine the position of a mobile tag using a single anchor, thereby minimizing infrastructure costs as well as setup time.

We implement SALMA on off-the-shelf UWB devices based on the Decawave DW1000 transceiver and show that, by making use of multiple directional antennas, SALMA can also resolve ambiguities due to overlapping multipath components.

An experimental evaluation in an office environment with clear line-of-sight (LOS) has shown that 90% of the position estimates obtained using SALMA exhibit less than 20 cm error, with a median below 8 cm. We further study the performance of SALMA in the presence of obstructed line-of-sight (OLOS) conditions, moving objects and furniture, as well as in highly dynamic environments with several people moving around, showing that the system can sustain decimeter-level accuracy with a worst-case average error below 34 cm.

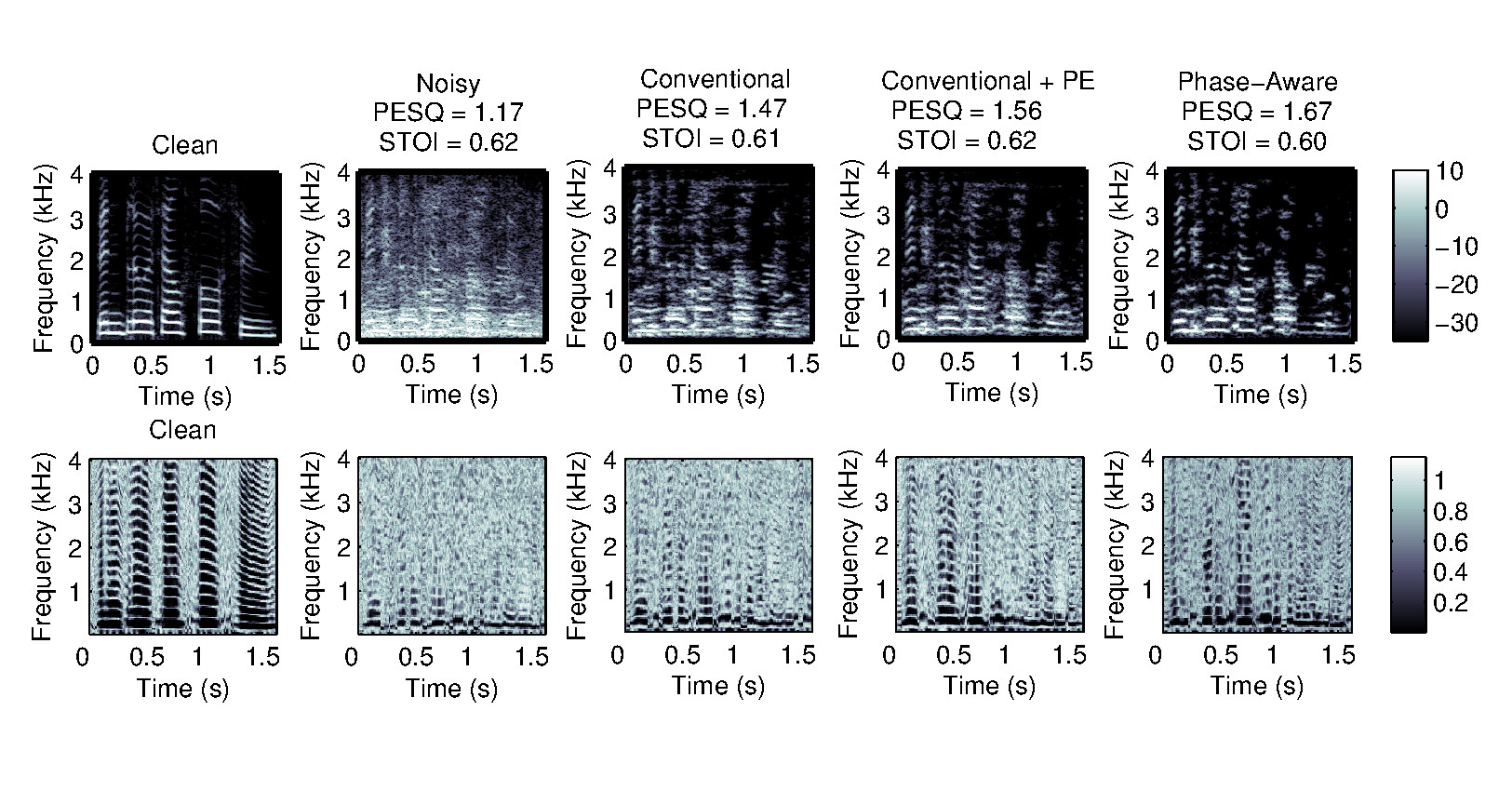

Single-channel speech enhancement refers to the reduction of noise signal components in a single-channel signal composed of both speech and noise. A wide range of single-channel speech enhancement algorithms is formulated in the short-time Fourier transform (STFT) domain. Traditional approaches assume statistical independence between signal components from different time-frequency regions, resulting in estimators that are functions of diagonal covariance matrices. More recent approaches drop this assumption and explicitly model dependencies between STFT bins. In this case, full covariance matrices of speech and noise are required to obtain optimal estimates of the clean speech spectrum, and their off-diagonal entries are complex-valued in general. We show that the performance of estimators resulting from such models is highly sensitive to the accuracy with which the phases of these off-diagonal entries are estimated. Since it is non-trivial to estimate the covariance phases from noisy speech data, we propose a linear multidimensional short-time spectral amplitude estimator that circumvents the need to estimate them. We evaluate the speech enhancement performance of this approach and compare it to relevant benchmarks that also take into account inter-channel dependencies.

Read the full article.

Early diagnosis of idiopathic pulmonary fibrosis (IPF) is of increasing importance due to recent success in slowing down disease progression. Auscultation is a helpful means for the early diagnosis of IPF. The auscultatory findings for IPF are fine (or velcro) crackles during inspiration, which are heard over the affected areas.

Read the full article.

One main goal of the recently completed FWF-funded project “Cross-layer pronunciation models for conversational speech” was to investigate interdisciplinary approaches to studying pronunciation variation and to show how researchers in the fields of automatic speech recognition, psycholinguistics, and phonetics/phonology can profit from integrating findings from each other’s fields. Such new approaches, covering all of these disciplines, are presented in the book “Rethinking Reduction”. The book contains 11 peer-reviewed chapters, of which two are overview chapters written by the editors and nine contain original research. By “reduction” we refer to acoustically reduced words. In natural conversations, for instance, a word like “yesterday” might be pronounced as “yeshay”, and a word like “haben” might be pronounced as “ham”. Phonetically reduced forms are extremely plentiful (e.g., 62% of word tokens in spontaneous Austrian German conversations are reduced), theoretically interesting (e.g., how do people learn to produce and understand the multiple reduced pronunciation variants existing per word?), and a key challenge for automatic speech recognition systems (e.g., new methods for acoustic and pronunciation modelling are needed). Despite the high frequency of reduced pronunciation variants, the canonical forms are still central to models of production and perception. Drawing from different fields and diverse languages, this volume brings new insights into the debate on abstractions and canonical forms in linguistics: their psychological reality, descriptive adequacy, and technical implementability.

Read the full article.

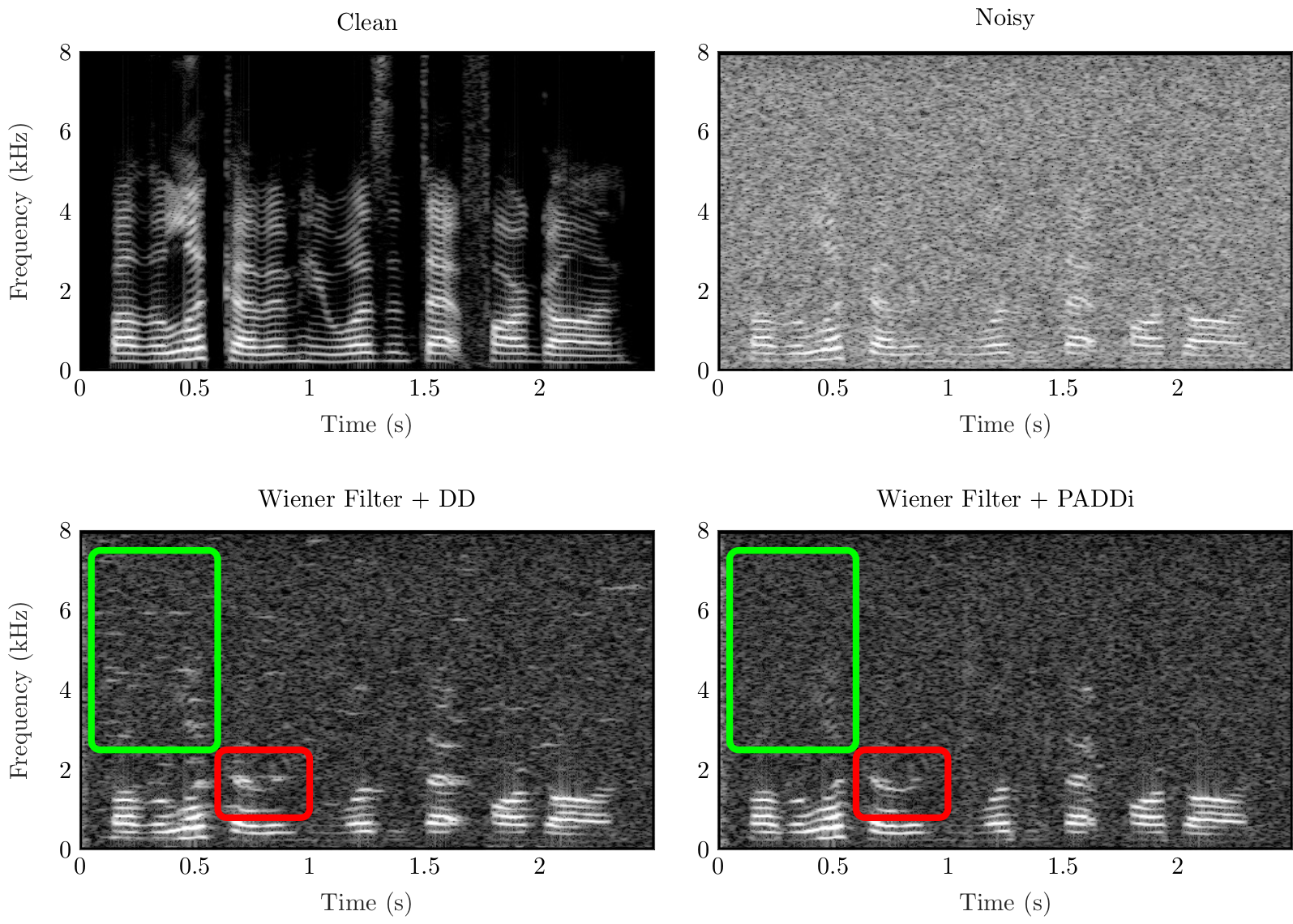

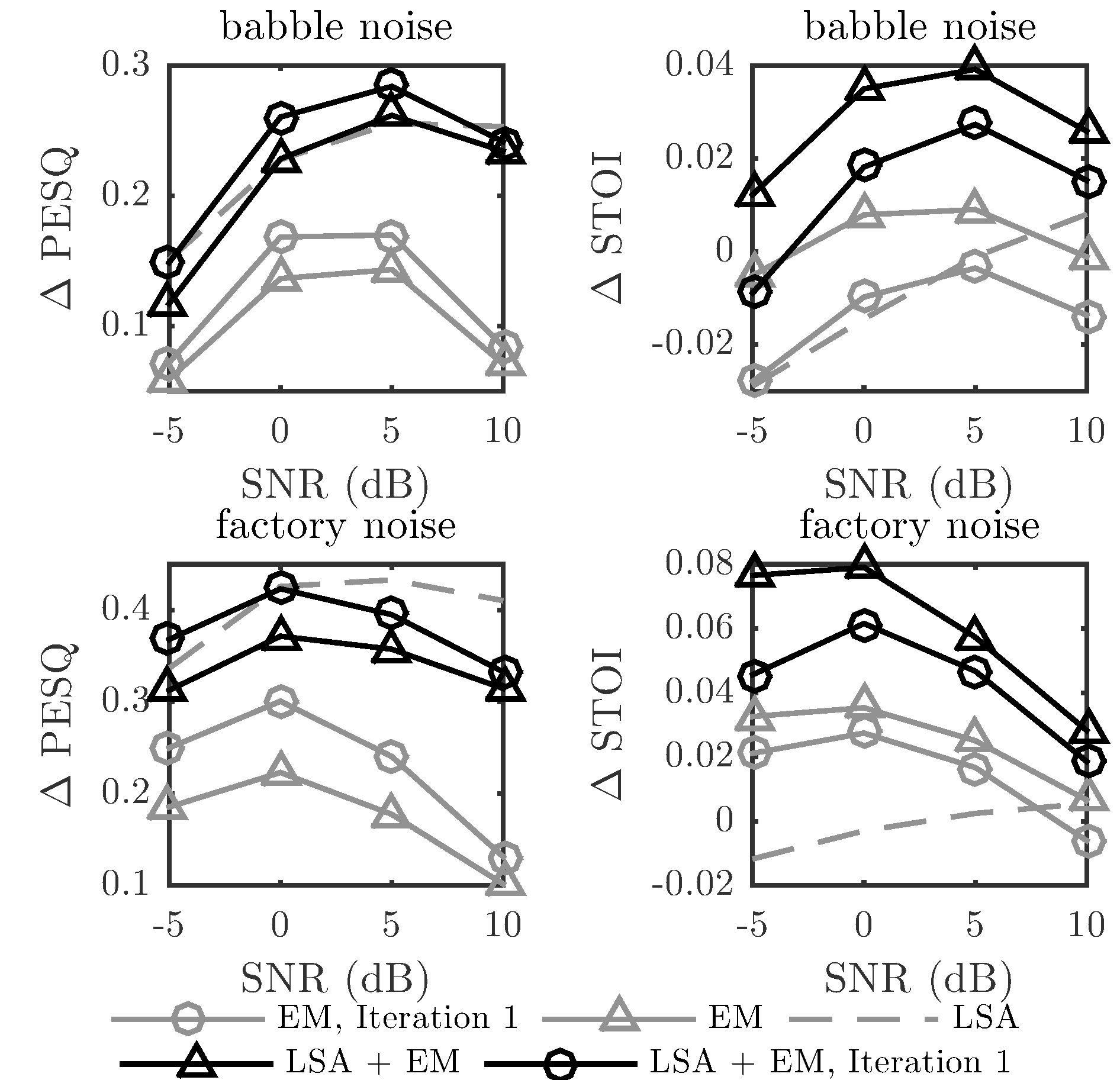

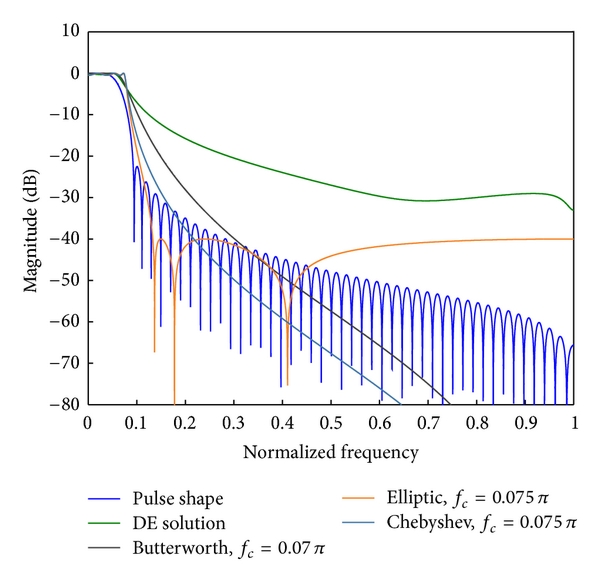

In this work, we address the problem of estimating the a priori SNR for single-channel speech enhancement. Similar to the decision-directed (DD) approach, we linearly combine the maximum likelihood estimate of the current a priori SNR with an estimate obtained from the previous frame. Based on the harmonic model for voiced speech, we propose to smooth the a priori SNR estimate along harmonic trajectories instead of fixed discrete Fourier transform (DFT) frequency bins. We interpolate from fixed DFT frequencies to harmonic frequencies using pitch-adaptive zero-padding in the time domain. The resulting pitch-adaptive decision-directed (PADDi) method increases the noise attenuation compared to the classical decision-directed approach and outperforms benchmark methods in terms of speech enhancement performance for several noise types at different SNRs, as quantified by objective evaluation criteria.
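
For reference, the classical decision-directed rule that PADDi builds on can be sketched per fixed DFT bin: the a priori SNR is a linear combination of the previous frame's clean-speech estimate and the current ML estimate $\max(\gamma - 1, 0)$. This is only the baseline DD estimator with a Wiener gain; PADDi's smoothing along harmonic trajectories via pitch-adaptive zero-padding is not shown. The smoothing factor $\alpha = 0.98$ and the $-25$ dB floor are common choices but assumptions here.

```python
import numpy as np


def decision_directed(noisy_power, noise_power, alpha=0.98, xi_min=10 ** (-25 / 10)):
    """Classical decision-directed a priori SNR estimate with a Wiener gain.

    noisy_power: (frames, bins) array of |Y|^2; noise_power: (bins,) noise PSD estimate.
    Returns the (frames, bins) spectral gains.
    """
    frames, bins = noisy_power.shape
    gains = np.empty_like(noisy_power, dtype=float)
    prev_clean = np.zeros(bins)  # |S_hat|^2 of the previous frame
    for t in range(frames):
        gamma = noisy_power[t] / noise_power  # a posteriori SNR
        ml = np.maximum(gamma - 1.0, 0.0)  # ML estimate of the current a priori SNR
        # Linear combination of the previous-frame estimate and the current ML estimate.
        xi = np.maximum(alpha * prev_clean / noise_power + (1.0 - alpha) * ml, xi_min)
        g = xi / (1.0 + xi)  # Wiener gain
        gains[t] = g
        prev_clean = g**2 * noisy_power[t]
    return gains


noise = np.ones(8)
g_noise = decision_directed(np.ones((20, 8)), noise)           # noise-only input
g_speech = decision_directed(100.0 * np.ones((20, 8)), noise)  # 20 dB input SNR
```

The heavy weight on the previous frame is what gives DD its smooth, low-musical-noise behavior, and also why it lags behind speech onsets.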

Read the full article.

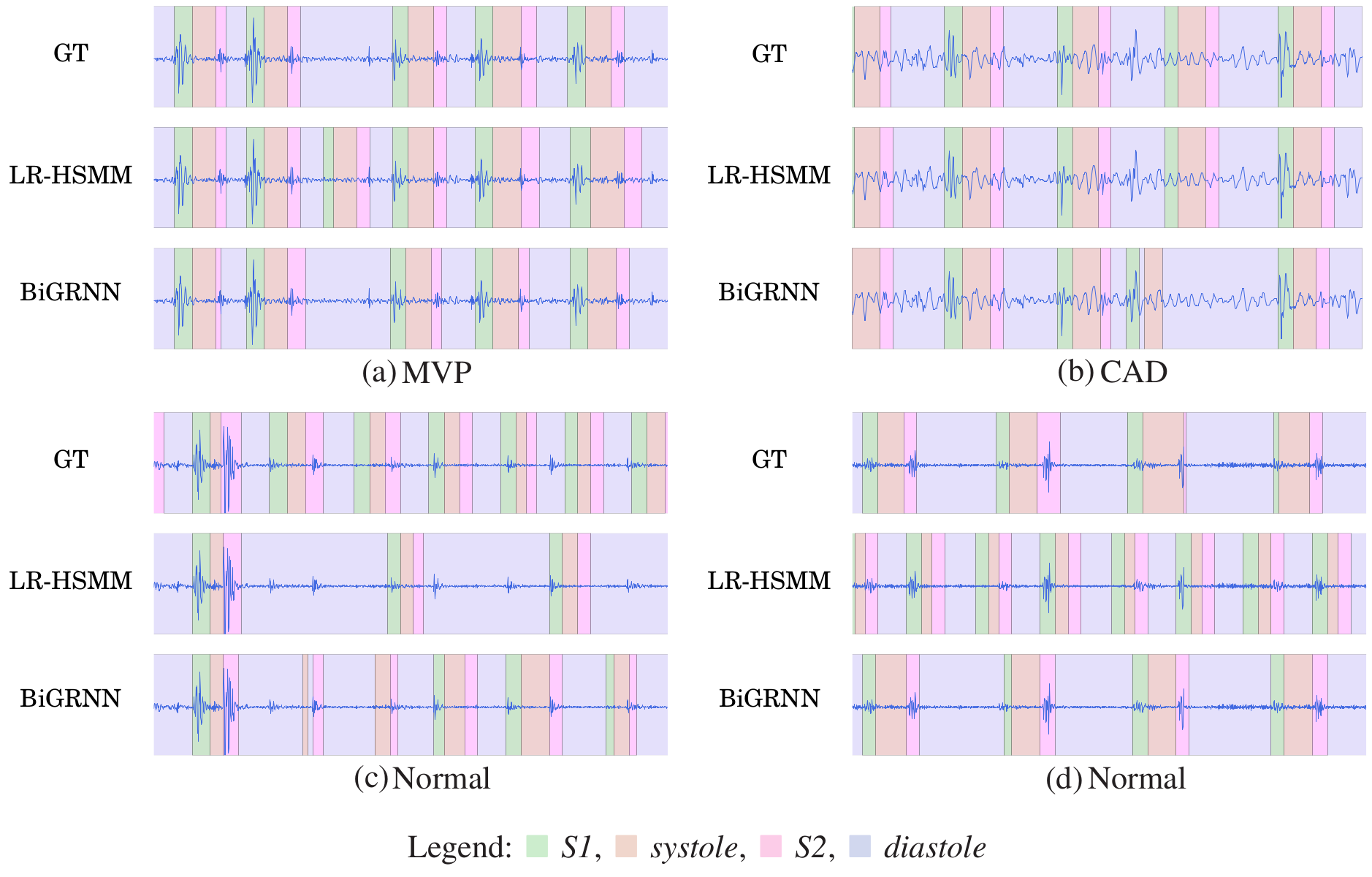

We present a method to accurately detect the state sequence first heart sound (S1)–systole–second heart sound (S2)–diastole, i.e., the positions of S1 and S2, in heart sound recordings. We propose an event detection approach that does not explicitly incorporate a priori information about the state durations. This also makes it applicable to recordings with cardiac arrhythmia and extendable to the detection of extra heart sounds (third and fourth heart sounds), heart murmurs, and other acoustic events. We use data from the 2016 PhysioNet/CinC Challenge, containing heart sound recordings and annotations of the heart sound states. From the recordings, we extract spectral and envelope features and investigate the performance of different deep recurrent neural network (DRNN) architectures for detecting the state sequence. We use virtual adversarial training, dropout, and data augmentation for regularization. We compare our results with the state-of-the-art method and achieve an average F1 score of approximately 96% over the four events of the state sequence on an independent test set.

Read the full article.

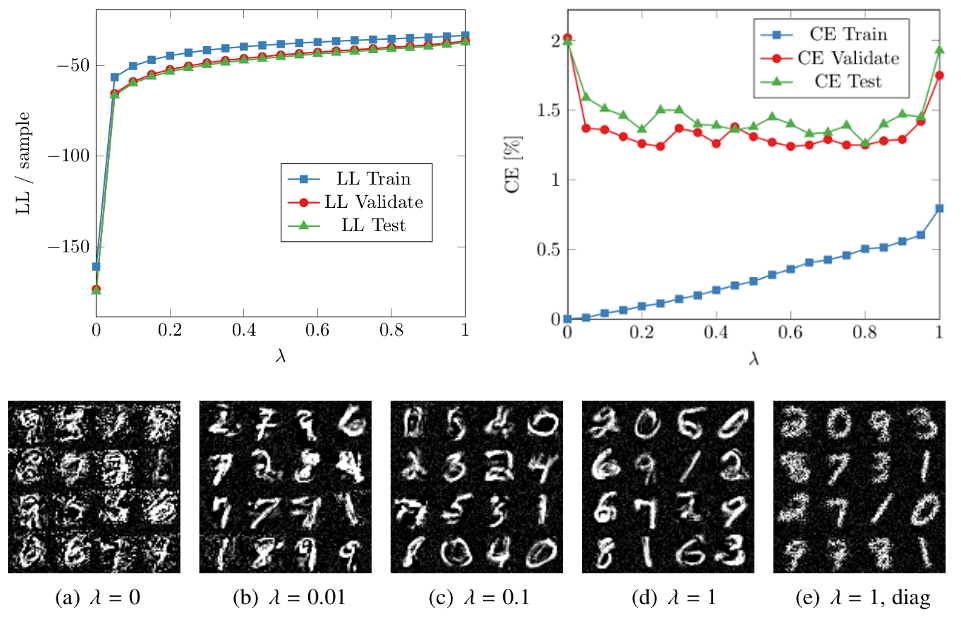

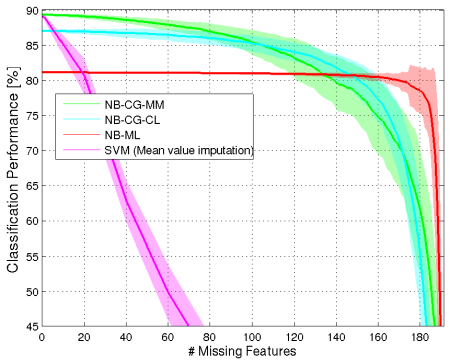

Recent work has shown substantial performance improvements of discriminative probabilistic models over their generative counterparts. However, since discriminative models do not capture the input distribution of the data, their use in missing data scenarios is limited. To utilize the advantages of both paradigms, we present an approach to train Gaussian mixture models (GMMs) in a hybrid generative-discriminative way. This is accomplished by optimizing an objective that trades off between a generative likelihood term and either a discriminative conditional likelihood term or a large margin term using stochastic optimization. Our model substantially improves the performance of classical maximum likelihood optimized GMMs while at the same time allowing for both a consistent treatment of missing features by marginalization, and the use of additional unlabeled data in a semi-supervised setting. For the covariance matrices, we employ a diagonal plus low-rank matrix structure to model important correlations while keeping the number of parameters small. We show that a non-diagonal matrix structure is crucial to achieve good performance and that the proposed structure can be utilized to considerably reduce classification time in case of missing features. The capabilities of our model are demonstrated in extensive experiments on real-world data.
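
The diagonal plus low-rank covariance $\Sigma = D + WW^\top$ pays off computationally: by the Woodbury identity and the matrix determinant lemma, the Gaussian log-density needs only an $r \times r$ solve instead of a $d \times d$ one. Missing features are handled by marginalization, which for a Gaussian simply keeps the observed rows of $D$ and $W$. The sketch shows only this density evaluation for one Gaussian component; the hybrid training objective and mixture weights are not shown, and the sizes are illustrative assumptions.

```python
import numpy as np


def lowrank_logpdf(x, mu, diag, W):
    """log N(x; mu, D + W W^T) with D = diag(diag) and W of shape (d, r), r << d.

    Uses the Woodbury identity and the matrix determinant lemma, so only an
    r x r system is solved instead of a d x d one.
    """
    r = x - mu
    Dinv_r = r / diag
    Dinv_W = W / diag[:, None]
    M = np.eye(W.shape[1]) + W.T @ Dinv_W  # r x r "capacitance" matrix
    _, logdet_M = np.linalg.slogdet(M)
    logdet = logdet_M + np.sum(np.log(diag))  # log det(D + W W^T)
    t = W.T @ Dinv_r
    quad = r @ Dinv_r - t @ np.linalg.solve(M, t)  # r^T (D + W W^T)^{-1} r
    return -0.5 * (len(x) * np.log(2.0 * np.pi) + logdet + quad)


rng = np.random.default_rng(1)
d, rank = 6, 2
diag, W = rng.random(d) + 0.5, rng.normal(size=(d, rank))
mu, x = rng.normal(size=d), rng.normal(size=d)
full = lowrank_logpdf(x, mu, diag, W)
# Missing features: the marginal of a Gaussian keeps the observed rows of D and W.
obs = np.array([0, 2, 5])
marginal = lowrank_logpdf(x[obs], mu[obs], diag[obs], W[obs])
```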

Read the full article.

The accuracy that can be achieved in time of arrival (ToA) estimation strongly depends on the signal bandwidth used. In an indoor environment, multipath propagation usually degrades the achievable accuracy due to overlapping signals. A similar effect can be observed for angle of arrival (AoA) estimation using arrays. This paper derives closed-form expressions for the Cramér-Rao lower bound (CRLB) on the achievable AoA and ToA error variances in the presence of dense multipath. The Fisher information expressions for both parameters allow an evaluation of the influence of channel parameters and system parameters such as the array geometry. Our results demonstrate that, due to the multipath influence, the AoA estimation accuracy is strongly related to the signal bandwidth. The theoretical results are evaluated with experimental data, and simulations are performed for ULAs with $M=2$ and $M=16$ array elements.

Read the full article.

Accurate indoor radio positioning requires high-resolution measurements to either utilize or mitigate the impact of multipath propagation. This high resolution can be achieved using a large signal bandwidth, leading to superior time resolution, and/or multiple antennas, leading to additional angle resolution. To exploit multiple antennas, phase-coherent measurements are typically necessary. In this work, we propose to employ non-phase-coherent measurements obtained from directional antennas for accurate single-anchor indoor positioning. The derived algorithm exploits beampatterns to jointly estimate multipath amplitudes to be used in maximum likelihood position estimation. Our evaluations based on measured and computer-generated data demonstrate only a minor degradation compared to a phase-coherent processing scheme.

Read the full article.

The aim is to achieve acoustic conditions similar to those of an already existing live room. The challenges that occur especially in small spaces are introduced and a number of acoustic absorbers are presented. The types of absorbers capable of damping low-frequency room modes are discussed. The acoustic measurements are evaluated; the reverberation time is selected as a key criterion and a low, frequency-independent target value is chosen. A 3D model for the acoustic simulation software is built and, on the basis of the simulations, various optimisation measures are developed. To adequately damp the room modes, edge or corner absorbers are selected as the basic concept for the enhancement, and compound panel absorbers are planned for the walls. To prevent flutter echoes and to provide sufficient absorption and diffusion, a panel system is designed for the ceiling. Finally, the acoustic measures taken are presented and evaluated, specifically regarding the reverberation time, room modes and reflections.
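Two standard formulas lie behind the quantities discussed above: the mode frequencies of a rectangular room, f = (c/2) · sqrt((nx/Lx)² + (ny/Ly)² + (nz/Lz)²), and Sabine's reverberation time T60 = 0.161 · V / A, with A the equivalent absorption area. The sketch below assumes an idealised rectangular room and uses hypothetical dimensions; Sabine's formula assumes a diffuse field, which is one reason why small rooms need separate treatment of the low-frequency modes.

```python
import itertools
import math

C_AIR = 343.0  # speed of sound in m/s (approximately, at 20 degC)

def room_modes(lx, ly, lz, f_max, c=C_AIR):
    """All rectangular-room mode frequencies up to f_max, as (f, (nx, ny, nz))."""
    n_max = [int(2 * f_max * L / c) + 1 for L in (lx, ly, lz)]
    modes = []
    for nx, ny, nz in itertools.product(*(range(n + 1) for n in n_max)):
        if nx == ny == nz == 0:
            continue
        f = 0.5 * c * math.sqrt((nx / lx) ** 2 + (ny / ly) ** 2 + (nz / lz) ** 2)
        if f <= f_max:
            modes.append((f, (nx, ny, nz)))
    return sorted(modes)

def sabine_rt60(volume, surfaces):
    """Sabine T60 in s; surfaces is a list of (area in m^2, absorption coefficient)."""
    absorption_area = sum(area * alpha for area, alpha in surfaces)
    return 0.161 * volume / absorption_area

# Usage with a hypothetical 4 m x 3 m x 2.5 m room.
low_modes = room_modes(4.0, 3.0, 2.5, f_max=100.0)
```

The lowest entries of `low_modes` are the axial modes that corner or edge absorbers are meant to damp.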

Read the full article.

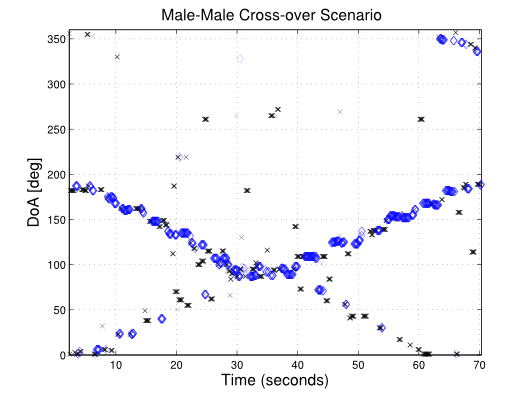

Highly accurate location information is a key facilitator of future services in the commercial and public sectors. Positioning and tracking wireless nodes in absolute coordinates usually requires information provided by technical infrastructure, e.g. satellites or fixed anchor nodes, whose maintenance is costly and whose limited coverage restricts the availability of the positioning service. In this paper, we present an algorithm for tracking absolute positions without using information from fixed anchors, odometers or inertial measurement units. We perform radio channel measurements in order to exploit the position-related information contained in multipath components (MPCs). Tracking of the absolute node positions is enabled by estimating MPC parameters and then associating these parameters with a floorplan. To account for uncertainties in the floorplan and for propagation effects like diffraction and penetration, we recursively update the provided floorplan using the measured MPC parameters. Using data from ultra-wideband channel measurements, we demonstrate the ability to localize two agent nodes without any further infrastructure. Further, we show the potential performance gain if one fixed anchor is available, and we validate the algorithm for a range of signal bandwidths and numbers of nodes.
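Associating MPC parameters with a floorplan typically relies on the image-source model: a specular reflection at a wall behaves like a direct path from the transmitter mirrored at that wall. The sketch below predicts line-of-sight and first-order reflection delays between two nodes and associates measured delays by nearest neighbour within a gate. It is a simplified illustration, not the paper's algorithm; it ignores reflection-point visibility, diffraction and floorplan updates, and all names are hypothetical.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s

def mirror(point, wall_a, wall_b):
    """Mirror a 2-D point at the infinite line through wall_a and wall_b."""
    p, a, b = (np.asarray(v, dtype=float) for v in (point, wall_a, wall_b))
    u = (b - a) / np.linalg.norm(b - a)           # unit direction of the wall
    foot = a + np.dot(p - a, u) * u               # orthogonal projection onto the wall
    return 2.0 * foot - p

def expected_delays(tx, rx, walls):
    """LOS delay followed by one first-order reflection delay per wall."""
    tx, rx = np.asarray(tx, dtype=float), np.asarray(rx, dtype=float)
    delays = [np.linalg.norm(rx - tx) / C]
    for a, b in walls:
        delays.append(np.linalg.norm(rx - mirror(tx, a, b)) / C)
    return np.array(delays)

def associate(measured, expected, gate):
    """Nearest-neighbour association (measured index, expected index) within a gate in s."""
    pairs = []
    for i, tau in enumerate(measured):
        j = int(np.argmin(np.abs(expected - tau)))
        if abs(expected[j] - tau) <= gate:
            pairs.append((i, j))
    return pairs
```

Here `tx` and `rx` are two agent nodes, so no fixed anchor is required; a tracking filter would evaluate such predictions at each candidate pair of agent positions.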

Read the full article.

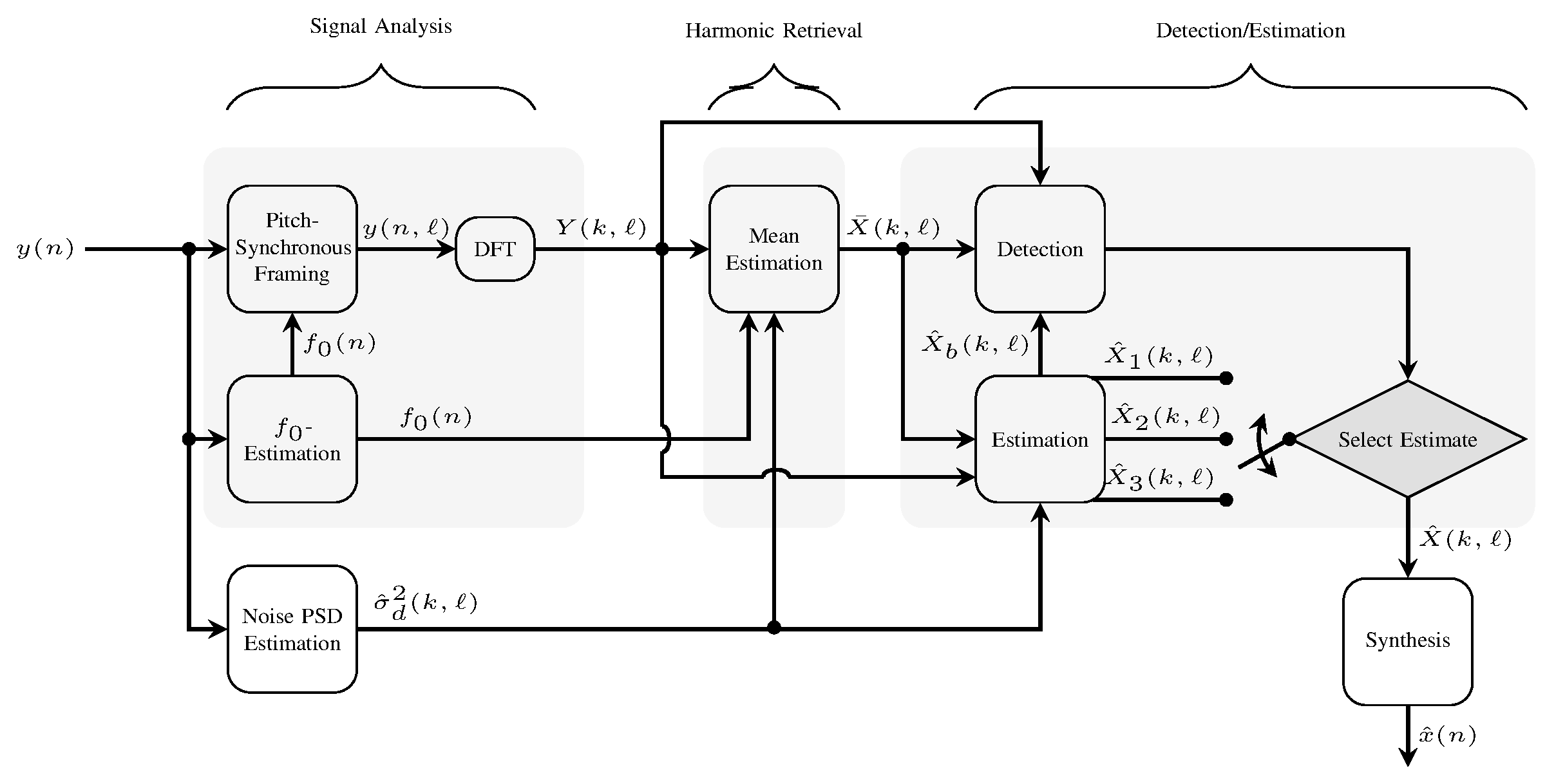

Speech enhancement methods formulated in the STFT domain vary in the statistical assumptions made about the STFT coefficients, in the optimization criteria applied, or in the models of the signal components. Recently, approaches relying on a stochastic-deterministic speech model have been proposed. The deterministic part of the signal corresponds to harmonically related sinusoids, often used to represent voiced speech. The stochastic part models signal components that are not captured by the deterministic components. In this work, we consider this scenario from a new perspective, yielding three main contributions. First, a pitch-synchronous signal representation is considered and shown to be advantageous for estimating the harmonic model parameters. Second, we model the harmonic amplitudes in voiced speech as random variables with frequency-bin-dependent Gamma distributions. Finally, distinct estimators are derived for the different models of voiced speech, unvoiced speech, and speech absence. To select among the resulting estimates, we take into account the mutual impact of detection and estimation by proposing a binary decision framework derived from a Bayesian risk function.
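One way to see why a pitch-synchronous representation helps with harmonic-model estimation: if the frame spans an integer number P of pitch periods, the h-th harmonic falls exactly on DFT bin P·h, so its amplitude and phase can be read off a single bin without spectral leakage. The sketch assumes a constant, known fundamental frequency and a rectangular window, which real speech does not satisfy exactly; it is not the paper's signal representation, and the function names are hypothetical.

```python
import numpy as np

def harmonic_signal(f0, amps, phases, fs, n):
    """Deterministic voiced-speech model: sum_h a_h cos(2 pi h f0 t + phi_h)."""
    t = np.arange(n) / fs
    return sum(a * np.cos(2 * np.pi * (h + 1) * f0 * t + p)
               for h, (a, p) in enumerate(zip(amps, phases)))

def pitch_synchronous_frame_length(f0, fs, periods):
    """Frame length covering an integer number of pitch periods (rounded to samples)."""
    return int(round(periods * fs / f0))

fs, f0 = 8000, 200.0                      # period of exactly 40 samples
N = pitch_synchronous_frame_length(f0, fs, periods=4)
x = harmonic_signal(f0, [1.0, 0.5, 0.25], [0.3, -1.0, 2.0], fs, N)
spectrum = np.fft.rfft(x)                 # harmonic h lands on bin 4 * h
```

Reading bin 4·h of `spectrum` then gives (N/2)·a_h·exp(jφ_h) for each harmonic.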

Read the full article.

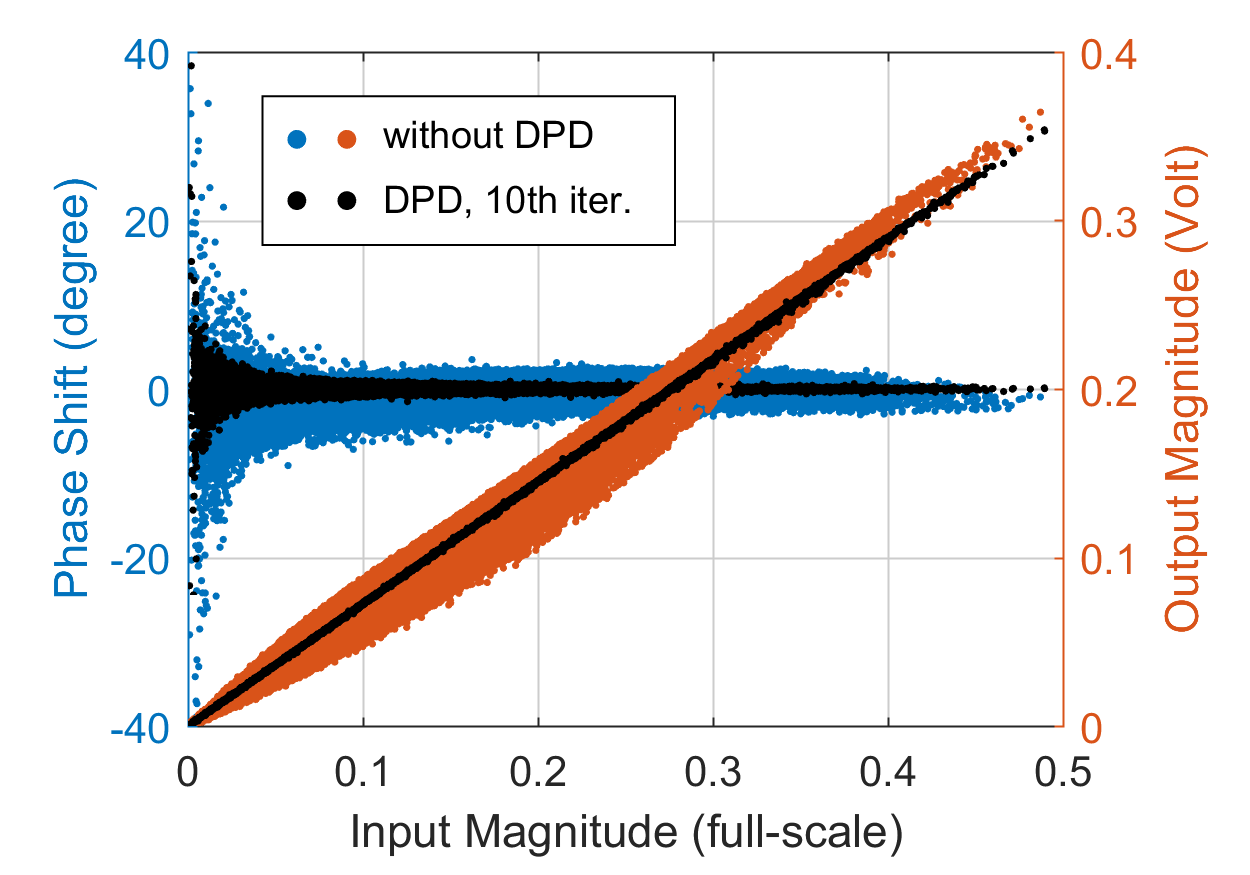

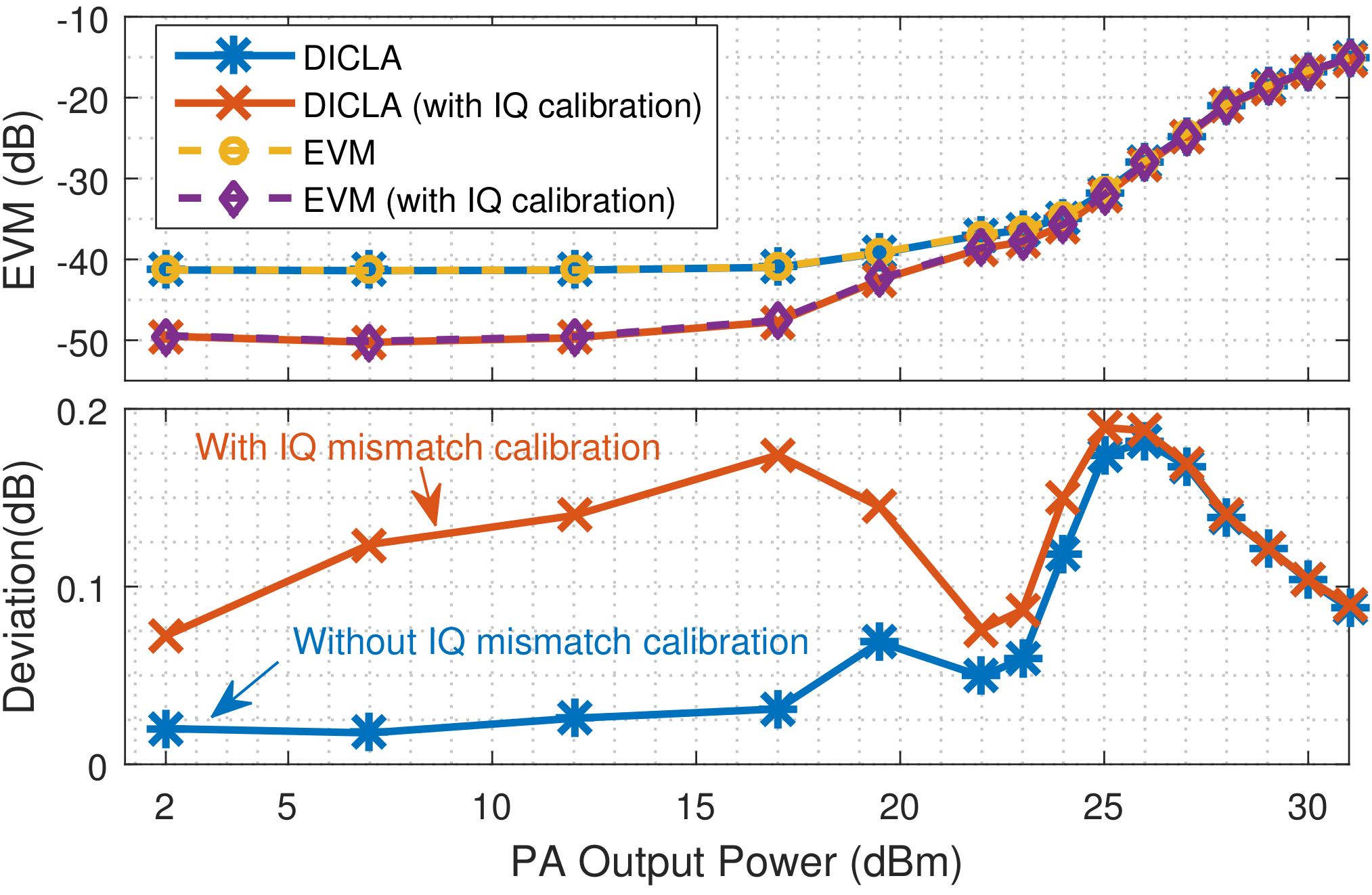

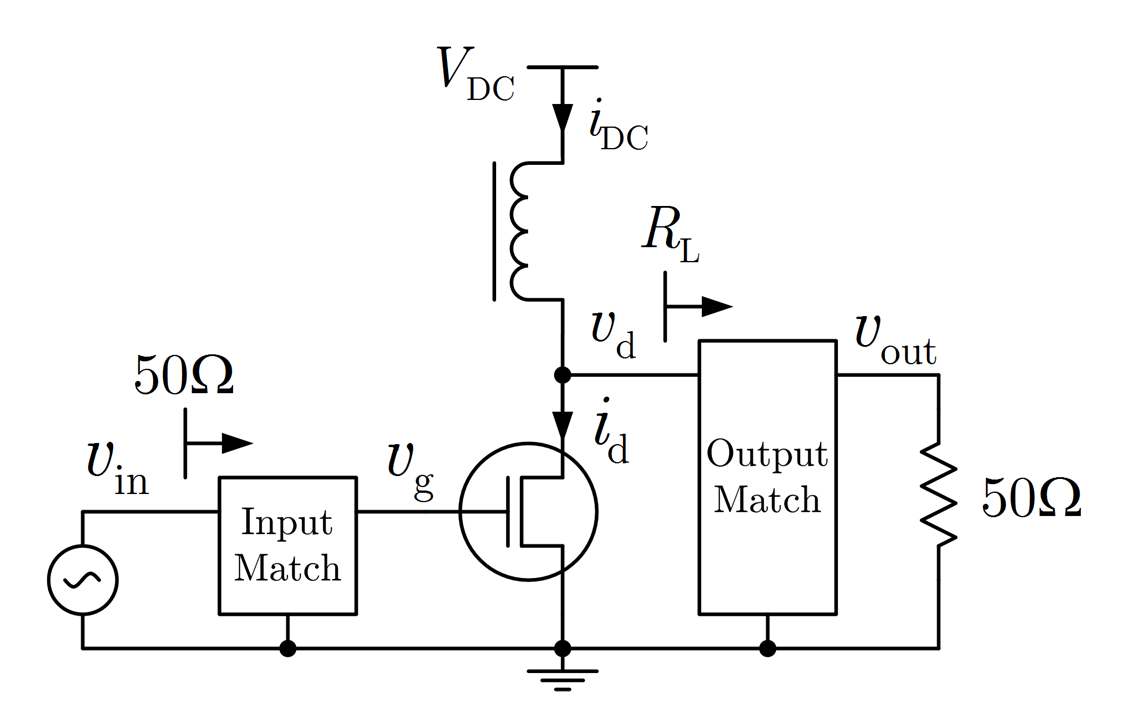

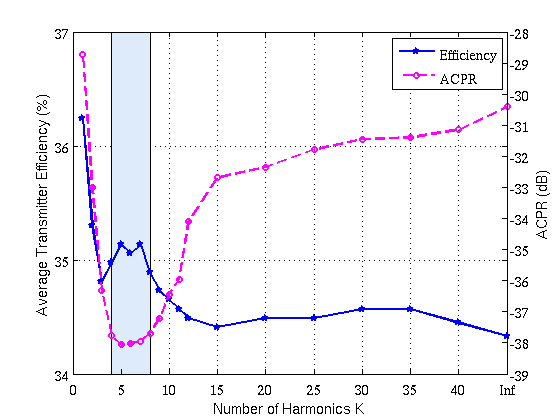

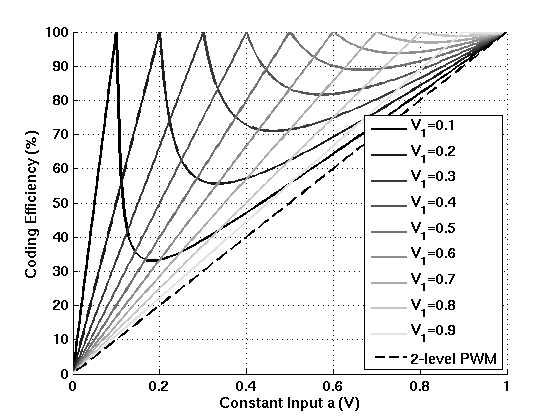

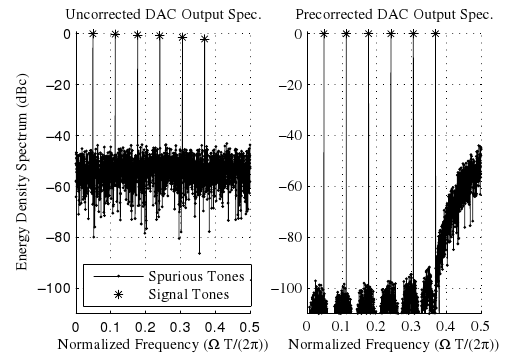

The IMS student design competitions are an annual event at the IEEE MTT-S International Microwave Symposium. In 2017, the SPSC lab members Harald Enzinger and Karl Freiberger won first prize in the competition “Power Amplifier Linearization through Digital Predistortion”. The aim of this competition was to linearize a highly efficient but nonlinear envelope-tracking power amplifier in dual-band operation by means of digital predistortion. The winning solution combines several state-of-the-art methods for crest factor reduction (CFR) and digital predistortion (DPD) with new extensions developed specifically for this competition. You can find out more about this winning solution in the January/February issue of the IEEE Microwave Magazine. A preprint version of the paper can be downloaded from the SPSC website.
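For readers new to DPD, a common baseline is a memory-polynomial model identified with the indirect learning architecture: a post-inverse is fitted by least squares from the normalised PA output back to its input, then copied in front of the PA. The sketch below shows that textbook baseline, not the competition-winning solution (CFR and the dual-band extensions are omitted). The PA model, nonlinearity orders and function names are hypothetical.

```python
import numpy as np

def mp_basis(x, K, M):
    """Memory-polynomial basis columns x[n-m] |x[n-m]|^(k-1), k = 1..K, m = 0..M-1."""
    cols = []
    for m in range(M):
        xm = np.concatenate([np.zeros(m, dtype=complex), x[:len(x) - m]])
        for k in range(1, K + 1):
            cols.append(xm * np.abs(xm) ** (k - 1))
    return np.stack(cols, axis=1)

def fit_ila(x, y, gain, K=5, M=2):
    """Indirect learning: LS fit of a post-inverse mapping y / gain back to x."""
    coeffs, *_ = np.linalg.lstsq(mp_basis(y / gain, K, M), x, rcond=None)
    return coeffs

def apply_dpd(x, coeffs, K=5, M=2):
    """Use the identified post-inverse as predistorter."""
    return mp_basis(x, K, M) @ coeffs

def nmse_db(ref, est):
    return 10 * np.log10(np.sum(np.abs(est - ref) ** 2) / np.sum(np.abs(ref) ** 2))

def pa(u):
    """Hypothetical PA: cubic compression plus a small linear memory term."""
    u_del = np.concatenate([[0], u[:-1]])
    return u - 0.15 * u * np.abs(u) ** 2 + 0.05 * u_del

rng = np.random.default_rng(1)
x = 0.3 * (rng.normal(size=20_000) + 1j * rng.normal(size=20_000)) / np.sqrt(2)
coeffs = fit_ila(x, pa(x), gain=1.0)
z = pa(apply_dpd(x, coeffs))
print(nmse_db(x, pa(x)), nmse_db(x, z))   # error without and with DPD
```

In a real setup the identification would be iterated on measured data, and the target gain would be chosen to respect the PA's peak power.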

Read the full article.

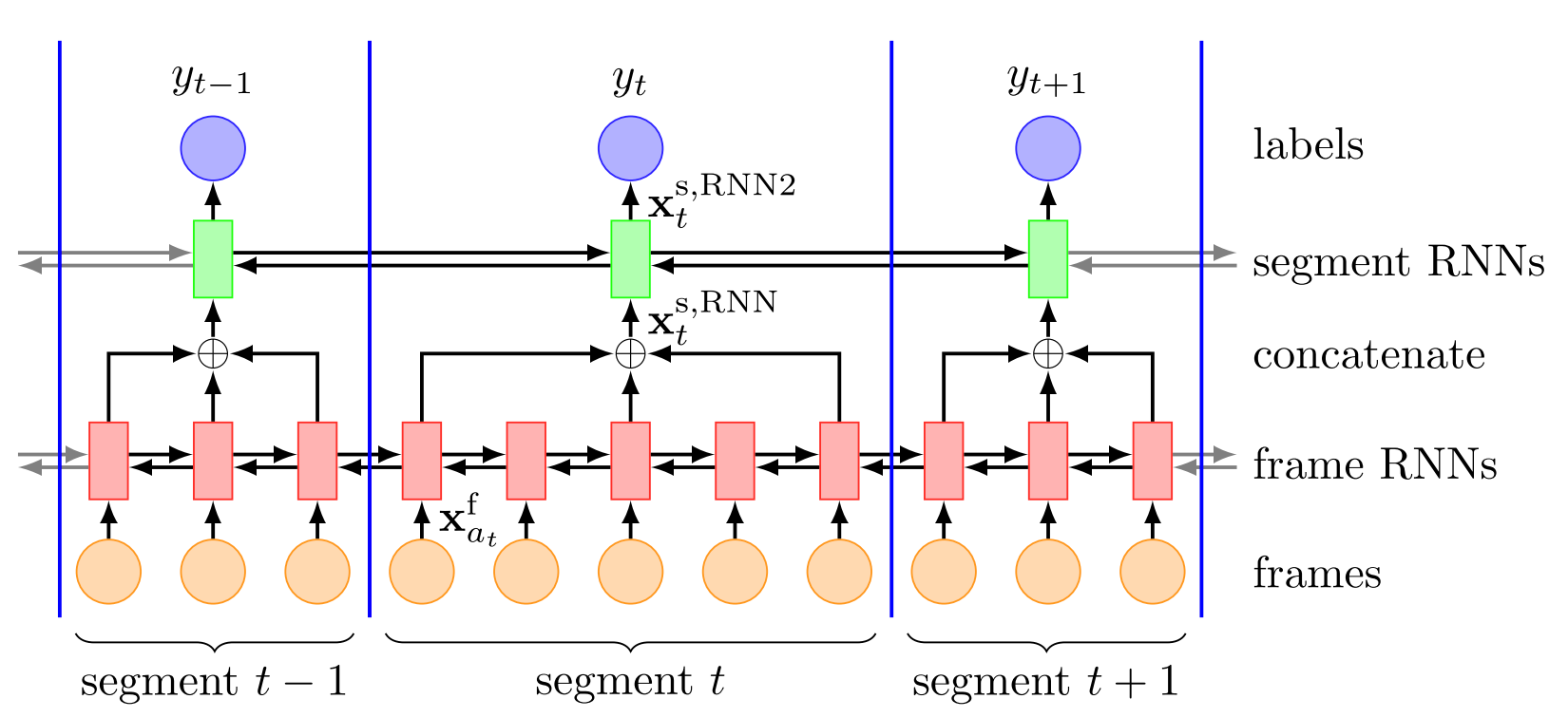

We introduce a simple and efficient frame- and segment-level RNN model (FS-RNN) for phone classification. It processes the input at frame level and at segment level using bidirectional gated RNNs. This type of processing is important for exploiting the (temporal) information more effectively than (i) models that process the input solely at frame level and (ii) models that process the input at segment level using features obtained by heuristic aggregation of frame-level features. Furthermore, we incorporate the activations of the last hidden layer of the FS-RNN as an additional feature type in a neural higher-order CRF (NHO-CRF). In experiments on the TIMIT phone classification task, we demonstrate excellent performance, reporting a phone error rate of 13.8% for the FS-RNN model and 11.9% when it is combined with the NHO-CRF. In both cases, we significantly exceed the state-of-the-art performance.
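To make the frame-level and segment-level idea concrete, the sketch below runs a bidirectional GRU over the frames of one phone segment, pools the frame-level states over a few sub-segments, and runs a second bidirectional GRU on top, returning the final forward and backward states as a segment embedding. The pooling scheme, sizes and random weights are hypothetical choices for illustration; the exact FS-RNN architecture is described in the paper, not here.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

class GRU:
    """Minimal GRU with random weights (illustration only, no training)."""
    def __init__(self, n_in, n_hid, rng):
        s = 1.0 / np.sqrt(n_hid)
        self.W = rng.uniform(-s, s, size=(3, n_hid, n_in))    # input weights: z, r, candidate
        self.U = rng.uniform(-s, s, size=(3, n_hid, n_hid))   # recurrent weights
        self.b = np.zeros((3, n_hid))
        self.n_hid = n_hid

    def run(self, xs):
        h, out = np.zeros(self.n_hid), []
        for x in xs:
            z = sigmoid(self.W[0] @ x + self.U[0] @ h + self.b[0])        # update gate
            r = sigmoid(self.W[1] @ x + self.U[1] @ h + self.b[1])        # reset gate
            h_cand = np.tanh(self.W[2] @ x + self.U[2] @ (r * h) + self.b[2])
            h = (1 - z) * h + z * h_cand
            out.append(h)
        return np.array(out)

def bigru(fwd, bwd, xs):
    """Concatenate forward states with time-aligned backward states."""
    return np.concatenate([fwd.run(xs), bwd.run(xs[::-1])[::-1]], axis=1)

def fs_embedding(frames, frame_rnns, seg_rnns, n_sub=3):
    """Hypothetical two-level encoding of one segment (frames: T x feature_dim)."""
    H = bigru(*frame_rnns, frames)                                   # frame level
    S = np.stack([c.mean(axis=0) for c in np.array_split(H, n_sub)])  # pooled sub-segments
    G = bigru(*seg_rnns, S)                                          # segment level
    n = seg_rnns[0].n_hid
    return np.concatenate([G[-1, :n], G[0, n:]])                     # final fwd and bwd states

rng = np.random.default_rng(0)
frame_rnns = (GRU(13, 8, rng), GRU(13, 8, rng))
seg_rnns = (GRU(16, 6, rng), GRU(16, 6, rng))
emb = fs_embedding(rng.normal(size=(20, 13)), frame_rnns, seg_rnns)
```

A classifier (or, as in the paper, a CRF) would then consume `emb` or the hidden activations derived from it.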

Read the full article.

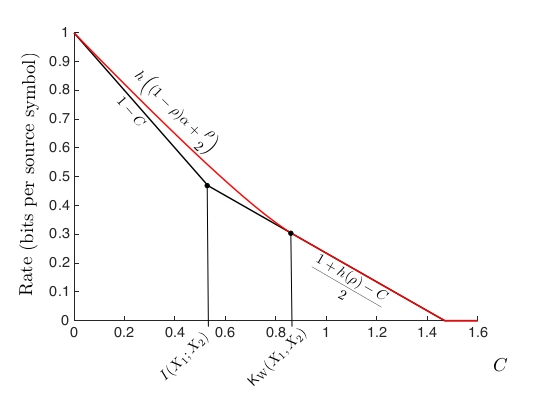

We consider a lossy single-user caching problem with correlated sources – just think of streaming compressed videos! Most users will watch these videos in the evening, leading to network congestion. If you have a player with a cache, though, you can fill this cache with data during times of low network usage, even though you may not know which video the user wants to watch in the evening. In our paper, we characterize the transmission rate required in the evening as a function of the cache size and as a function of the distortion one accepts when watching the videos. We furthermore hint at what should be put in the cache such that it is useful for a variety of videos, and we connect these results to the common-information measures proposed by Wyner and by Gács and Körner.

Read the full article.

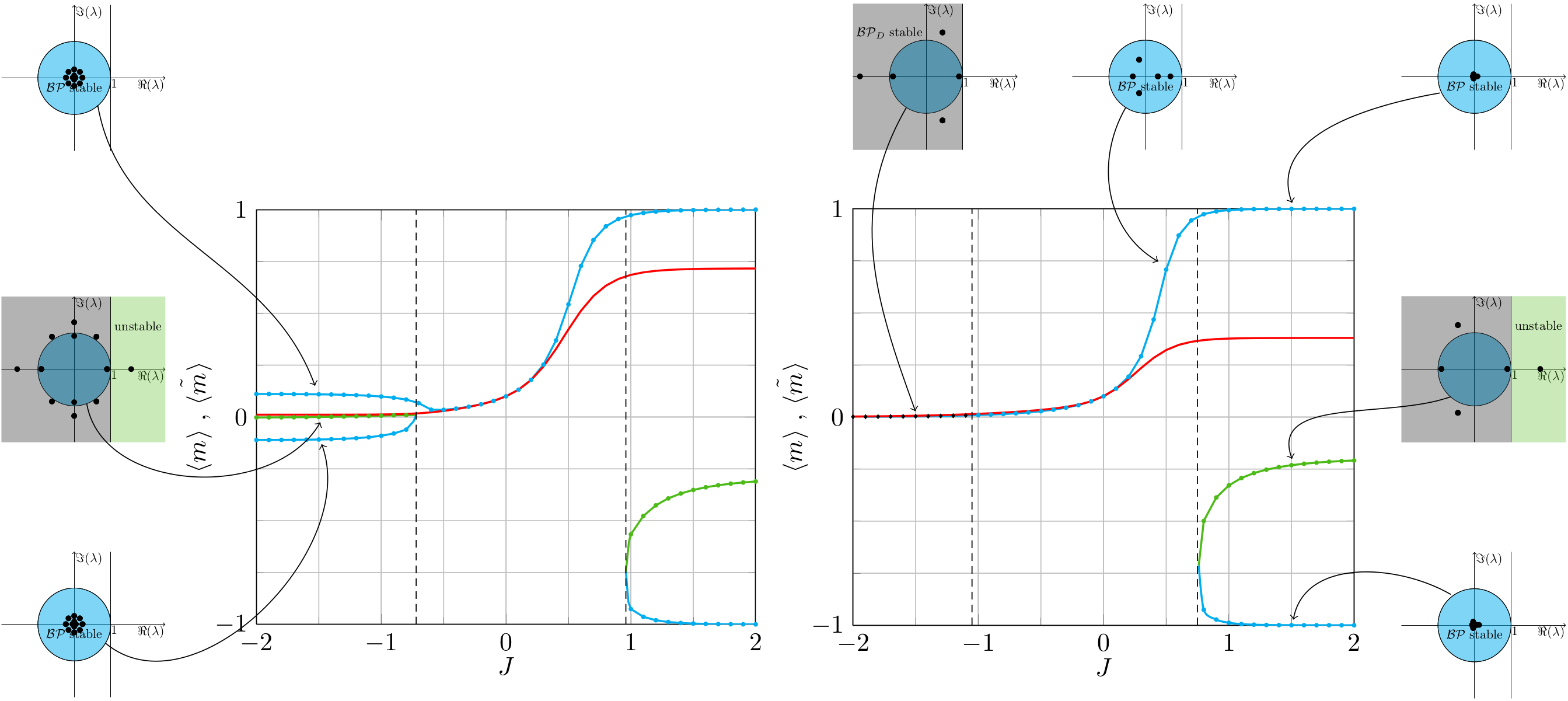

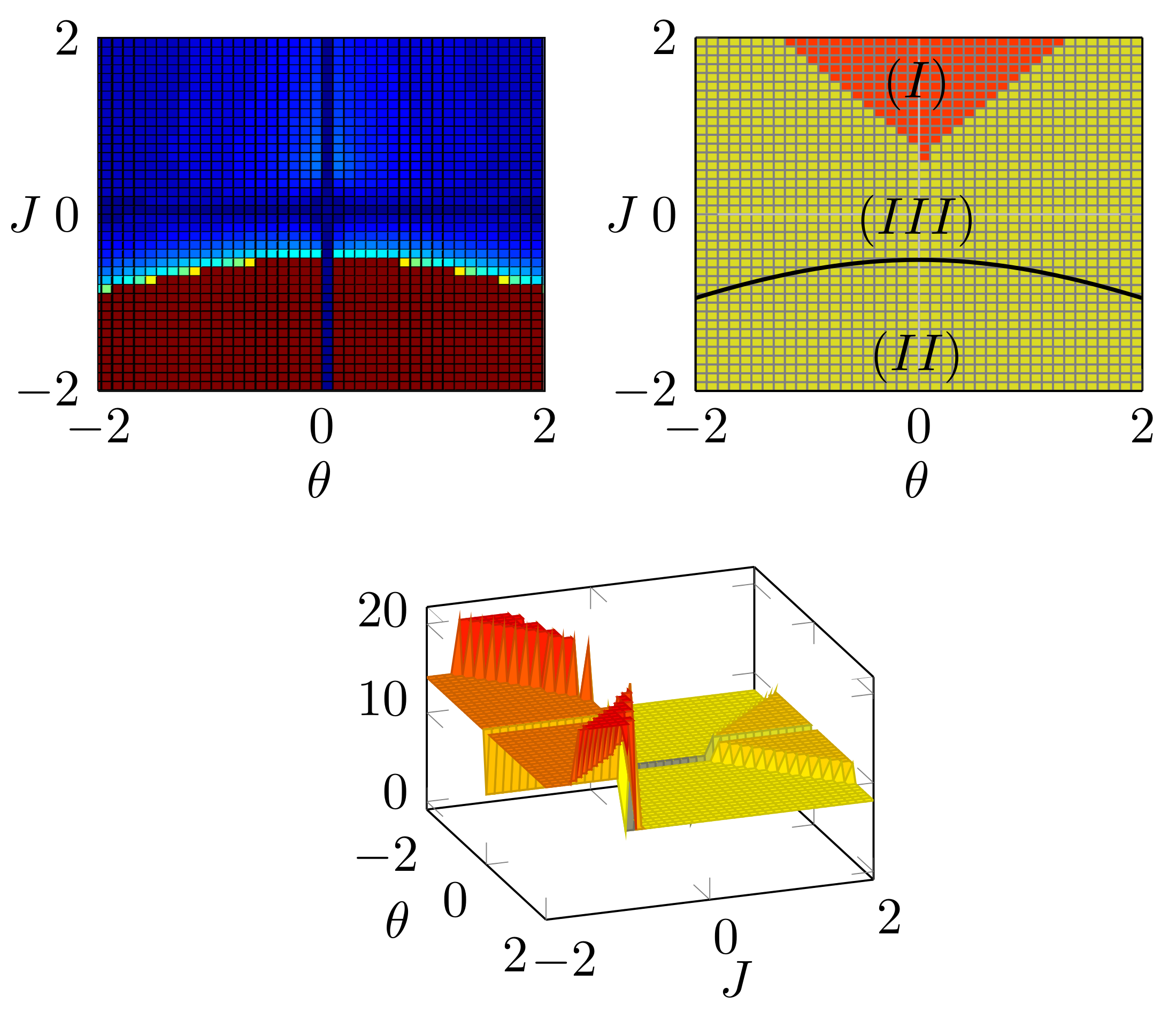

In this work, we obtain all fixed points of belief propagation and perform a local stability analysis. We consider pairwise interactions of binary random variables and investigate the influence of non-vanishing fields and finite-size graphs on the performance of belief propagation; local stability is heavily influenced by these properties. We show why non-vanishing fields help to achieve convergence and increase the accuracy of belief propagation. We further explain the close connections between the underlying graph structure, the existence of multiple solutions, and the capability of belief propagation (with damping) to converge. Finally, we provide insights into why finite-size graphs behave better than infinite-size graphs.
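The setting above (binary variables, pairwise couplings, local fields, damping) can be explored with a small damped loopy BP implementation in the log-domain "cavity field" form, where the message from i to j is u = atanh(tanh(J_ij) tanh(θ_i + sum of other incoming messages)). This is a generic sketch for experimentation, not the fixed-point enumeration or stability analysis from the paper; function names are hypothetical. On a tree BP is exact, which the enumeration helper lets you check.

```python
import itertools
import numpy as np

def loopy_bp(n, edges, J, theta, damping=0.5, iters=500, tol=1e-10):
    """Damped BP for p(x) ∝ exp(sum J_ij x_i x_j + sum theta_i x_i), x_i in {-1, +1}.

    Returns approximate marginals P(x_i = +1) and a convergence flag.
    """
    nbrs = {i: [] for i in range(n)}
    coupling = {}
    for (i, j), Jij in zip(edges, J):
        nbrs[i].append(j); nbrs[j].append(i)
        coupling[(i, j)] = coupling[(j, i)] = Jij
    u = {(i, j): 0.0 for i in range(n) for j in nbrs[i]}   # message i -> j
    converged = False
    for _ in range(iters):
        new = {}
        for (i, j) in u:
            cavity = theta[i] + sum(u[(k, i)] for k in nbrs[i] if k != j)
            new[(i, j)] = np.arctanh(np.tanh(coupling[(i, j)]) * np.tanh(cavity))
        delta = max(abs(new[e] - u[e]) for e in u)
        u = {e: damping * u[e] + (1 - damping) * new[e] for e in u}
        if delta < tol:
            converged = True
            break
    fields = np.array([theta[i] + sum(u[(k, i)] for k in nbrs[i]) for i in range(n)])
    return 1.0 / (1.0 + np.exp(-2.0 * fields)), converged

def exact_marginals(n, edges, J, theta):
    """Brute-force P(x_i = +1) by enumerating all 2^n states (small n only)."""
    probs, Z = np.zeros(n), 0.0
    for x in itertools.product([-1, 1], repeat=n):
        w = np.exp(sum(Jij * x[i] * x[j] for (i, j), Jij in zip(edges, J))
                   + sum(t * xi for t, xi in zip(theta, x)))
        Z += w
        probs += w * (np.array(x) == 1)
    return probs / Z
```

Varying `theta` and the couplings on small cycles is a quick way to observe the effect of non-vanishing fields on convergence.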

Read the full article.

High-accuracy indoor radio positioning can be achieved by using high signal bandwidths to increase the time resolution. Multiple fixed anchor nodes are needed to compute the position; alternatively, reflected multipath components can be exploited with a single anchor. In this work, we propose a method that exploits both the time and the angular domain with a single anchor. This is enabled by switching between multiple directional ultra-wideband (UWB) antennas. The UWB transmission enables multipath-resolved indoor positioning, while the directionality increases the robustness to undesired, interfering multipath propagation, with the benefit that the required bandwidth is reduced. The positioning accuracy and performance bounds of the switched antenna are compared to those of an omni-directional antenna. Two positioning algorithms are presented, based on different available prior knowledge: one uses floorplan information only, the other additionally uses the beampatterns of the antennas. We show that the accuracy of the position estimate is significantly improved, especially in the tangential direction with respect to the anchor.

Read the full article.

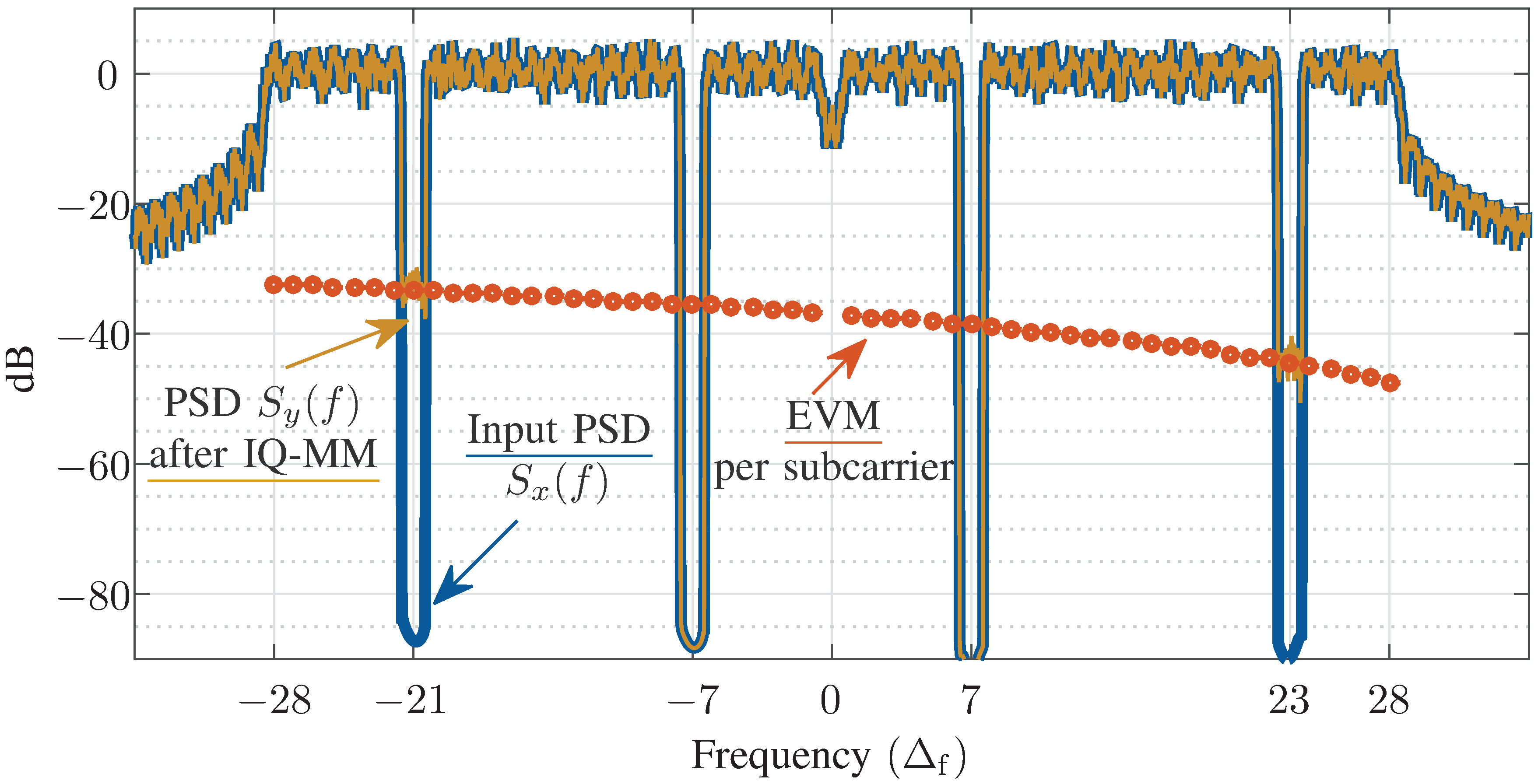

We present a method for measuring a communication signal’s inband error caused by a non-ideal device under test (DUT). In contrast to the established error vector magnitude (EVM), we do not demodulate the data symbols. Rather, we subtract linearly correlated (SLIC) parts from the DUT output and analyze the power spectral density of the remaining error signal. Consequently, we do not require in-depth knowledge of the modulation standard. This makes our method well suited for measurements with cutting-edge communication signals, without the need to purchase or implement a dedicated EVM analyzer. We show that our SLIC-EVM approach allows for estimating the subcarrier-dependent EVM for typical transceiver impairments like IQ mismatch, phase noise, and power amplifier (PA) nonlinearity. We present measurement results of a WLAN PA, showing less than 0.2 dB absolute deviation from the regular EVM with demodulation.
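The "subtract linearly correlated parts" step can be sketched as a least-squares FIR fit from the reference input x to the DUT output y; whatever the filter cannot explain is treated as the inband error, and its spectrum relative to the linear part gives a frequency-dependent EVM estimate. This is a minimal illustration under assumptions (filter length, block-FFT spectral estimate, function names are hypothetical), not the exact SLIC procedure from the paper.

```python
import numpy as np

def slic_decompose(x, y, n_taps=8):
    """Split y into the part linearly correlated with x (LS FIR fit) and a residual."""
    N = len(x)
    X = np.stack([np.concatenate([np.zeros(m, dtype=complex), x[:N - m]])
                  for m in range(n_taps)], axis=1)
    h, *_ = np.linalg.lstsq(X, y, rcond=None)
    y_lin = X @ h
    return y_lin, y - y_lin

def evm_db(y_lin, e):
    """Overall EVM estimate: error power over linear-part power, in dB."""
    return 10 * np.log10(np.mean(np.abs(e) ** 2) / np.mean(np.abs(y_lin) ** 2))

def evm_spectrum_db(y_lin, e, nfft=64):
    """Frequency-dependent EVM from block-averaged periodograms of both parts."""
    blocks = len(e) // nfft
    E = np.fft.fft(e[:blocks * nfft].reshape(blocks, nfft), axis=1)
    Y = np.fft.fft(y_lin[:blocks * nfft].reshape(blocks, nfft), axis=1)
    return 10 * np.log10(np.mean(np.abs(E) ** 2, axis=0) / np.mean(np.abs(Y) ** 2, axis=0))
```

Because no demodulation is involved, the same routine applies to any waveform, provided the reference x is available and time-aligned with y.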

Read the full article.

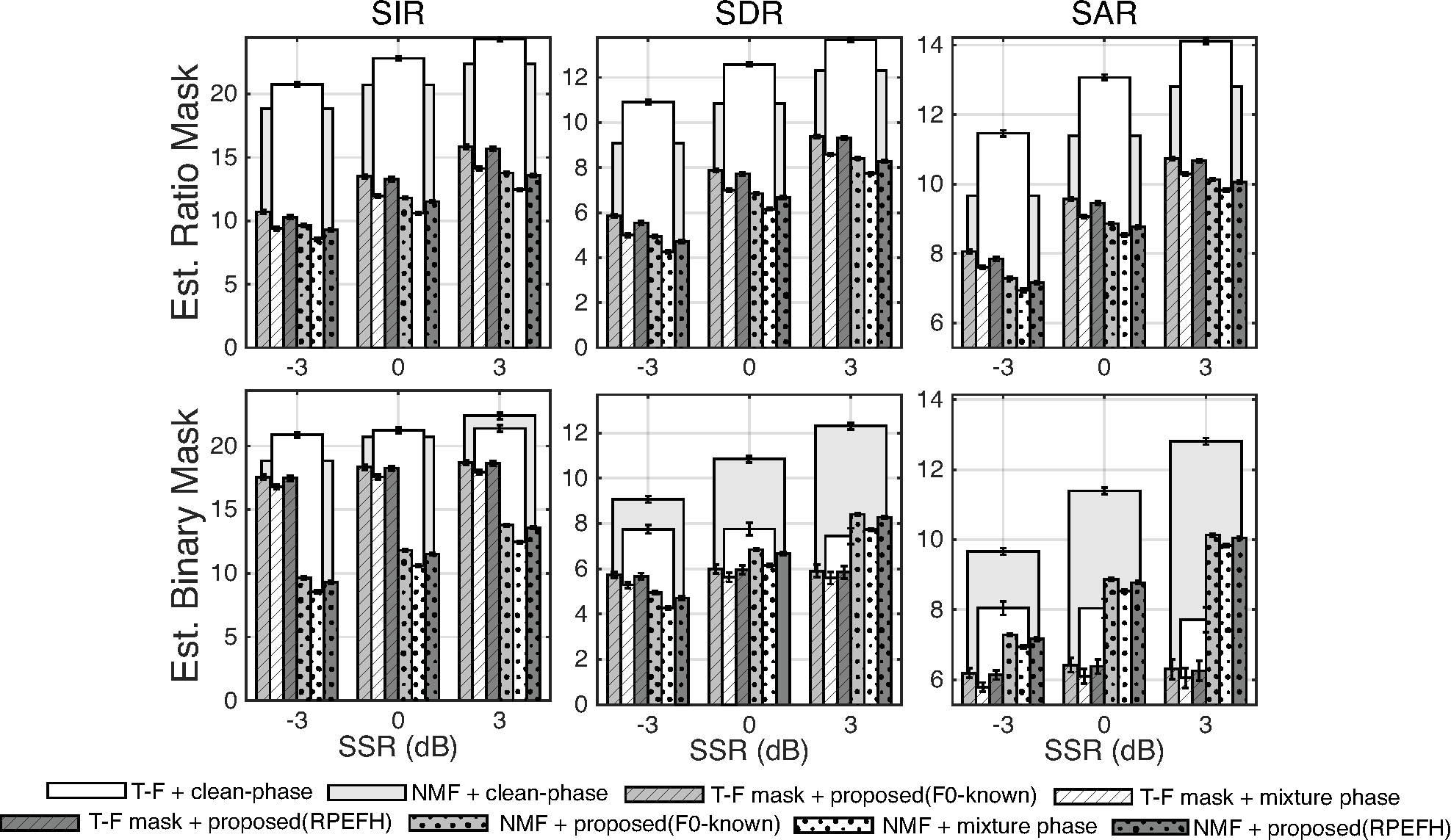

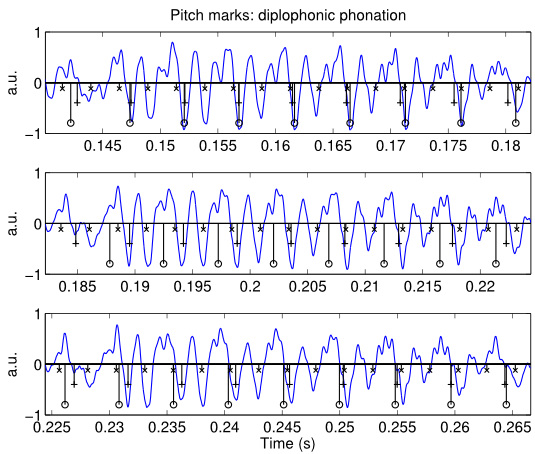

Time-frequency masking is a common solution for the single-channel source separation (SCSS) problem, where the goal is to find a time-frequency mask that separates the underlying sources from an observed mixture. The estimated mask is then applied to the mixed signal to extract the desired signal. During signal reconstruction, the time-frequency-masked spectral amplitude is combined with the mixture phase. This article considers the impact of replacing the mixture spectral phase with an estimated clean spectral phase, obtained with a conventional model-based approach and combined with the estimated magnitude spectrum. As the proposed phase estimator requires an estimate of the fundamental frequency of the underlying signal in the mixture, a robust pitch estimator is proposed. The upper-bound results obtained with the clean phase show the potential of phase-aware processing in single-channel source separation. Also, the experiments demonstrate that replacing the mixture phase with the estimated clean spectral phase consistently improves perceptual speech quality, predicted speech intelligibility, and source separation performance across all signal-to-noise ratios and noise scenarios.
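The reconstruction step described above, combining an estimated magnitude with either the mixture phase or a clean-phase estimate, can be sketched with a simple non-overlapping STFT. Using the oracle clean magnitude and the oracle clean phase reproduces the "upper bound" comparison: with the clean phase the signal is recovered exactly, with the mixture phase it is not. The rectangular, non-overlapping framing and the function names are simplifying assumptions; practical systems use overlapping windows.

```python
import numpy as np

def stft(x, n):
    """Non-overlapping rectangular-window STFT with shape (frames, n // 2 + 1)."""
    frames = len(x) // n
    return np.fft.rfft(x[:frames * n].reshape(frames, n), axis=1)

def istft(X, n):
    """Inverse of `stft` (exact for unmodified spectra)."""
    return np.fft.irfft(X, n=n, axis=1).reshape(-1)

def reconstruct(mag, phase, n):
    """Combine a (masked or estimated) magnitude with a chosen phase and resynthesise."""
    return istft(mag * np.exp(1j * phase), n)

n = 256
t = np.arange(16 * n) / 8000
s = np.sin(2 * np.pi * 220 * t)                         # clean signal
mix = s + 0.5 * np.random.default_rng(0).normal(size=s.size)
S, M = stft(s, n), stft(mix, n)
with_clean_phase = reconstruct(np.abs(S), np.angle(S), n)
with_mixture_phase = reconstruct(np.abs(S), np.angle(M), n)
```

Even with a perfect magnitude, `with_mixture_phase` deviates from `s`, which is the gap that phase-aware processing targets.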

Read the full article.

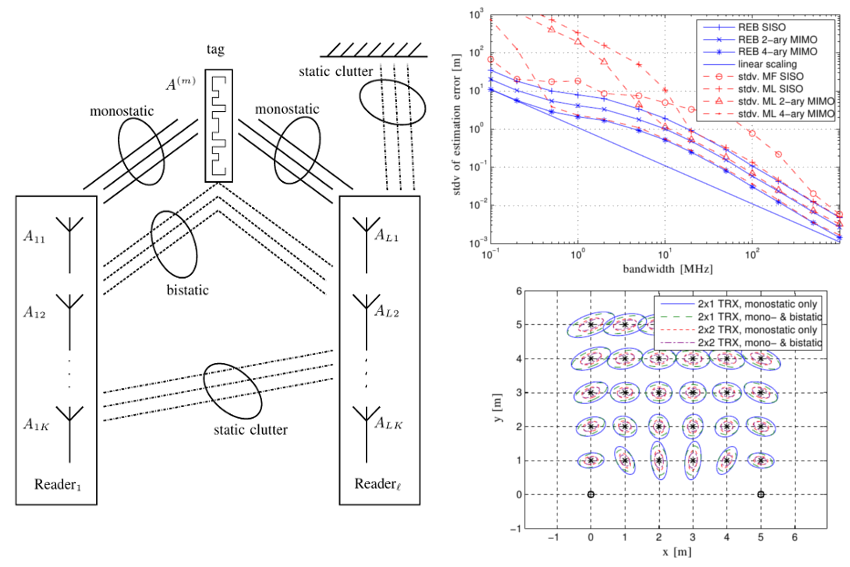

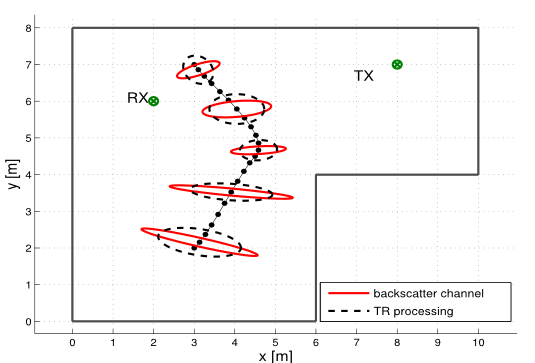

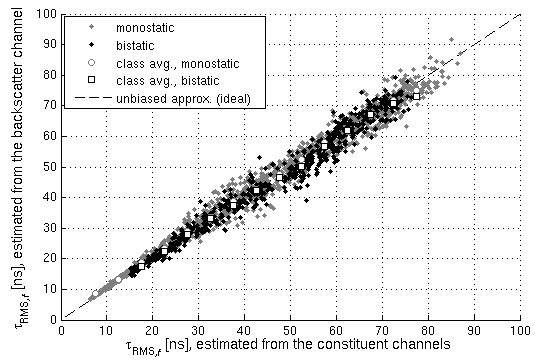

Positioning and ranging in UHF RFID are highly dependent on the channel characteristics. The accuracy of time-of-flight-based ranging systems is fundamentally limited by the available bandwidth. We thus analyze the UHF RFID backscatter channel, formed by the convolution of the individual constituent channels. For this purpose, we present comprehensive wideband channel measurements in two representative scenarios and an analysis with respect to the Rician K-factor of the line-of-sight component, the root-mean-square delay spread, and the coherence distance, all of which influence the potential positioning performance.
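The channel quantities mentioned above can be computed from measured impulse responses with a few lines: the backscatter channel as the convolution of the constituent channels, the RMS delay spread as the second central moment of the power delay profile, and a simple K-factor as the LOS tap power over the remaining power. The sketch uses a single power delay profile for the K-factor, which is a simplification (K is usually estimated from amplitude statistics over many positions); the function names are hypothetical.

```python
import numpy as np

def backscatter_channel(h_forward, h_backward):
    """Discrete-time backscatter channel as the convolution of the two constituent channels."""
    return np.convolve(h_forward, h_backward)

def rms_delay_spread(delays, powers):
    """sqrt(E[tau^2] - E[tau]^2) with the power delay profile as weights."""
    delays = np.asarray(delays, dtype=float)
    p = np.asarray(powers, dtype=float)
    p = p / p.sum()
    mean = np.sum(p * delays)
    return np.sqrt(np.sum(p * delays ** 2) - mean ** 2)

def k_factor_db(powers, los_index=0):
    """K-factor estimate in dB: LOS tap power over the power of all other taps."""
    powers = np.asarray(powers, dtype=float)
    los = powers[los_index]
    return 10 * np.log10(los / (powers.sum() - los))
```

Note that the convolution generally increases the delay spread of the backscatter channel relative to either constituent channel.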

Read the full article.

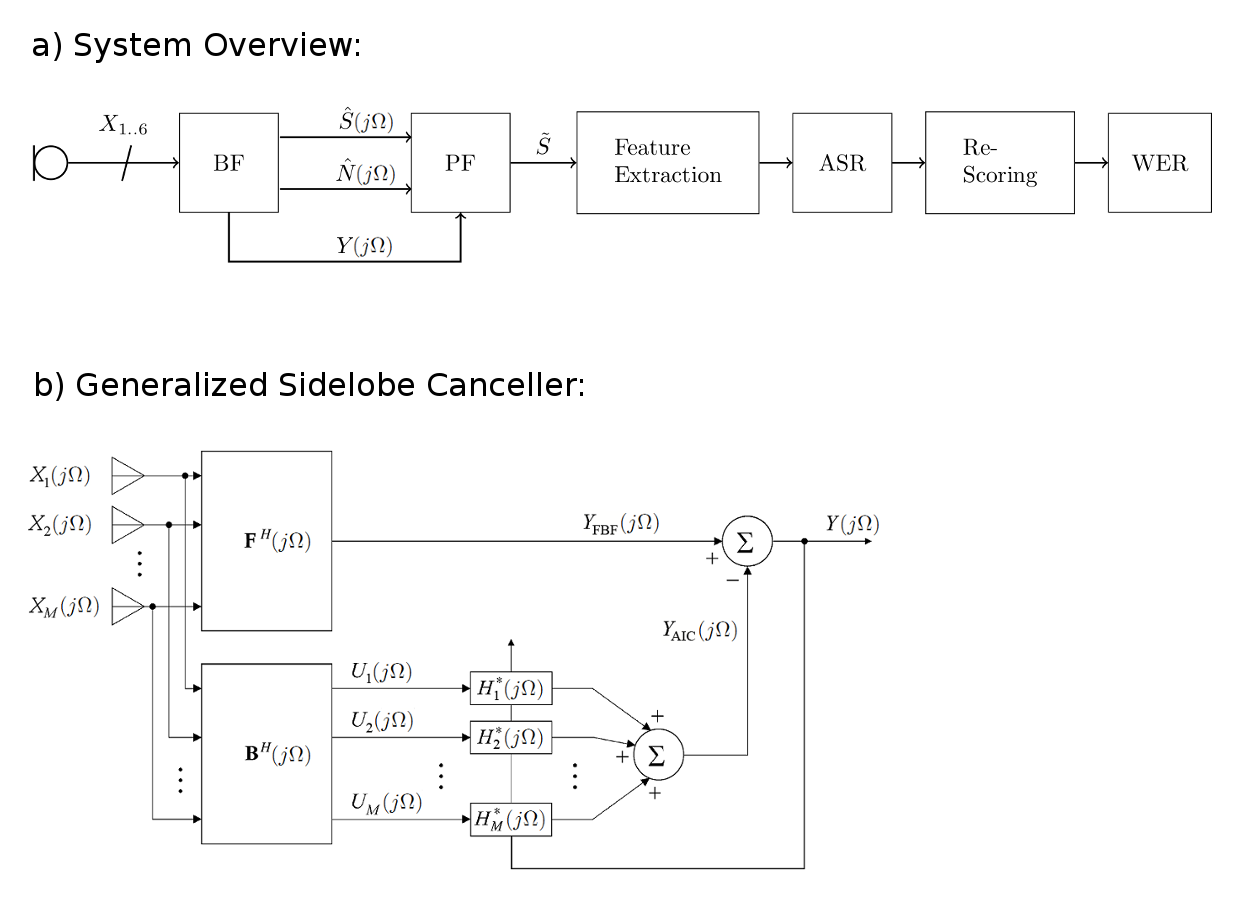

Using speech masks for multi-channel speech enhancement has gained attention in recent years, as it combines the benefits of digital signal processing (beamforming) and machine learning (learning the speech mask from data). We demonstrate how a speech mask can be used to construct the Minimum Variance Distortionless Response (MVDR), Generalized Sidelobe Canceler (GSC) and Generalized Eigenvalue (GEV) beamformers, and an MSE-optimal postfilter. We propose a neural network architecture that learns the speech mask from the spatial information hidden in the multi-channel input data, using the dominant eigenvector of the Power Spectral Density (PSD) matrix of the noisy speech signal as the feature vector. We use CHiME-4 audio data, which contains a single speaker engulfed in ambient noise, to train our network. Depending on the speaker's location and the geometry of the microphone array, the eigenvectors form local clusters, whereas they are randomly distributed for the ambient noise. The neural network learns this clustering from the training data. In a second step, we use the cosine similarity between neighboring eigenvectors as the feature vector, which makes our approach less dependent on the array geometry and the speaker's position. We compare our results against the most prominent model-based and data-driven approaches, using PESQ and PEASS/OPS scores. Our system yields superior results, both in terms of perceptual speech quality and speech mask prediction error.
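How a speech mask turns into an MVDR beamformer can be sketched for a single frequency bin: the mask weights the frames when estimating the speech and noise PSD matrices, the steering vector is taken as the principal eigenvector of the speech PSD matrix, and w = Φn⁻¹ d / (dᴴ Φn⁻¹ d). This is one common mask-based formulation, shown as a sketch; the paper's exact estimators may differ, and the function names are hypothetical.

```python
import numpy as np

def masked_psd(Y, mask):
    """Mask-weighted spatial PSD matrix for one bin; Y: (mics, frames), mask: (frames,)."""
    return (mask * Y) @ Y.conj().T / max(mask.sum(), 1e-12)

def mvdr_weights(phi_s, phi_n):
    """MVDR weights with the principal eigenvector of phi_s as steering vector."""
    _, eigvecs = np.linalg.eigh(phi_s)          # eigenvalues in ascending order
    d = eigvecs[:, -1]
    num = np.linalg.solve(phi_n, d)
    return num / (d.conj() @ num)

# Usage for one bin: s_hat = w.conj() @ Y gives the beamformer output per frame.
rng = np.random.default_rng(0)
mics, frames = 4, 500
d_true = np.exp(1j * np.pi * 0.3 * np.arange(mics))   # hypothetical array response
active = (np.arange(frames) % 2 == 0).astype(float)   # oracle speech-presence mask
s = rng.normal(size=frames) + 1j * rng.normal(size=frames)
noise = 0.3 * (rng.normal(size=(mics, frames)) + 1j * rng.normal(size=(mics, frames)))
Y = np.outer(d_true, s * active) + noise
phi_s, phi_n = masked_psd(Y, active), masked_psd(Y, 1 - active)
w = mvdr_weights(phi_s, phi_n)
```

In the learned setting, `active` would be replaced by the network's mask estimate for each time-frequency bin.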

Read the full article.

Robust indoor positioning at sub-meter accuracy typically requires highly accurate radio channel measurements to extract precise time-of-flight measurements. Emerging UWB transponders such as the DecaWave DW1000 chip can estimate channel impulse responses with a reasonably high bandwidth, yielding a ranging precision below 10 cm. The competitive pricing of these chips allows scientists and engineers, for the first time, to exploit the benefits of UWB for indoor positioning without the need for a massive investment in experimental equipment.

Read the full article.

Error vector magnitude (EVM) and noise power ratio (NPR) measurements are well-known approaches to quantify the inband performance of communication systems and their respective components. In contrast to NPR, EVM is an important design specification and is widely adopted by modern communication standards such as 802.11 (WLAN). However, EVM requires full demodulation, whereas NPR is simple, requiring only power measurements in different frequency bands. Consequently, NPR measurements avoid bias due to insufficient synchronization and can be readily adapted to different standards and bandwidths. We argue that NPR-inspired measurements can replace EVM in many practically relevant cases. We show how to set up the signal generation and analysis for power-ratio-based estimation of EVM in orthogonal frequency-division multiplexing (OFDM) systems impaired by additive noise, power amplifier (PA) nonlinearity, phase noise, and in-phase/quadrature (IQ) imbalance. Our method samples frequency-dependent inband errors via a single measurement and can either include or exclude the effect of IQ mismatch by using asymmetric or symmetric stopband locations, respectively. We present measurement results using an 802.11ac PA at different backoffs, corroborating the practicability and accuracy of our method. Using the same measurement chain, the mean absolute deviation from the EVM is less than 0.35 dB.
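The power-ratio idea can be sketched as follows: leave a few subcarriers empty (a stopband) inside an OFDM signal, pass the signal through the device, and compare the power that appears in the stopband (error only) with the power on the loaded subcarriers (signal plus error). The subcarrier sets, QPSK loading and function names below are hypothetical; the IQ-imbalance handling via symmetric or asymmetric stopbands from the paper is not modelled.

```python
import numpy as np

def ofdm_frequency_symbols(n_fft, n_symbols, loaded, rng):
    """QPSK on the `loaded` subcarriers, zeros elsewhere (including the stopband)."""
    X = np.zeros((n_symbols, n_fft), dtype=complex)
    bits = rng.choice([-1.0, 1.0], size=(n_symbols, len(loaded), 2))
    X[:, loaded] = (bits[..., 0] + 1j * bits[..., 1]) / np.sqrt(2)
    return X

def npr_evm_db(dut, n_fft=256, n_symbols=400, band=np.arange(8, 120),
               stop=np.arange(60, 64), rng=None):
    """EVM estimate in dB from the power that falls into a notched stopband.

    `dut` maps the time-domain signal (symbols x samples) to the device output.
    """
    rng = np.random.default_rng() if rng is None else rng
    loaded = np.setdiff1d(band, stop)
    X = ofdm_frequency_symbols(n_fft, n_symbols, loaded, rng)
    x = np.fft.ifft(X, axis=1) * np.sqrt(n_fft)        # unitary IFFT to time domain
    Y = np.fft.fft(dut(x), axis=1) / np.sqrt(n_fft)    # unitary FFT of the output
    p_stop = np.mean(np.abs(Y[:, stop]) ** 2)           # error power only
    p_loaded = np.mean(np.abs(Y[:, loaded]) ** 2)       # signal plus error power
    return 10 * np.log10(p_stop / (p_loaded - p_stop))
```

Only FFTs and power averages are needed, so no synchronization to the data symbols or demodulation is involved.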

Read the full article.

We propose a closed-form approximation of the intractable KL divergence objective for variational inference in neural networks. The approximation is based on a probabilistic forward pass in which we successively propagate probabilities through the network. Unlike existing variational inference schemes, which typically rely on stochastic gradients that often suffer from high variance, our method has a closed-form gradient. Furthermore, the probabilistic forward pass inherently computes expected predictions together with uncertainty estimates at the outputs. In experiments, we show that our model improves the performance of plain feed-forward neural networks. Moreover, we show that our closed-form approximation works well compared to model averaging and that our model is capable of producing reasonable uncertainties in regions where no data is observed.
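A probabilistic forward pass of this kind can be illustrated by propagating a diagonal Gaussian (mean and variance per unit) through linear layers and ReLUs using moment matching; for a ReLU, the mean and variance of max(0, X) with Gaussian X are available in closed form. This simplified sketch keeps the weights fixed and assumes independent units, whereas the paper places distributions over the network parameters; the function names are hypothetical.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def linear_moments(mean, var, W, b):
    """Moments of W x + b for independent inputs and fixed weights."""
    return W @ mean + b, (W ** 2) @ var

def relu_moments(mean, var):
    """Exact mean and variance of max(0, X) for X ~ N(mean, var), elementwise."""
    var = np.maximum(var, 1e-12)                 # guard against zero variance
    std = np.sqrt(var)
    alpha = mean / std
    pdf = np.exp(-0.5 * alpha ** 2) / math.sqrt(2 * math.pi)
    cdf = 0.5 * (1.0 + _erf(alpha / math.sqrt(2)))
    m1 = mean * cdf + std * pdf                  # E[max(0, X)]
    m2 = (mean ** 2 + var) * cdf + mean * std * pdf   # E[max(0, X)^2]
    return m1, np.maximum(m2 - m1 ** 2, 0.0)

def probabilistic_forward(x_mean, x_var, layers):
    """Propagate mean/variance through (W, b) layers with ReLUs between them."""
    m, v = x_mean, x_var
    for i, (W, b) in enumerate(layers):
        m, v = linear_moments(m, v, W, b)
        if i < len(layers) - 1:
            m, v = relu_moments(m, v)
    return m, v
```

The output variance returned by `probabilistic_forward` is the kind of uncertainty estimate that a single deterministic pass would not provide.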

Read the full article.

Belief propagation (BP) is an iterative method to perform approximate inference on arbitrary graphical models. Whether it converges, and whether the solution is a unique fixed point, depends on both the structure and the parametrization of the model. To understand this dependence, it is interesting to find all fixed points.

We formulate a set of polynomial equations, the solutions of which correspond to BP fixed points. Experiments on binary Ising models show how our method is capable of obtaining all fixed points.
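A much simpler special case already shows how multiple fixed points arise. If all variables have the same degree, coupling J and field θ, and all messages are assumed equal, the BP fixed-point condition collapses to one scalar equation, u = atanh(tanh(J) · tanh(θ + (degree − 1) · u)). The sketch finds all such symmetric fixed points by sign changes on a grid plus bisection and checks local stability via the slope of the update. This is only the symmetric case with 1-D root finding, not the polynomial-system method of the paper; the function names are hypothetical.

```python
import numpy as np

def bp_map(u, J, theta, degree):
    """Symmetric BP message update for a homogeneous binary pairwise model."""
    return np.arctanh(np.tanh(J) * np.tanh(theta + (degree - 1) * u))

def bp_map_slope(u, J, theta, degree):
    """Derivative of bp_map; |slope| < 1 means locally stable (undamped, symmetric)."""
    t = np.tanh(J)
    th = np.tanh(theta + (degree - 1) * u)
    return t * (degree - 1) * (1 - th ** 2) / (1 - (t * th) ** 2)

def symmetric_fixed_points(J, theta, degree, n_grid=100_000, iters=100):
    """All roots of bp_map(u) - u; they lie in [-|J|, |J|] since |atanh(t tanh x)| <= |J|."""
    g = lambda u: bp_map(u, J, theta, degree) - u
    u_max = abs(J) + 1.0
    grid = np.linspace(-u_max, u_max, n_grid)      # even n_grid: 0 is not a grid point
    vals = g(grid)
    roots = []
    for k in np.flatnonzero(np.sign(vals[:-1]) != np.sign(vals[1:])):
        lo, hi = grid[k], grid[k + 1]
        for _ in range(iters):                      # bisection refinement
            mid = 0.5 * (lo + hi)
            if np.sign(g(mid)) == np.sign(g(lo)):
                lo = mid
            else:
                hi = mid
        roots.append(0.5 * (lo + hi))
    return roots

roots = symmetric_fixed_points(J=0.5, theta=0.0, degree=4)   # three fixed points
```

For J = 0.5 and degree 4, the slope at u = 0 exceeds one, so the trivial fixed point is unstable while the two outer fixed points are stable.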

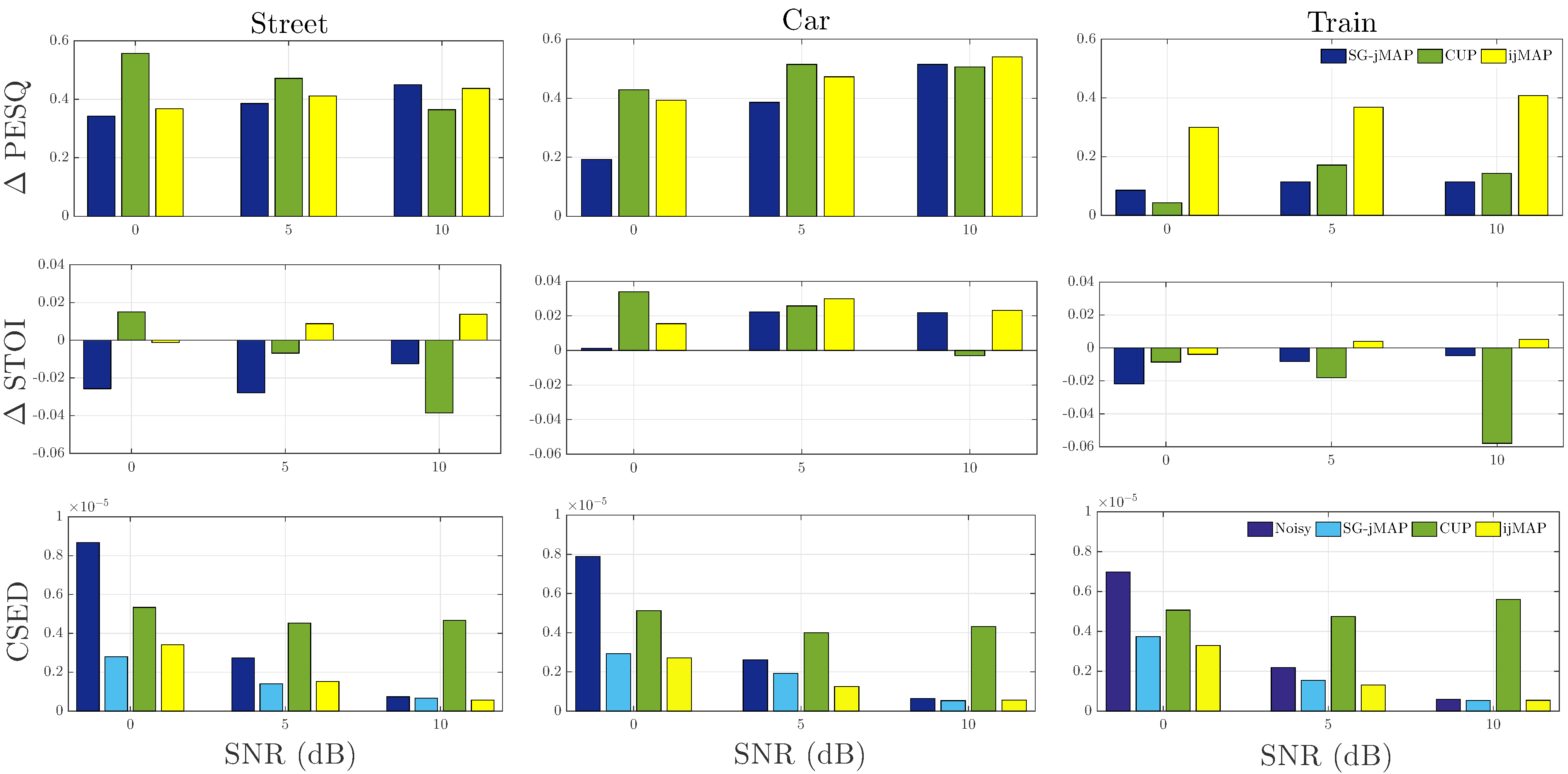

Within the last three decades, research in single-channel speech enhancement has mainly focused on filtering the noisy spectral amplitude, with comparatively little attention paid to phase-based signal processing. Recently, several phase-aware algorithms based on phase-sensitive signal models have been proposed for speech enhancement using the minimum mean squared error (MMSE) criterion, achieving improved performance over conventional phase-insensitive approaches. In this paper, we propose an iterative joint maximum a posteriori (MAP) amplitude and phase estimator (ijMAP) that assumes a non-uniform phase distribution. Experimental results demonstrate the effectiveness of the proposed method in recovering both amplitude and phase in noise, as confirmed by instrumental measures of perceived quality, speech intelligibility and phase estimation error. The proposed method jointly improves perceived quality and speech intelligibility compared to the phase-blind joint MAP estimator, with performance comparable to the complex MMSE estimator.
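The iterative joint MAP idea can be illustrated for a single STFT coefficient Y = A·e^{jφ} + N by alternating between a phase update and an amplitude update. With complex Gaussian noise of variance σ² and a von Mises phase prior (mean μ, concentration κ), the phase step has the closed form φ = angle(2AY/σ² + κe^{jμ}). The sketch assumes a flat amplitude prior (A ≥ 0) for simplicity, which differs from the amplitude model in the paper; it is not the ijMAP estimator itself, and the function name is hypothetical.

```python
import numpy as np

def ijmap_sketch(Y, sigma2, mu, kappa, iters=20):
    """Alternating MAP estimates of amplitude A and phase phi for Y = A e^{j phi} + N.

    Assumptions: complex Gaussian noise (variance sigma2), von Mises phase prior
    (mean mu, concentration kappa) and a flat amplitude prior restricted to A >= 0.
    """
    A, phi = np.abs(Y), np.angle(Y)
    for _ in range(iters):
        # Phase step: maximise 2 A Re(Y e^{-j phi}) / sigma2 + kappa cos(phi - mu)
        phi = np.angle(2 * A * Y / sigma2 + kappa * np.exp(1j * mu))
        # Amplitude step: maximise -|Y - A e^{j phi}|^2 / sigma2 over A >= 0
        A = np.maximum(np.real(Y * np.exp(-1j * phi)), 0.0)
    return A, phi

# Usage: a weak prior leaves the noisy phase unchanged, a strong prior pulls it to mu.
A_weak, phi_weak = ijmap_sketch(2.0 * np.exp(1j * 0.7), sigma2=0.1, mu=0.2, kappa=0.0)
A_strong, phi_strong = ijmap_sketch(2.0 * np.exp(1j * 0.7), sigma2=0.1, mu=0.2, kappa=1e9)
```

The concentration κ controls how strongly the phase prior overrides the noisy observation; κ = 0 recovers the phase-blind case.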

Read the full article.

An overview of the challenging new topic of phase-aware signal processing

Read the full article.